Contrast Tests for Genetic Interactions: A Comprehensive Guide to Methods, Applications, and Best Practices

Abstract

Understanding genetic interactions is crucial for unraveling the complex architecture of diseases and traits, yet their detection poses significant statistical and computational challenges. This article provides a comprehensive overview for researchers and drug development professionals on the landscape of contrast tests for genetic interactions. We explore the foundational principles of genetic interaction, survey a wide array of methodological approaches from traditional statistical tests to advanced machine learning and network-based frameworks, address critical troubleshooting and optimization strategies for real-world application, and provide a comparative analysis of method performance. By synthesizing insights from recent methodological advances and large-scale applications, this guide aims to equip scientists with the knowledge to select, implement, and validate appropriate interaction detection methods for their specific research contexts, ultimately accelerating the discovery of novel biological insights and therapeutic targets.

The Foundation of Genetic Interactions: From Biological Concepts to Analytical Challenges

Epistasis, a concept introduced by William Bateson over a century ago, describes the phenomenon where the effect of one gene is dependent on the presence of one or more modifier genes [1]. This genetic interaction has been fundamentally important for understanding both the structure and function of genetic pathways and the evolutionary dynamics of complex genetic systems [1]. In the context of complex human diseases, epistasis has been increasingly recognized for its ubiquity and its critical role in susceptibility to common conditions such as Alzheimer's disease [2]. The advent of high-throughput functional genomics and systems biology approaches has generated a renewed appreciation for studying these gene interactions in a unified, quantitative manner to unravel the complex genetic architecture underlying disease susceptibility and progression [1].

The terminology surrounding epistasis has evolved into three major categories, each with distinct implications for research methodologies. Compositional epistasis refers to the traditional usage where one allelic effect is blocked by an allele at another locus, requiring combinatorial substitution of alleles against a standard background [1]. Statistical epistasis, derived from R.A. Fisher's work, describes the average deviation of combinations of alleles at different loci estimated over all other genotypes present within a population [1]. This statistical framework is particularly relevant for genome-wide association studies (GWAS) of complex diseases, where enumerating all possible genetic interactions is impossible. A third category, functional epistasis, describes molecular interactions between proteins and other genetic elements, though this usage is sometimes discouraged in favor of more specific terms like "protein-protein interaction" to maintain clarity [1].

Contrast Testing Approaches in Epistasis Research

Methodological Framework for Detecting Epistasis

The detection of epistasis in complex disease architecture relies on multiple methodological approaches, each with distinct strengths and applications. For quantitative phenotypes, six epistasis detection methods have been systematically evaluated: EpiSNP, Matrix Epistasis, MIDESP, PLINK Epistasis, QMDR, and REMMA [2]. These tools employ different statistical frameworks to identify gene-gene interactions, with performance varying significantly across interaction types. Simulation studies modeling various pairwise interactions between disease-associated SNPs—including dominant, multiplicative, recessive, and XOR interactions—reveal that each tool exhibits strong performance for certain interaction types but weaker performance for others [2].

Traditional GWAS methodologies that test one marker at a time have largely ignored the complex genomic context of disease susceptibility [3]. Given the polygenic nature of complex diseases, disease risk likely emerges from synergistic results of multiple genes operating within biological networks [3]. Statistical methods for detecting genetic interactions between two single markers generally fall into two categories: logistic regression methods that directly relate disease risks to two genetic markers in a prospective fashion, and linkage disequilibrium (LD)-based methods that retrospectively detect genetic interactions by comparing "association" between two markers in case and control populations [3]. Despite their different motivations, these two categories of methods are closely related analytically.
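The retrospective, LD-based idea can be illustrated with a simple case-control contrast of the inter-locus genotype correlation. The sketch below is not one of the statistics defined in [3]; it swaps in the Pearson correlation of allele counts with a Fisher z-transform as a stand-in. The function name `ld_contrast_z` and the simulated data are hypothetical.

```python
import math
import numpy as np

def ld_contrast_z(g1, g2, is_case):
    """Contrast the genotype correlation between two loci in cases vs. controls.

    Illustrative stand-in for an LD-based interaction test: Fisher z-transform
    of the Pearson correlation of allele counts (not the statistics in [3]).
    """
    g1, g2, is_case = map(np.asarray, (g1, g2, is_case))
    z, n = [], []
    for mask in (is_case == 1, is_case == 0):
        r = np.corrcoef(g1[mask], g2[mask])[0, 1]
        z.append(np.arctanh(r))              # Fisher z-transform
        n.append(int(mask.sum()))
    se = math.sqrt(1.0 / (n[0] - 3) + 1.0 / (n[1] - 3))
    stat = (z[0] - z[1]) / se
    p = math.erfc(abs(stat) / math.sqrt(2.0))  # two-sided normal p-value
    return stat, p

# Simulated example: the two loci are correlated among cases only (assumed scenario)
rng = np.random.default_rng(0)
n_per_group = 2000
is_case = np.repeat([1, 0], n_per_group)
g1 = rng.binomial(2, 0.3, size=2 * n_per_group)
g2 = rng.binomial(2, 0.3, size=2 * n_per_group)
copy = (rng.random(2 * n_per_group) < 0.3) & (is_case == 1)
g2 = np.where(copy, g1, g2)

stat, p = ld_contrast_z(g1, g2, is_case)
print(f"contrast z = {stat:.2f}, p = {p:.2e}")
```

A contrast of this kind is only interpretable as interaction when the two loci are unlinked, so that any correlation in controls reflects noise rather than background LD.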

Performance Comparison of Epistasis Detection Methods

Table 1: Performance comparison of epistasis detection methods across interaction types

| Method | Overall Detection Rate | Dominant Interactions | Recessive Interactions | Multiplicative Interactions | XOR Interactions |
|---|---|---|---|---|---|
| MDR | 60% | Not reported | Not reported | 54% | 84% |
| MIDESP | Not reported | Not reported | Not reported | 41% | 50% |
| PLINK Epistasis | Not reported | 100% | Not reported | Not reported | Not reported |
| Matrix Epistasis | Not reported | 100% | Not reported | Not reported | Not reported |
| REMMA | Not reported | 100% | Not reported | Not reported | Not reported |
| EpiSNP | 7% | Not reported | 66% | Not reported | Not reported |

Table 2: Tests for gene-gene interaction in genome-wide association studies

| Test Category | Specific Tests | Key Features | Applicability |
|---|---|---|---|
| Logit-based Tests | Tlogit, TOR | Directly model disease risk with genetic markers; prospective approach | Testing interaction between two single markers |
| LD-based Tests | TLD, TLD*, TCLD | Compare association between two markers in case and control groups; retrospective approach | Detecting interaction between two unlinked loci |
| Case-only Statistics | TPearson, TLDc, TORc | Leverage special features of GWAS data to increase statistical power | Genome-wide screening for interactions |
| Overall Association Tests | Tlogisticall, Tχ2, Tkernel | Detect association signals of a pair of loci allowing for both interaction and main effects | Detecting overall association in presence of interactions |

Recent benchmarking studies have revealed that no single method consistently outperforms others across all types of epistasis [2]. This performance variability, combined with the reality that specific types of epistasis present in a dataset are often unknown, suggests that using multiple epistasis detection algorithms in combination may be more effective for obtaining comprehensive results than relying on any single method [2]. For example, while MDR achieved the highest overall detection rate of 60% in simulation studies, it was particularly effective for XOR interactions (84% detection rate) but less sensitive to other interaction types [2]. Conversely, EpiSNP had the lowest overall detection rate (7%) but was particularly effective for detecting recessive interactions (66% detection rate) [2].

Experimental Protocols for Epistasis Detection

Protocol 1: Genome-Wide Epistasis Screening Using Regression-Based Methods

This protocol outlines the steps for conducting genome-wide epistasis screening using regression-based approaches, which form the foundation for many epistasis detection strategies in complex disease research.

Materials and Reagents:

- Genotype data from GWAS (quality-controlled)

- Phenotype data (quantitative or binary)

- High-performance computing resources

- Statistical software (R, Python, or specialized epistasis detection tools)

Procedure:

- Data Preparation and Quality Control: Filter genetic markers based on standard GWAS quality control metrics, including call rate (>95%), minor allele frequency (>1%), and Hardy-Weinberg equilibrium (p > 1×10⁻⁶). Impute missing genotypes using reference panels.

- Covariate Adjustment: Adjust phenotype data for relevant covariates such as age, sex, and principal components to account for population stratification.

- Model Specification: Implement the regression model for epistasis detection. For quantitative phenotypes, use the linear regression framework: Y = β0 + β1G1 + β2G2 + β3G1×G2 + ε, where Y is the phenotype, G1 and G2 are the genotypes at the two loci (e.g., coded as allele counts 0, 1, 2), G1×G2 represents the interaction term, and ε is the residual error.

- Significance Testing: Assess the statistical significance of the interaction term (β3) using appropriate multiple testing correction methods (e.g., Bonferroni, false discovery rate).

- Validation: Replicate significant interactions in independent cohorts and perform functional validation where possible.

Troubleshooting Tips:

- Computational demands can be substantial for genome-wide epistasis scans. Consider using efficient algorithms like BOOST for initial screening [3].

- Collinearity between main effects and interaction terms can inflate standard errors. Centering variables before creating interaction terms can mitigate this issue.

- Population stratification can create spurious epistatic signals. Always include principal components as covariates in the model.
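The model-specification and significance-testing steps of Protocol 1 can be sketched for a single SNP pair as follows. This is a minimal illustration under stated assumptions, not the implementation of any of the tools named above: the function name `interaction_test_linear` is hypothetical, the data are simulated, genotypes are centred before the product term is formed (as suggested in the troubleshooting tips), and the p-value uses a normal approximation that assumes a large sample.

```python
import math
import numpy as np

def interaction_test_linear(y, g1, g2, covariates=None):
    """OLS fit of Y = b0 + b1*G1 + b2*G2 + b3*G1*G2 (+ covariates) + e.

    Returns (b3, t statistic, two-sided p-value). The p-value uses a normal
    approximation to the t distribution (large-sample assumption).
    """
    y = np.asarray(y, dtype=float)
    g1c = np.asarray(g1, dtype=float) - np.mean(g1)  # centre genotypes before
    g2c = np.asarray(g2, dtype=float) - np.mean(g2)  # forming the product term
    cols = [np.ones_like(y), g1c, g2c, g1c * g2c]
    if covariates is not None:
        # covariates: array of shape (n_samples, n_covariates), e.g. age, sex, PCs
        cols.extend(np.asarray(covariates, dtype=float).T)
    X = np.column_stack(cols)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    dof = len(y) - X.shape[1]
    sigma2 = resid @ resid / dof
    cov_beta = sigma2 * np.linalg.inv(X.T @ X)
    t = beta[3] / math.sqrt(cov_beta[3, 3])
    p = math.erfc(abs(t) / math.sqrt(2.0))
    return beta[3], t, p

# Simulated example with a true interaction effect of 0.4 (assumed values)
rng = np.random.default_rng(1)
n = 5000
g1 = rng.binomial(2, 0.3, size=n)
g2 = rng.binomial(2, 0.3, size=n)
y = 0.5 * g1 + 0.2 * g2 + 0.4 * g1 * g2 + rng.normal(size=n)

b3, t, p = interaction_test_linear(y, g1, g2)
print(f"b3 = {b3:.3f}, t = {t:.2f}, p = {p:.2e}")
```

Centring changes the main-effect estimates and their standard errors but leaves the interaction coefficient itself unchanged, which is why it is a safe default when only β3 is being tested.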

Protocol 2: Biological Validation of Epistatic Interactions

This protocol describes approaches for validating statistically identified epistatic interactions through biological experiments, using rice heading date genes as an exemplar [4].

Materials and Reagents:

- Near-isogenic lines (NILs) differing at target quantitative trait genes (QTGs)

- Yeast two-hybrid system components

- Split-luciferase complementation assay reagents

- Plant growth facilities or appropriate biological system for phenotype assessment

Procedure:

- Development of Near-Isogenic Lines: Create NILs that differ specifically at the identified QTGs through repeated backcrossing and marker-assisted selection.

- Phenotypic Characterization: Measure the target phenotype (e.g., heading date in rice) and related agronomic traits across different NILs under controlled conditions.

- Physical Interaction Testing:

- Yeast Two-Hybrid Assay: Clone coding sequences of identified QTGs into both bait and prey vectors. Co-transform into yeast reporter strains and assess interaction through growth on selective media and reporter gene activation.

- Split-Luciferase Complementation Assay: Fuse QTGs to complementary fragments of luciferase and co-express in an appropriate system. Measure luciferase activity as an indicator of protein-protein interaction.

- Epistatic Network Construction: Integrate statistical and experimental evidence to construct a comprehensive genetic interaction network, highlighting key hubs and interactions.

Troubleshooting Tips:

- For weak or transient interactions, consider alternative methods like co-immunoprecipitation.

- Include both positive and negative controls in all interaction assays.

- Account for genetic background effects when interpreting results from NILs.

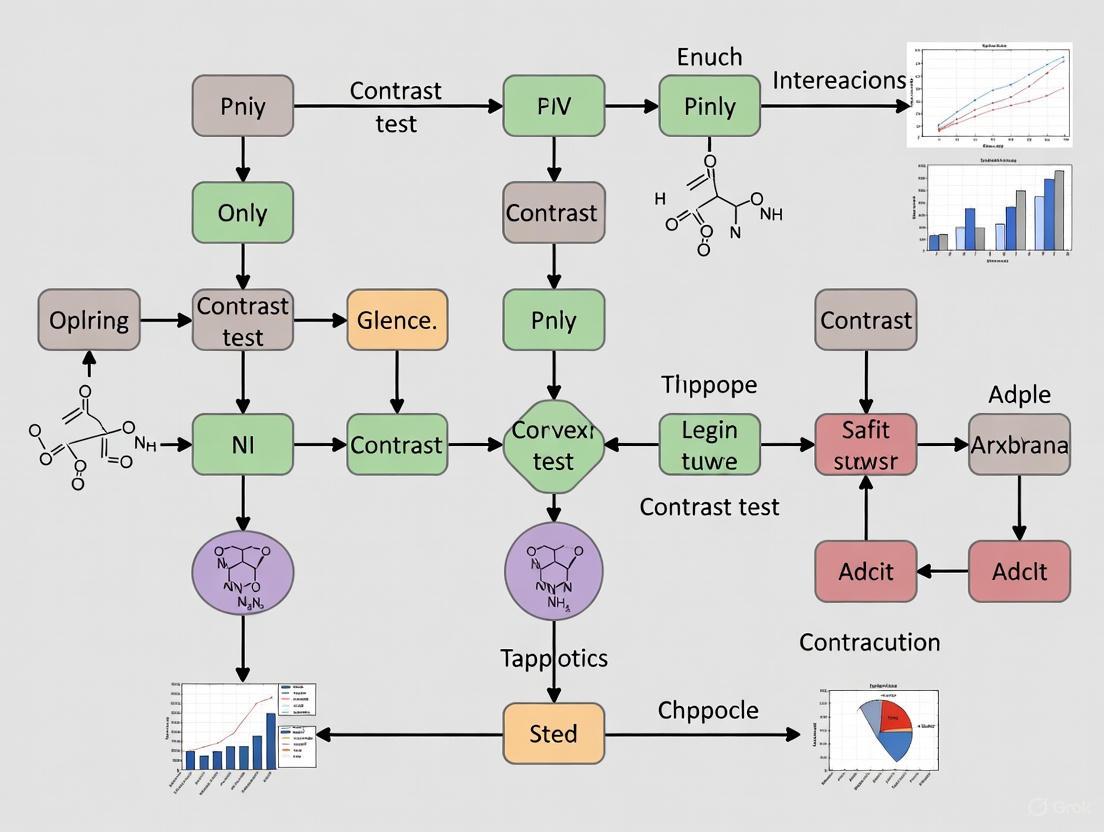

Visualization of Epistasis Research Workflows

Diagram 1: Comprehensive workflow for epistasis detection and validation in complex disease research. The process begins with data collection and quality control, proceeds through method selection and epistasis detection, and culminates in statistical and biological validation before network construction and biological interpretation.

Table 3: Key research reagent solutions for epistasis studies

| Category | Specific Tool/Resource | Function/Application | Example Use Cases |
|---|---|---|---|
| Software Tools | PLINK Epistasis | Genome-wide interaction analysis for quantitative phenotypes | Detection of dominant interactions [2] |
| Software Tools | Matrix Epistasis | Efficient matrix-based computations for epistasis detection | Large-scale GWAS with quantitative traits [2] |
| Software Tools | MDR | Non-parametric method for detecting gene-gene interactions | Detection of XOR and multiplicative interactions [2] |
| Software Tools | MIDESP | Mutual-information-based detection of epistatic SNP pairs | Identification of complex interactions in large datasets [2] |
| Biological Validation | Near-isogenic Lines (NILs) | Validation of epistatic effects in controlled genetic backgrounds | Confirmation of QTG interactions in rice [4] |
| Biological Validation | Yeast Two-Hybrid System | Detection of physical protein-protein interactions | Testing direct molecular interactions between gene products [4] |
| Biological Validation | Split-Luciferase Complementation | Assessment of protein interactions in living cells | Validation of putative epistatic partners [4] |
| Data Resources | Adolescent Brain Cognitive Development (ABCD) Dataset | Real-world dataset for method validation | Application to externalizing behavior phenotype [2] |
| Data Resources | EpiGEN | Simulated dataset generation for method benchmarking | Performance evaluation across interaction types [2] |

Advanced Applications and Future Directions

The integration of epistasis detection into broader genomic analyses represents a promising frontier in complex disease research. Recent approaches that connect gene-environment interactions with Mendelian randomization frameworks demonstrate how interaction analysis can be enhanced through methodological innovation [5]. These approaches screen for interactions across the genome by identifying genetic variants that depart from the expected relationship between marginal and main effects, effectively testing for the combined effect of G×E interaction and mediation [5].

In agricultural genomics, the construction of genetic interaction networks for quantitative traits like rice heading date has demonstrated the practical utility of epistasis research [4]. These networks reveal that epistatic effects can account for approximately 12.5% of additive effects of identified QTL, highlighting their substantial contribution to phenotypic variation [4]. Furthermore, the discovery that interacting QTG pairs often influence multiple agronomic traits underscores the pleiotropic nature of epistatic networks and their importance for comprehensive understanding of complex traits [4].

Future directions in epistasis research will likely focus on integrating multi-omics data, developing more powerful statistical methods that account for biological context, and creating unified frameworks that bridge statistical epistasis with biological mechanism. As these advancements mature, contrast testing approaches for genetic interactions will become increasingly sophisticated, ultimately enhancing our ability to decipher the complex genetic architecture of human diseases and agriculturally important traits.

The Problem of Missing Heritability and the Role of Interactions

The phenomenon of "missing heritability" presents a significant challenge in human genetics. Genome-wide association studies (GWAS) have successfully identified numerous variants associated with common diseases and traits, yet these discoveries typically explain only a minority of the heritability estimated from family and twin studies [6]. While undiscovered variants certainly account for some of this gap, a substantial portion may arise from genetic interactions that create what has been termed "phantom heritability": heritability that appears to be missing because estimates of total heritability are inflated when interaction effects are not accounted for [6] [7]. This application note examines contrast test approaches for detecting and characterizing these interactions, providing methodological guidance for researchers investigating complex genetic architectures.

Defining Genetic Interactions and Phantom Heritability

Statistical and Biological Definitions of Interaction

Genetic interactions (epistasis) occur when the combined effect of variants at two or more loci deviates from the effect expected if the loci acted independently [8] [9]. What counts as "expected" depends on the measurement scale. For fitness measured in model-organism screens the null model is typically multiplicative: if the fitness (or disease risk) of a double mutant differs significantly from the product of the individual single mutant fitness values, a genetic interaction is present [8].

- Negative interactions: Double mutation leads to a more severe fitness defect than expected (e.g., synthetic sickness/lethality)

- Positive interactions: Double mutation leads to a less severe effect than expected (e.g., suppression) [8] [9]

The Phantom Heritability Concept

The proportion of heritability explained by known variants (πexplained) is calculated as h²known/h²all, where h²known is the heritability due to known variants (calculated from their observed effects) and h²all is the total heritability inferred from population data [6]. Current estimators of h²all often assume a purely additive genetic architecture. When interactions are present, these estimates can be severely inflated, creating the illusion of "missing" heritability even when all relevant variants have been identified [6] [7].

Table 1: Comparative Heritability Explanations for Crohn's Disease Under Different Genetic Architecture Models

| Genetic Architecture Model | Heritability Explained by Known Loci | Phantom Heritability | Portion of Missing Heritability Accounted For |
|---|---|---|---|
| Strictly Additive | 21.5% | 0% | 0% |
| Limiting Pathway (LP) with 3-way Interactions | 21.5% | 62.8% | 80% |

For complex diseases like Crohn's disease (with 71 identified risk loci), interactions among just three pathways could explain approximately 80% of the currently missing heritability [6].
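The 80% figure follows directly from the other two columns of the table. The short check below assumes that both percentages are expressed as fractions of the apparent (additive-model) heritability; the function name is hypothetical.

```python
def share_of_missing_explained(pi_explained, phantom):
    """Fraction of the apparent 'missing' heritability attributable to phantom heritability.

    Assumes both inputs are fractions of the apparent total heritability.
    """
    missing = 1.0 - pi_explained      # apparent missing heritability
    return phantom / missing

share = share_of_missing_explained(pi_explained=0.215, phantom=0.628)
print(f"{share:.0%}")  # prints "80%"
```

In other words, 78.5% of the heritability appears to be missing under the additive model, and phantom heritability of 62.8% covers 0.628 / 0.785 = 0.80 of that gap.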

Statistical Testing Approaches for Genetic Interactions

Methodological Categories for Interaction Detection

Table 2: Statistical Methods for Detecting Gene-Gene Interactions in GWAS

| Method Category | Representative Tests | Key Features | Applicable Scenarios |
|---|---|---|---|
| Regression-Based | Tlogit, TOR [3] | Tests specific parameters in logistic regression models; prospective approach | Testing pre-specified interactions with adequate sample size |
| LD-Based | TLD, TLD*, TCLD [3] | Compares linkage disequilibrium patterns in cases vs. controls; retrospective approach | Genome-wide screening for interactions between unlinked loci |
| Case-Only | TPearson, TLDc, TORc [3] | Enhanced power when the two loci are independent in the source population | Initial screening when specific interactions are not hypothesized |
| Machine Learning | Visible Neural Networks [10] | Detects non-linear interactions without pre-specification; handles high-dimensional data | Large datasets with complex interaction patterns |
| Network-Based | E-MAP, SGA [8] [9] | Quantitative interaction mapping using mutant libraries | Model organisms with available mutant collections |

Protocol: Logistic Regression Testing for Gene-Gene Interaction

Purpose: To detect statistical interaction between two genetic markers in their association with a binary trait.

Materials:

- Genotype data for two markers (coded as 0, 1, 2 indicating allele count)

- Phenotype data (coded as 0 for controls, 1 for cases)

- Statistical software with logistic regression capabilities (R, PLINK, Python)

Procedure:

- Data Preparation: Ensure quality control of genotype data (Hardy-Weinberg equilibrium, missingness, minor allele frequency)

- Model Specification:

- Fit a logistic regression model including both main effects and their interaction term:

- logit(P(D=1)) = β0 + β1G1 + β2G2 + β3G1G2

- Where G1 and G2 are genotype values for the two markers

- Hypothesis Testing:

- Test the null hypothesis H0: β3 = 0 using a likelihood ratio test or Wald test

- Apply appropriate multiple testing correction for genome-wide analyses

- Interpretation:

- A significant interaction (after correction) indicates the effect of one marker depends on the genotype at the other marker

- Calculate odds ratios for different genotype combinations to characterize the nature of the interaction

Considerations: This approach tests for multiplicative interaction on the odds ratio scale. For common outcomes, interactions on the additive scale may be more relevant to public health [3].
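The procedure above can be sketched for one marker pair with a likelihood ratio test of H0: β3 = 0. This is a minimal illustration, assuming additive genotype coding and no covariates; the logistic fit is a plain Newton-Raphson routine written for this example (the names `fit_logistic` and `interaction_lrt` are hypothetical), and the data are simulated.

```python
import math
import numpy as np

def fit_logistic(X, y, n_iter=50, tol=1e-10):
    """Maximum-likelihood logistic regression via Newton-Raphson; returns (beta, loglik)."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(X @ beta)))
        grad = X.T @ (y - p)
        hess = X.T @ (X * (p * (1.0 - p))[:, None])
        step = np.linalg.solve(hess, grad)
        beta += step
        if np.max(np.abs(step)) < tol:
            break
    p = 1.0 / (1.0 + np.exp(-(X @ beta)))
    loglik = float(np.sum(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))
    return beta, loglik

def interaction_lrt(g1, g2, d):
    """Likelihood ratio test of the G1*G2 term in logit(P(D=1)); returns (b3, stat, p)."""
    g1, g2, d = (np.asarray(a, dtype=float) for a in (g1, g2, d))
    ones = np.ones_like(d)
    X_null = np.column_stack([ones, g1, g2])
    X_full = np.column_stack([ones, g1, g2, g1 * g2])
    _, ll_null = fit_logistic(X_null, d)
    beta, ll_full = fit_logistic(X_full, d)
    stat = max(2.0 * (ll_full - ll_null), 0.0)
    p_value = math.erfc(math.sqrt(stat / 2.0))  # chi-square survival function, 1 df
    return beta[3], stat, p_value

# Simulated case-control data with a true interaction log-odds ratio of 0.5 (assumed)
rng = np.random.default_rng(2)
n = 20000
g1 = rng.binomial(2, 0.3, size=n)
g2 = rng.binomial(2, 0.3, size=n)
logit = -1.0 + 0.2 * g1 + 0.2 * g2 + 0.5 * g1 * g2
d = (rng.random(n) < 1.0 / (1.0 + np.exp(-logit))).astype(float)

beta3, stat, p_value = interaction_lrt(g1, g2, d)
print(f"b3 = {beta3:.3f}, LRT = {stat:.1f}, p = {p_value:.2e}")
```

Exponentiating the estimated β3 gives the interaction odds ratio per unit of the product term, which is the multiplicative-scale quantity referred to in the considerations above.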

Experimental Protocols for Genetic Interaction Mapping

High-Throughput Genetic Interaction Screening in Model Organisms

Purpose: To systematically identify genetic interactions across a defined set of genes using double mutant analysis.

Materials:

- Ordered array of mutant strains (e.g., yeast deletion collection)

- Query mutant strain with selectable marker

- Robotic pinning tools for high-density arrays

- Automated imaging system for colony size quantification

- Appropriate growth media and conditions

Procedure:

- Crossing Procedure:

- Mate query strain with array of mutant strains using robotic pinning

- Select for diploids containing both mutations

- Sporulation and Selection:

- Induce sporulation to generate haploid progeny

- Select for double mutants using appropriate markers

- Fitness Measurement:

- Grow double mutants in competitive culture or as individual colonies

- Quantify fitness using colony size measurements or barcode abundance

- Interaction Scoring:

- Calculate expected double mutant fitness as the product of individual mutant fitness values

- Compute interaction score as: ε = Wab(observed) - Wab(expected)

- Where Wab represents fitness of the double mutant

- Statistical Analysis:

Diagram: High-Throughput Genetic Interaction Screening Workflow
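The interaction-scoring step can be written out directly from the definitions above. The fitness values in this sketch are hypothetical and expressed relative to wild type (fitness 1.0).

```python
def interaction_score(w_a, w_b, w_ab_observed):
    """epsilon = W_ab(observed) - W_ab(expected), with W_ab(expected) = W_a * W_b."""
    w_ab_expected = w_a * w_b
    return w_ab_observed - w_ab_expected

# Hypothetical single-mutant fitness values: W_a = 0.8, W_b = 0.5 (expected W_ab = 0.4)
eps_negative = interaction_score(0.8, 0.5, 0.1)  # double mutant sicker than expected
eps_positive = interaction_score(0.8, 0.5, 0.6)  # double mutant fitter than expected

print(round(eps_negative, 3), round(eps_positive, 3))
```

A negative ε corresponds to the synthetic sick/lethal class and a positive ε to suppression-type interactions; whether a given ε is significant still depends on replicate variance, which this sketch does not model.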

Protocol: BEAN-Counter Analysis for Barcode-Based Interaction Screens

Purpose: To analyze genetic interaction data from multiplexed barcode sequencing experiments.

Materials:

- Pooled mutant library with unique DNA barcodes

- Multiplexed sequencing data from treatment and control conditions

- BEAN-counter software pipeline (https://github.com/csbio/BEAN-counter)

- Reference file of expected barcode sequences

Procedure:

- Sequence Processing:

- Parse raw sequencing data to determine barcode and index tag abundances

- Match observed sequences to reference barcodes allowing for user-specified edit distance

- Exclude amplicons that match equally well to multiple reference sequences

- Quality Control:

- Remove mutants and conditions that fail quality thresholds

- Filter based on read count thresholds and replicate consistency

- Interaction Scoring:

- Compute logged abundance profiles for each condition

- Perform LOWESS normalization against the mean profile from negative control conditions

- Calculate deviations from the expected abundance based on the LOWESS curve

- Compute interaction z-score by dividing deviations by estimated standard deviation

- Batch Effect Correction:

- Identify and visualize systematic technical effects

- Apply batch correction algorithms to remove unwanted variance

- Successively remove strongest uninformative signals from the dataset [11]
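The interaction-scoring logic (LOWESS normalization against a control profile, then z-scoring of the deviations) can be sketched as below. This is not BEAN-counter's code or API: the LOWESS here is a simplified local linear fit with tricube weights and no robustness iterations, a single global standard deviation is assumed in place of the pipeline's own variance estimate, and all names and data are hypothetical.

```python
import numpy as np

def lowess_fit(x, y, frac=0.3):
    """Locally weighted linear regression (tricube weights, no robustness iterations)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = len(x)
    k = max(3, int(np.ceil(frac * n)))          # neighbours used per local fit
    fitted = np.empty(n)
    for i in range(n):
        dist = np.abs(x - x[i])
        idx = np.argsort(dist)[:k]
        h = dist[idx].max()
        if h == 0:
            h = 1.0
        w = (1.0 - (dist[idx] / h) ** 3) ** 3   # tricube kernel
        sw = np.sqrt(w)
        A = np.column_stack([np.ones(k), x[idx]])
        coef, *_ = np.linalg.lstsq(A * sw[:, None], y[idx] * sw, rcond=None)
        fitted[i] = coef[0] + coef[1] * x[i]
    return fitted

def interaction_z_scores(control_mean_log, condition_log, frac=0.3):
    """Deviation of a condition's log abundances from the LOWESS trend, scaled to z-scores."""
    control_mean_log = np.asarray(control_mean_log, dtype=float)
    condition_log = np.asarray(condition_log, dtype=float)
    expected = lowess_fit(control_mean_log, condition_log, frac)
    deviation = condition_log - expected
    return deviation / np.std(deviation, ddof=1)  # global SD: a simplification

# Simulated log abundances for 200 mutants; mutant 50 is depleted in the condition
rng = np.random.default_rng(3)
control = np.linspace(5.0, 12.0, 200)
condition = control + 0.5 + rng.normal(scale=0.1, size=200)
condition[50] -= 3.0

z = interaction_z_scores(control, condition)
print(int(np.argmin(z)), round(float(z[50]), 1))
```

The local fit absorbs abundance-dependent trends shared with the controls, so that only mutant-specific depletion or enrichment remains in the z-scores.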

Advanced Approaches: Machine Learning for Interaction Detection

Visible Neural Networks for Epistasis Detection

Purpose: To detect non-linear genetic interactions using interpretable neural network architectures incorporating biological prior knowledge.

Materials:

- Genotype data (SNP arrays or sequencing)

- Gene and pathway annotation databases

- Visible neural network framework (e.g., GenNet)

- High-performance computing resources for model training

Procedure:

- Network Architecture Design:

- Structure network layers according to biological hierarchy (SNP → gene → pathway)

- Implement one-hot or additive encoding for genotype inputs

- Include multiple filters per gene to capture different interaction patterns

- Model Training:

- Train network to predict disease status or quantitative trait

- Use appropriate regularization to prevent overfitting

- Validate model performance on independent test set

- Interaction Extraction:

- Apply interpretation methods (Neural Interaction Detection, PathExplain, Deep Feature Interaction Maps)

- Identify significant interacting pairs using permutation testing

- Validate discovered interactions using traditional statistical methods [10]

Diagram: Visible Neural Network Architecture for Genetic Interaction Detection
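The architecture-design step can be illustrated with a small NumPy sketch of genotype encoding and a sparsely connected SNP → gene layer. It does not use GenNet or reproduce its API; the SNP-to-gene annotation, the weights, and all function names are hypothetical, and only a forward pass is shown.

```python
import numpy as np

def one_hot_genotypes(g):
    """Encode allele counts (0/1/2), shape (n_samples, n_snps), as (n_samples, n_snps, 3)."""
    g = np.asarray(g, dtype=int)
    return np.eye(3)[g]

def snp_to_gene_mask(snp_gene, n_genes):
    """Binary mask with mask[s, j] = 1 if SNP s is annotated to gene j."""
    snp_gene = np.asarray(snp_gene, dtype=int)
    mask = np.zeros((len(snp_gene), n_genes))
    mask[np.arange(len(snp_gene)), snp_gene] = 1.0
    return mask

def gene_layer(g_additive, weights, mask):
    """Forward pass of a SNP -> gene layer; the mask removes non-annotated connections."""
    return np.tanh(np.asarray(g_additive, dtype=float) @ (weights * mask))

# Hypothetical annotation: SNPs 0-1 belong to gene 0, SNPs 2-3 to gene 1
genotypes = np.array([[0, 1, 2, 1]])
mask = snp_to_gene_mask([0, 0, 1, 1], n_genes=2)
weights = np.ones((4, 2))

encoded = one_hot_genotypes(genotypes)
gene_activations = gene_layer(genotypes, weights, mask)
print(encoded.shape, gene_activations.shape)
```

Because each gene node only receives input from its own SNPs, learned weights can be read at the level of genes and pathways, which is what makes the network "visible". Stacking several such masked layers would extend the sketch to the SNP → gene → pathway hierarchy.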

Table 3: Key Research Reagents and Resources for Genetic Interaction Studies

| Resource Type | Specific Examples | Function/Application | Key Features |
|---|---|---|---|
| Mutant Collections | Yeast Deletion Collection [8], E. coli Keio Collection [11] | Systematic analysis of gene function across the genome | Arrayed format, verified mutations, common genetic background |
| Barcoded Libraries | S. cerevisiae TAG collection [11] | Pooled fitness screens using barcode sequencing | Unique molecular barcodes, common primer sites |
| Software Pipelines | BEAN-counter [11], GenNet [10] | Analysis of sequencing-based interaction data | Barcode quantification, interaction scoring, batch correction |
| Experimental Platforms | SGA [9], E-MAP [8], dSLAM [9] | High-throughput genetic interaction mapping | Automated procedures, quantitative fitness measurements |
| Interaction Databases | BioGRID, GIANT [9] | Curated repository of known genetic interactions | Literature curation, standardized formats |

The problem of missing heritability remains a significant challenge in human genetics, but evidence increasingly suggests that genetic interactions play a substantial role in creating phantom heritability. Contrast test approaches ranging from traditional statistical methods to advanced machine learning techniques provide powerful tools for detecting and characterizing these interactions. The protocols outlined here offer researchers multiple entry points for investigating genetic interactions in their systems of interest, with appropriate method selection depending on the organism, scale, and specific research questions. As these approaches continue to evolve, they promise to reveal the complex genetic architectures underlying human diseases and traits, ultimately bridging the gap between known variants and estimated heritability.

The identification of gene-gene and gene-environment interactions is fundamental to unraveling the "missing heritability" in complex traits. However, genome-wide interaction studies (GWIS) face three formidable challenges: low statistical power due to weak effect sizes and stringent significance thresholds, the severe burden of multiple testing corrections for millions of variant pairs, and the immense computational load of exhaustive searches. This Application Note details these challenges and presents established and emerging protocols—including sequential testing, model-based multifactor dimensionality reduction (MB-MDR), and Mendelian Randomization-based screening—to enhance the detection of genetic interactions in large-scale association studies. Designed for researchers and drug development professionals, the note provides actionable methodologies, reagent solutions, and visual workflows to integrate into a broader research program on contrast test approaches.

Despite the success of Genome-Wide Association Studies (GWAS) in identifying single-nucleotide polymorphisms (SNPs) associated with complex diseases, a significant portion of the estimated heritability remains unexplained. Gene-gene (G×G) and gene-environment (G×E) interactions are plausible explanations for this "missing heritability" [12]. However, moving from single-variant analysis to the study of interactions imposes unique statistical and computational hurdles. The core challenges are:

- Low Statistical Power: Interaction effects are often small, and their detection is highly dependent on the chosen genetic model and link function, which are usually unknown a priori [13].

- Multiple Testing Burden: Testing all possible pairs of variants from a genome-wide set of hundreds of thousands of SNPs involves billions of statistical tests. Controlling the family-wise error rate (FWER) using a standard Bonferroni correction leads to an exceedingly stringent significance threshold (e.g., ~10⁻¹³), making it difficult to detect true interactions [12] [14].

- Computational Intensity: Exhaustively testing all variant pairs using generalized linear models (GLMs), which are fitted iteratively, requires immense computational resources and time, often rendering full genome-wide scans infeasible [12] [13].

This note details protocols and solutions to mitigate these challenges, enabling more powerful and efficient genetic interaction analyses.

The table below summarizes the key quantitative aspects of the primary challenges in genetic interaction research.

Table 1: Key Statistical and Computational Challenges in Genome-Wide Interaction Studies

| Challenge | Underlying Cause | Typical Magnitude in GWIS | Primary Consequence |
|---|---|---|---|
| Statistical Power | Small effect sizes of interactions; Model mis-specification (link function, scale) [13]. | Effect sizes smaller than marginal effects; Power depends strongly on correct model specification. | High false-negative rate; Inability to detect true biological interactions. |
| Multiple Testing | Vast number of possible SNP pairs. | ~500,000 SNPs → ~125 billion pairwise tests; Bonferroni threshold: ~4.0 × 10⁻¹³ [12]. | Highly conservative significance thresholds; Genuine interactions fail to reach significance. |
| Computational Burden | Exhaustive search of all SNP pairs; Use of iterative model-fitting algorithms (e.g., in GLMs) [12]. | Analysis of all pairs for 500k SNPs is computationally prohibitive on standard hardware. | Infeasibility of exhaustive genome-wide interaction scans. |
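The multiple-testing figures in the table can be reproduced with a few lines of arithmetic. The family-wise error rate of 0.05 is an assumption; it is the value that yields the quoted threshold.

```python
from math import comb

n_snps = 500_000
n_pairs = comb(n_snps, 2)      # number of unordered SNP pairs
alpha = 0.05                   # assumed family-wise error rate
threshold = alpha / n_pairs    # Bonferroni-corrected per-test threshold

print(f"{n_pairs:,} pairs; Bonferroni threshold = {threshold:.1e}")
# prints "124,999,750,000 pairs; Bonferroni threshold = 4.0e-13"
```

Because the number of pairs grows quadratically with the number of SNPs, any filter that removes SNPs or pairs before the final test relaxes the threshold far faster than it would in a single-variant scan.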

Protocols for Addressing Key Challenges

Protocol: Sequential Testing for Enhanced Power and Efficiency

This protocol, introduced by Frånberg et al., augments the standard interaction test with a series of simpler, computationally cheaper hypotheses to filter out non-interacting variant pairs before the final, most complex test [12].

Application: Pre-filtering of SNP pairs in a GWIS to reduce the multiple testing burden and improve power.

Principle: A sequential testing procedure that tests a set of increasingly complex hypotheses (e.g., marginal effects) against a saturated alternative hypothesis representing full interaction. Only pairs passing the initial filters are subjected to the final interaction test [12].

Table 2: Reagent Solutions for Sequential Testing Analysis

| Research Reagent / Tool | Function in the Protocol |
|---|---|
| Genotype & Phenotype Data (e.g., PLINK format) | Primary input data for association testing. |
| High-Performance Computing (HPC) Cluster | Essential for the computational demands of large-scale sequential testing. |
| Statistical Software (R, Python, C++) | Implementation of the sequential testing algorithm and statistical models. |
| A Priori Estimated Number of Associated Variants | Used for multiple testing correction in one variant of the method [12]. |

Step-by-Step Methodology:

- Hypothesis Formulation: For each SNP pair (A, B), define a sequence of null hypotheses (H₁, H₂, ...). These typically represent models with only marginal effects of A, only marginal effects of B, or other simpler models excluding the full interaction [12].

- Sequential Testing: Test each null hypothesis in the sequence against the saturated alternative model (which includes the interaction term) using a likelihood-ratio test or a similar efficient test statistic.

- Filtering: If any of the simpler null hypotheses in the sequence cannot be rejected for a SNP pair, the pair is filtered out from further consideration, because a model without the full interaction is then adequate. This step drastically reduces the number of pairs that proceed to the final, most computationally expensive test.

- Final Interaction Test: Perform the full interaction test only on the subset of SNP pairs that passed all previous filters.

- Multiple Testing Correction: Apply a multiple testing correction (e.g., Bonferroni or False Discovery Rate) based on the effective number of tests performed after filtering. The method can use a pre-estimated number of associated variants or an adaptive procedure for this correction [12].

- Interpretation and Validation: Statistically significant interactions should be validated in an independent cohort. The use of a closed testing procedure ensures control of the family-wise error rate [12].
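
The filtering logic in the steps above can be sketched in a few lines. This is a minimal illustration, not the procedure of [12]: it assumes the p-values for the simpler null hypotheses and for the final interaction test have already been computed upstream, and it replaces the closed testing procedure with a plain Bonferroni correction over the surviving pairs. The function name `sequential_filter`, the SNP identifiers, the p-values, and both thresholds are hypothetical.

```python
def sequential_filter(pairs, alpha_filter=0.05, alpha_final=0.05):
    """Filter SNP pairs, then correct the final test over the survivors.

    pairs: dict mapping (snp_a, snp_b) -> (filter_pvalues, interaction_pvalue),
           where filter_pvalues holds one p-value per simpler null hypothesis.
    """
    # Keep a pair only if every simpler null hypothesis is rejected.
    survivors = {
        pair: p_int
        for pair, (filter_ps, p_int) in pairs.items()
        if all(p < alpha_filter for p in filter_ps)
    }
    # ASSUMPTION: Bonferroni over the pairs that reach the final test.
    threshold = alpha_final / max(len(survivors), 1)
    significant = sorted(pair for pair, p in survivors.items() if p < threshold)
    return survivors, threshold, significant


# Hypothetical p-values for three SNP pairs.
pairs = {
    ("rs1", "rs2"): ([1e-6, 3e-4], 2e-5),
    ("rs1", "rs3"): ([0.40, 1e-3], 1e-8),  # filtered: one simpler null is not rejected
    ("rs2", "rs3"): ([1e-3, 2e-3], 0.20),
}
survivors, threshold, significant = sequential_filter(pairs)
print(len(survivors), threshold, significant)
```

Note that the second pair is dropped despite its small interaction p-value, which shows why the choice of filtering hypotheses matters as much as the final test.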

Diagram 1: Sequential Testing Workflow. This flow diagram illustrates the sequential filtering process where SNP pairs are evaluated against a series of simpler hypotheses before the final, computationally intensive interaction test.

Protocol: Model-Based Multifactor Dimensionality Reduction (MB-MDR)

MB-MDR is a semi-parametric method that combines non-parametric dimensionality reduction with parametric association testing, effectively addressing issues of scale and adjustment for covariates [15].

Application: Detecting G×G and G×E interactions for binary, continuous, and survival outcomes.

Principle: To reduce the high-dimensional genotype combination space into a lower-dimensional factor (e.g., High, Low, No evidence of risk) which is then tested for association with the phenotype [15].

Step-by-Step Methodology:

- Cell Formation: For a given combination of k factors (e.g., two SNPs), represent all possible multi-locus genotypes as cells in a contingency table.

- Cell-Wise Association Testing: Within each cell, perform an association test (e.g., a χ²-test for case-control data or a t-test for a continuous trait) comparing the phenotype distribution in that cell to all other cells combined.

- Risk Labeling (Dimensionality Reduction):

- If the test is not significant, label the cell as "O" (no evidence).

- If the test is significant and the cell is associated with higher risk, label it as "H" (high-risk).

- If the test is significant and the cell is associated with lower risk, label it as "L" (low-risk).

- Association Test on Reduced Construct: Construct a new variable with levels H, L, and O. Perform an association test (e.g., comparing H vs. {L, O} and L vs. {H, O}) and use the maximum of these test statistics.

- Significance Assessment: Repeat steps 1-4 for all factor combinations. Assess the significance of the maximum test statistic for each combination using a permutation-based maxT procedure to correct for multiple testing [15].
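
The cell-labeling and reduced-construct steps can be illustrated for a single SNP pair with case-control data. The sketch below is a simplified stand-in for the MB-MDR software, not a reimplementation of it. It assumes a Pearson χ² test without continuity correction and a labeling threshold of 0.1, and it omits the permutation-based maxT step. All function names and counts are hypothetical.

```python
from math import erfc, sqrt

def chi2_2x2(a, b, c, d):
    """Pearson chi-square (1 df) for the table [[a, b], [c, d]]; returns (stat, p)."""
    n = a + b + c + d
    denom = (a + b) * (c + d) * (a + c) * (b + d)
    if denom == 0:
        return 0.0, 1.0
    stat = n * (a * d - b * c) ** 2 / denom
    return stat, erfc(sqrt(stat / 2))  # survival function of chi-square, 1 df

def label_cells(cells, threshold=0.1):
    """cells: genotype -> (cases, controls). Returns genotype -> 'H', 'L' or 'O'."""
    tot_cases = sum(c for c, _ in cells.values())
    tot_ctrls = sum(k for _, k in cells.values())
    labels = {}
    for g, (cases, ctrls) in cells.items():
        rest_cases, rest_ctrls = tot_cases - cases, tot_ctrls - ctrls
        _, p = chi2_2x2(cases, ctrls, rest_cases, rest_ctrls)
        if p >= threshold:
            labels[g] = "O"                       # no evidence
        elif cases * rest_ctrls > ctrls * rest_cases:
            labels[g] = "H"                       # odds ratio > 1: high risk
        else:
            labels[g] = "L"                       # odds ratio < 1: low risk
    return labels

def reduced_statistic(cells, labels):
    """Maximum of the H-vs-rest and L-vs-rest chi-square statistics."""
    tot_cases = sum(c for c, _ in cells.values())
    tot_ctrls = sum(k for _, k in cells.values())
    stats = []
    for level in ("H", "L"):
        cases = sum(cells[g][0] for g in cells if labels[g] == level)
        ctrls = sum(cells[g][1] for g in cells if labels[g] == level)
        stat, _ = chi2_2x2(cases, ctrls, tot_cases - cases, tot_ctrls - ctrls)
        stats.append(stat)
    return max(stats)

# Hypothetical (cases, controls) counts for the 3 x 3 two-SNP genotype table.
cells = {(i, j): (50, 50) for i in range(3) for j in range(3)}
cells[(1, 1)] = (80, 20)
cells[(2, 2)] = (20, 80)

labels = label_cells(cells)
stat = reduced_statistic(cells, labels)
print(labels[(1, 1)], labels[(2, 2)], labels[(0, 0)], stat)
```

In a real analysis the statistic returned here would be compared against its permutation distribution across all factor combinations, rather than against a nominal χ² reference.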

Table 3: Reagent Solutions for MB-MDR Analysis

| Research Reagent / Tool | Function in the Protocol |

|---|---|

| Quality-Controlled Genotype Data | Input data after standard GWAS QC (MAF, HWE, call rate) [15]. |

| MB-MDR Software | The core analytical tool (e.g., C++ implementation v4.4.0). |

| Environmental Exposure Data | For G×E analysis (e.g., categorized age, sex, smoking status). |

| High-Performance Computing Cluster | For permutation-based significance testing. |

Protocol: A Mendelian Randomization Framework for Screening G×E

This novel approach, conceptualized by Chen et al., leverages the Mendelian Randomization (MR) framework to screen for gene-environment interactions using summary statistics from GWAS and GWIS, mitigating power issues caused by collinearity [5].

Application: Powerful screening for G×E interactions using existing GWAS and GWIS summary statistics.

Principle: The difference between the marginal genetic effect (from a standard GWAS) and the main genetic effect (from a model adjusting for the environment, GWIS) captures the combined effect of G×E and mediation. Under independence of G and E, this difference reflects G×E. The MR framework robustly tests for deviations from the expected relationship between these two effect estimates [5].

Step-by-Step Methodology:

- Data Acquisition: Obtain summary statistics (effect sizes and standard errors) for a large number of SNPs from a large GWAS (marginal effect, α) and a GWIS that adjusted for the environmental exposure of interest (main effect, β₁).

- Estimate Causal Effect (θ): Using the MR framework (e.g., MR-Egger or inverse-variance weighted regression), estimate the causal effect θ of the main effect (β₁) on the marginal effect (α) for all SNPs. Under the null hypothesis of no G×E and no mediation, the intercept of this regression is zero.

- Identify Outliers: Statistically significant deviations from the regression line (e.g., a non-zero intercept or outlier SNPs identified by MR-PRESSO) indicate the presence of G×E or mediation [5].

- Replication: SNPs identified as outliers in the screening step should be tested for direct interaction in an independent dataset using the standard regression model:

Y = β₀ + β₁G + β₂E + β₃G×E + ε [5].
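
A short derivation may help show why the gap between the marginal and main effects carries interaction signal. It is a sketch under simplifying assumptions: G is independent of E, the error term has mean zero given G and E, and the symbol μ_E for the mean of the exposure is introduced here for convenience and does not come from [5].

```latex
\[
\begin{aligned}
\mathbb{E}[Y \mid G]
  &= \beta_0 + \beta_1 G + \beta_2\,\mu_E + \beta_3\,G\,\mu_E \\
  &= (\beta_0 + \beta_2\,\mu_E) + (\beta_1 + \beta_3\,\mu_E)\,G
\end{aligned}
\qquad\Longrightarrow\qquad
\alpha - \beta_1 = \beta_3\,\mu_E
\]
```

Under these assumptions the difference is proportional to β₃ and vanishes when there is no interaction. When G also influences E, additional mediation terms enter the marginal effect, which is why the screen flags G×E and mediation jointly.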

Diagram 2: Relationship between Marginal and Interaction Effects. This causal diagram illustrates how the marginal genetic effect (α) estimated in GWAS is a composite of the main effect (β₁), potential mediation (ρ), and the interaction effect (β₃).

The Scientist's Toolkit: Key Research Reagent Solutions

The table below consolidates essential computational tools and methods for conducting genetic interaction research.

Table 4: Key Research Reagents and Computational Tools for Genetic Interaction Studies

| Tool / Method | Primary Function | Key Advantage / Application |

|---|---|---|

| Sequential Testing [12] | Statistical pre-filtering of SNP pairs. | Reduces multiple testing burden and computational load before final interaction test. |

| MB-MDR Software [15] | Non-parametric detection of higher-order interactions. | Adjusts for covariates; handles various trait types; provides a robust, model-free test. |

| Empirical Fuzzy MDR (EF-MDR) [16] | Detects G×G interactions with fuzzy set theory. | Mitigates information loss from binary classification; uses empirical estimates without tuning parameters. |

| Mendelian Randomization (MR) [5] | Screens for G×E using summary statistics. | Leverages existing large GWAS; powerful screening tool to prioritize variants for direct interaction testing. |

| Hierarchical Modeling (BhGLM R package) [17] | Simultaneously fits many genetic variables. | Shrinks unimportant effects toward zero; reduces effective number of tests via Hierarchical Bonferroni Correction. |

| ReliefF / TuRF Filtering [15] [16] | Pre-analysis SNP filtering. | Selects a subset of promising SNPs for interaction analysis, reducing combinatorial explosion. |

The challenges of statistical power, multiple testing, and computational burden in genetic interaction research are significant but not insurmountable. The protocols detailed herein—sequential testing, MB-MDR, and the novel MR-based screening approach—provide a robust toolkit for researchers. By strategically combining efficient filtering, powerful dimensionality reduction, and innovative uses of summary statistics, these methods enhance our ability to detect elusive genetic interactions. Integrating these approaches into a coherent analysis strategy, framed within a contrast-testing paradigm, will be crucial for uncovering the complex genetic architecture of diseases and advancing personalized medicine.

Application Notes and Protocols for Contrast Test Approaches in Genetic Interactions Research

Within the broader thesis on advancing contrast test methodologies for genetic interaction research, a fundamental and often underestimated challenge is scale dependency. The statistical detection and biological interpretation of genetic interactions—whether synthetic lethality in cancer [18], epistasis in quantitative traits [19] [4], or gene-environment interplay [5]—are critically dependent on the chosen link function and model parameterization within a regression framework [13]. This application note details the experimental and analytical protocols necessary to navigate this dependency, ensuring robust and reproducible identification of genetic interactions for researchers and drug development professionals.

The core issue is that an interaction detected under one model specification (e.g., a multiplicative model with a log link) may vanish under another (e.g., an additive model with an identity link), and vice-versa [13]. This is not merely a statistical artifact but reflects the underlying biological scale on which genetic variants operate. Consequently, reliance on a single, default model can lead to both false positives and a significant loss of statistical power, directly impacting the prioritization of therapeutic targets [18] [12].

The following tables consolidate key quantitative findings from recent studies, highlighting how results vary with analytical approach.

Table 1: Impact of Model Specification on Genetic Interaction Discovery in Cancer Screens

| Study / Dataset | Primary Model Used | # Initial Discoveries | # Robust, Cross-Validated Interactions | Key Factor for Robustness | Citation |

|---|---|---|---|---|---|

| Pan-Cancer LoF Screens (DRIVE, DEPMAP, AVANA, SCORE) | Multiple linear regression (additive) with tissue/MSI covariates | 1530 driver-gene dependencies | 229 (14.97%) | Validation in independent, non-overlapping cell line panels; enrichment in physically interacting protein pairs. | [18] |

| Analysis of same screens with alternative parameterization | Not explicitly tested; authors note oncogene addictions were most robust signal. | - | 220 (excluding self-interactions) | Protein-protein interaction network prior knowledge improved prioritization. | [18] |

Table 2: Performance of Different Statistical Tests for Detecting Interactions

| Test / Method | Key Assumption / Parameterization | Computational Efficiency | Statistical Power Note | Scale Dependency Mitigation | Citation |

|---|---|---|---|---|---|

| Direct Test in GLM (e.g., Logistic Regression) | Tests β₃ in model: g(μ) = β₀ + β₁G + β₂E + β₃GxE | Standard | Low power due to collinearity between G and GxE terms. | Highly sensitive to choice of link function g(). | [5] [13] |

| Marginal vs. Main Effect Contrast (T_diff) | Tests H₀: α (marginal) = β₁ (main). Equivalent to direct test in same data. | High (uses summary stats) | Powerful when GWAS and GWIS estimates are comparable. | Biased by population stratification & study heterogeneity. | [5] |

| Mendelian Randomization-Based Screen | Tests for variants departing from regression line α̂ = θβ̂₁ + δ. | High (uses summary stats) | Identifies combined effect of GxE and mediation. | More robust to cross-study heterogeneity; requires valid IVs. | [5] |

| Joint Wald Test on Full Interaction Parameters | Tests all interaction parameters simultaneously in a GLM. | High (closed-form solution) | Superior power and false positive rate control vs. sequential testing. | Framework allows explicit comparison across link families. | [13] |

Detailed Experimental Protocols

Protocol 1: Identifying Robust Genetic Interactions from Loss-of-Function Screens

Objective: To distinguish context-specific false positives from robust, therapeutically relevant genetic interactions (e.g., synthetic lethality) across heterogeneous cell line panels [18].

- Data Acquisition & Harmonization:

- Obtain gene dependency scores (e.g., CERES, DEMETER2) from at least two independent large-scale screens (e.g., DepMap, AVANA, DRIVE).

- Harmonize cell line identifiers and genomic annotations (e.g., from CCLE). Integrate copy number, mutation, and tissue type data for each line.

- Definition of Discovery and Validation Sets:

- Designate one screen as the Discovery Set. Use a second, independent screen as the Validation Set.

- Critical Step: Remove all cell lines present in the Discovery Set from the Validation Set to ensure true independent validation.

- Statistical Modeling for Discovery:

- For a given driver gene D and target gene T, fit a multiple linear regression model in the Discovery Set:

Dependency_T ~ β₀ + β₁*(Tissue Type) + β₂*(MSI Status) + β₃*(D_Alteration Status)

- A significant β₃ indicates a candidate genetic dependency.

- Validation and Robustness Assessment:

- Apply the estimated coefficient β₃ from the Discovery model to the cell lines in the independent Validation Set.

- Test if the association holds (p < 0.05). Only interactions reproducible in this strict split are considered robust.

- Biological Prioritization Filter:

- Filter the list of robust interactions by intersecting with protein-protein interaction networks. Robust interactions are significantly enriched among physically interacting protein pairs [18].

Protocol 2: A Scale-Agnostic Testing Protocol for Genome-Wide Interaction Scans

Objective: To test for pairwise genetic interactions in case-control or quantitative trait studies while minimizing bias from arbitrary link function choice [13].

- Model Specification and Parameterization:

- Use a Generalized Linear Model (GLM) framework. For a variant pair (G1, G2), define a full parameterization that includes all main and interaction terms (e.g., a saturated model for two biallelic SNPs).

- Do not assume the absence of main effects.

- Implementing the Joint Wald Test:

- Estimate model parameters via maximum likelihood.

- Construct the variance-covariance matrix of the interaction term parameters.

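- Compute the Wald test statistic for the joint hypothesis that all interaction parameters are zero: W = β̂_int' Cov(β̂_int)⁻¹ β̂_int. Under the null hypothesis, this statistic asymptotically follows a χ² distribution with degrees of freedom equal to the number of interaction parameters tested.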

- Scale Sensitivity Analysis (The Contrast Test Approach):

- Repeat the Joint Wald Test across a family of link functions (e.g., logit, probit, log-complement, identity).

- Protocol Variant for Case-Control Data: Apply the LD-contrast test framework [12], which compares linkage disequilibrium patterns between cases and controls and is less sensitive to specific linear model parameterizations.

- Interpretation and Reporting:

- An interaction signal that persists across multiple, biologically plausible link functions is considered more reliable.

- Report results and p-values for all tested link functions, not just a single one.
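
The joint Wald statistic from this protocol can be computed directly once the interaction estimates and their covariance matrix are available. The sketch below assumes those quantities come from a GLM fitted elsewhere; the numbers are placeholders, not results. It uses four interaction parameters, as in a saturated model for two three-level genotypes, and a closed-form χ² tail that is valid only for even degrees of freedom. The helper names are hypothetical.

```python
from math import exp, factorial

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small systems)."""
    n = len(b)
    M = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def chi2_sf_even(x, df):
    """Upper tail probability of a chi-square variable; closed form for even df only."""
    if df % 2:
        raise ValueError("this closed form requires an even number of degrees of freedom")
    half = x / 2
    return exp(-half) * sum(half**k / factorial(k) for k in range(df // 2))

def joint_wald(beta_int, cov_int):
    """W = beta' Cov^-1 beta, with df equal to the number of interaction parameters."""
    w = sum(b * v for b, v in zip(beta_int, solve(cov_int, beta_int)))
    return w, chi2_sf_even(w, len(beta_int))

# Placeholder estimates for the four interaction parameters and their covariance.
beta_int = [0.30, -0.10, 0.05, 0.20]
cov_int = [[0.01 if i == j else 0.0 for j in range(4)] for i in range(4)]

w, p = joint_wald(beta_int, cov_int)
print(f"W = {w:.2f}, p = {p:.4f}")
```

For the scale sensitivity analysis, the same call would be repeated with the estimates obtained under each link function, and the resulting p-values reported side by side.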

Protocol 3: Detecting GxE via Mendelian Randomization Contrast

Objective: To leverage summary statistics from large GWAS and genome-wide interaction studies (GWIS) to screen for gene-environment interactions with high power [5].

- Data Input: Obtain summary statistics (beta estimates and standard errors) for a trait from: a) a large standard GWAS (marginal effect, α̂), and b) a GWIS with an environmental exposure E (main effect, β̂₁).

- Estimation of the Causal Coefficient (θ):

- Use Mendelian Randomization (MR) methods (e.g., Inverse-Variance Weighted) with multiple genetic instruments to estimate the slope θ in the regression: α̂ = θ β̂₁ + δ.

- Genetic variants for MR should be selected based on association with the trait in the GWIS (main effect).

- Screening for Departures (Contrast):

- For each variant j, calculate the residual: δ̂_j = α̂_j - θ β̂₁_j.

- The variance of δ̂_j is: Var(α̂_j) + θ² Var(β̂₁_j) - 2θ Cov(α̂_j, β̂₁_j). If the covariance is not available from the summary statistics, it must be approximated.

- Statistical Testing:

- Compute the test statistic T_diff,j = δ̂_j² / Var(δ̂_j) for each variant.

- Variants with significant T_diff are candidates for having either a GxE effect or a mediation effect on the trait through the environment.
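
The residual, its variance, and the test statistic from the steps above translate directly into code. The sketch below assumes that θ has already been estimated, that standard errors are supplied in place of variances, and that the statistic is referred to a χ² distribution with one degree of freedom, which is the usual reference for a squared standardized difference but is an assumption here. The function name and the input values are hypothetical.

```python
from math import erfc, sqrt

def t_diff(alpha_hat, beta1_hat, se_alpha, se_beta1, theta, cov=0.0):
    """Contrast of marginal and main effects for one variant.

    Returns (residual, test statistic, p-value).
    cov is Cov(alpha_hat, beta1_hat); it defaults to 0 as a placeholder approximation.
    """
    delta = alpha_hat - theta * beta1_hat
    var_delta = se_alpha**2 + theta**2 * se_beta1**2 - 2 * theta * cov
    stat = delta**2 / var_delta
    p_value = erfc(sqrt(stat / 2))  # ASSUMPTION: chi-square reference with 1 df
    return delta, stat, p_value

# Hypothetical summary statistics for a single variant.
delta, stat, p_value = t_diff(
    alpha_hat=0.10, beta1_hat=0.05, se_alpha=0.02, se_beta1=0.02, theta=1.0
)
print(f"delta = {delta:.3f}, T_diff = {stat:.3f}, p = {p_value:.4f}")
```

Setting the covariance to zero is conservative when the two estimates are positively correlated, for example when the GWAS and GWIS samples overlap, because it overstates the variance of the residual.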

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Resources for Genetic Interaction Research

| Item | Function / Description | Example Source / Reference |

|---|---|---|

| CRISPR-Cas9 Knockout Library | Enables genome-wide loss-of-function screens to identify gene dependencies. | Brunello or Avana libraries used in DepMap/Avana screens [18]. |

| shRNA Library | Alternative to CRISPR for RNAi-mediated knockdown screens. | DRIVE project library [18]. |

| Annotated Cell Line Panel | A characterized set of cancer cell lines with genomic, transcriptomic, and dependency data. | Cancer Cell Line Encyclopedia (CCLE); DepMap consortium [18] [20]. |

| Protein-Protein Interaction Network | Prior knowledge network for validating and prioritizing genetic interactions. | BioGRID, STRING; Used to filter robust synthetic lethal pairs [18]. |

| Genetic Interaction Database | Repository of known synthetic lethal or epistatic interactions for validation. | SynLethDB [20], BioGRID Genetic Interactions. |

| Generalized Linear Model Software | Software capable of fitting GLMs with different link functions for scale testing. | R (glm), Python (statsmodels), PLINK2 [13]. |

| Mendelian Randomization Software | Tools for performing MR analysis on summary statistics. | TwoSampleMR (R), MR-Base platform. |

| GO Term Annotations | Gene Ontology terms used as features for machine learning prediction of interactions. | Gene Ontology Consortium; Used in graph neural network models [20]. |

Visualizing Workflows and Conceptual Frameworks

Diagram 1: Scale Dependency in Genetic Interaction Testing

Diagram 2: A Unified Thesis Framework for Robust Interaction Discovery

The rigorous detection of genetic interactions mandates a conscious engagement with the problem of scale dependency. As detailed in these protocols, moving beyond a single-model paradigm to embrace contrast approaches—whether across experimental contexts [18], statistical link functions [13], or summary statistics from different study designs [5]—is paramount. Integrating these robust signals with prior biological knowledge via network-based models [18] [20] provides a powerful, multi-faceted strategy. This disciplined, scale-aware methodology is essential for transforming high-throughput genetic data into reliable therapeutic targets and a deeper understanding of complex biological systems.

The identification of genetic interactions, such as epistasis, is a cornerstone of understanding the complex genetic architecture underlying human diseases and traits. However, establishing the biological plausibility of these statistical findings is a critical step in translating them into meaningful mechanistic insights. Research utilizing model organisms provides an indispensable platform for this functional validation, allowing researchers to test hypotheses generated from human genetic studies in a controlled experimental setting. The long-standing reliance on a handful of established "supermodel organisms"—such as mice, fruit flies, and nematodes—has yielded fundamental discoveries, but this narrow focus also presents limitations for translation to human biology [21] [22]. A paradigm shift is underway, driven by comparative genomics, which leverages increasingly affordable DNA sequencing to identify novel, emerging model organisms with specific biological advantages for studying particular human pathways and diseases [21]. This application note details how these diverse organisms, coupled with sophisticated genetic screening protocols, provide the critical evidence needed to move from statistical genetic interaction to validated biological pathway.

Emerging Model Organisms for Targeted Human Biology

The National Institutes of Health (NIH) Comparative Genomics Resource (CGR) project is helping to harness the power of comparative genomics by creating an ecosystem that facilitates reliable analyses for all eukaryotic organisms [21]. This effort enables the scientific community to move beyond traditional models by identifying organisms with unique biological traits that make them particularly suited for studying specific human physiological processes or disease states. The following table summarizes key emerging model organisms and their research applications.

Table 1: Emerging Model Organisms and Their Applications in Human Health Research

| Organism | Key Research Application | Specific Human Pathway/Process | Experimental Advantage |

|---|---|---|---|

| Pig (Sus scrofa domesticus) | Xenotransplantation [21] | Immune rejection, Organ function | Anatomical and physiological similarity to humans; CRISPR used to modify rejection genes [21] |

| Syrian Golden Hamster (Mesocricetus auratus) | Respiratory virus pathogenesis (e.g., SARS-CoV-2) [21] | Viral entry, Immune response, Cytokine signaling | ACE2 protein similarity to humans; ideal for studying transmission and lung pathology [21] |

| Thirteen-Lined Ground Squirrel (Ictidomys tridecemlineatus) | Hibernation & Metabolomics [21] | Metabolic switching (glucose to lipid), Ischemia-reperfusion injury, Bone maintenance | Ability to survive extreme hypothermia and torpor; resists neurological damage and maintains bone during inactivity [21] |

| African Turquoise Killifish (Nothobranchius furzeri) | Aging & Longevity [21] | Cellular senescence, Proteostasis, Insulin signaling | One of the shortest lifespans among vertebrates (4-6 months) [21] |

| Bats (Chiroptera order) | Immunovirology & Cancer Biology [21] | Innate immune response, Inflammation, Tumor suppression | Tolerant of viruses pathogenic to humans; exhibits reduced inflammation and low cancer incidence [21] |

| Dog (Canis familiaris) | Oncology [21] | Sarcoma, Osteosarcoma, Bladder cancer | Spontaneously developing cancers; different breed predispositions offer genetic insights [21] |

The selection of an appropriate model organism is increasingly being guided by data-driven approaches that analyze evolutionary relationships and protein conservation across the eukaryotic tree of life. This method helps pair specific biological questions with the organisms best suited to answer them, thereby expanding the potential for new biomedical discoveries beyond the limitations of traditional supermodels [22].

Experimental Protocols for Genetic Interaction Mapping

A powerful method for functionally characterizing genetic networks in model organisms is the Epistatic MiniArray Profile (E-MAP). This systematic approach measures genetic interactions quantitatively, revealing a spectrum of effects beyond simple synthetic lethality [23]. The following protocol describes its implementation in yeast, a foundational model system.

Protocol: Epistatic MiniArray Profile (E-MAP) in Yeast

I. Purpose and Principle

The E-MAP protocol is designed to systematically measure quantitative genetic interactions between pairs of mutations with respect to organismal growth rate. A genetic interaction (ε) is defined as the difference between the observed double-mutant phenotype (P_AB,observed) and the expected phenotype if no interaction exists (P_AB,expected) [23]. This approach allows for the unbiased characterization of gene function and the construction of detailed genetic interaction maps.

II. Materials and Reagents

Table 2: Key Research Reagents for E-MAP Analysis

| Reagent/Equipment | Function/Description |

|---|---|

| Yeast Deletion Mutant Collection | A library of defined gene deletion strains, typically in S. cerevisiae or S. pombe. |

| Automatic Pin Tool (Robotic Arrayer) | For high-density replica plating of yeast strains to create double mutants. |

| Solid Growth Media Plates (Agar) | YPD or synthetic media supporting yeast growth. |

| Flatbed Scanner | For digitizing colony growth on plates. |

| Image Analysis Software | For quantifying colony area as a proxy for growth fitness. |

III. Step-by-Step Procedure

- Selection of Mutations: Rationally select a set of 400-800 genes likely to be functionally related (e.g., based on protein localization, membership in a complex, or shared molecular function) to increase the frequency of detectable interactions [23].

- Double Mutant Construction: Use robotic pinning to mate haploid yeast mutants from the query and array sets. Sporulate the resulting diploids and select for haploid double mutants.

- Phenotypic Growth Measurement: Plate the double mutants onto solid media in a high-density array. Incubate until colonies form. Scan the plates and use image analysis software to measure the area of each colony as a quantitative measure of fitness.

- Data Processing and Normalization:

- Calculate the growth phenotype (P) for each single and double mutant.

- Compute the expected double-mutant phenotype (P_AB,expected) empirically from the bulk of the data, as it represents the typical combined effect of two single mutations [23].

- Calculate the genetic interaction score (ε_AB) for each pair using the formula: ε_AB = P_AB,observed - P_AB,expected [23].

- A negative score indicates a synthetic sick/lethal interaction, while a positive score indicates an alleviating or suppressive interaction.
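
The scoring step can be illustrated with a toy calculation. The protocol estimates the expected double-mutant phenotype empirically from the bulk of the data; the sketch below swaps in a simpler multiplicative expectation (the product of the two single-mutant fitness values) purely for illustration. The gene names, fitness values, function names, and the neutrality tolerance `tol` are all hypothetical.

```python
def interaction_scores(single, double):
    """single: gene -> fitness; double: (gene_a, gene_b) -> observed fitness."""
    scores = {}
    for (a, b), observed in double.items():
        # ASSUMPTION: multiplicative expectation, not the empirical estimate of [23].
        expected = single[a] * single[b]
        scores[(a, b)] = observed - expected
    return scores

def classify(score, tol=0.05):
    """Map a score to the sign convention used in the protocol."""
    if score < -tol:
        return "negative (synthetic sick/lethal)"
    if score > tol:
        return "positive (alleviating/suppressive)"
    return "neutral"

# Hypothetical normalized colony sizes (wild type = 1.0).
single = {"geneA": 0.8, "geneB": 0.5, "geneC": 0.9}
double = {
    ("geneA", "geneB"): 0.10,
    ("geneA", "geneC"): 0.72,
    ("geneB", "geneC"): 0.60,
}

scores = interaction_scores(single, double)
for pair, score in scores.items():
    print(pair, round(score, 3), classify(score))
```

The tolerance band around zero reflects measurement noise in colony size; in practice it would be set from replicate variability and not fixed in advance.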

IV. Applications and Data Interpretation

The resulting quantitative genetic interaction scores are analyzed as a matrix. Genes with similar patterns of genetic interactions (interaction profiles) are likely to be functionally related [23]. This data can be used to identify novel members of a pathway, characterize the function of unannotated genes, and understand the functional organization of complex biological processes.

Diagram 1: E-MAP workflow for yeast genetic interaction mapping.

From Statistical Prediction to Biological Validation: A Contrast Test Framework

The journey from a predicted genetic interaction in human GWAS to a validated biological mechanism requires a multi-stage, contrastive testing framework. This process integrates computational predictions from human data with rigorous experimental testing in model systems.

Computational Detection in Human Genetic Data

Traditional genome-wide association studies (GWAS) that analyze variants independently often miss non-linear epistatic interactions crucial for disease susceptibility [24]. Advanced machine learning methods, particularly visible neural networks (VNNs), are now being deployed to address this challenge. VNNs embed prior biological knowledge (e.g., gene-pathway annotations) directly into their architecture, creating sparse, interpretable models that can predict genetic risk by leveraging non-linear combinations of inputs [10]. Post-hoc interpretation methods, such as Neural Interaction Detection (NID) and PathExplain, can then be applied to these trained networks to extract candidate epistatic pairs of SNPs or genes [10]. Frameworks like GenoGraph further exemplify this approach, using graph-based contrastive learning to model complex variant relationships in high-dimensional data and identify key interacting risk variants, such as those associated with breast cancer [24].

Diagram 2: Contrast test framework from prediction to validation.

Functional Validation in Model Organisms

Candidate interactions identified computationally must be tested for biological plausibility in an in vivo setting.

- Candidate Selection and Pathway Analysis: Prioritize candidate genetic interactions based on statistical strength and biological relevance. Place the interacting genes within known pathways (e.g., KEGG, Reactome) to formulate testable hypotheses about the underlying mechanism.

- Model Organism Selection: Choose the most appropriate model organism based on the biological question, leveraging the advantages outlined in Table 1. For example, use killifish to test interactions in aging pathways or hamsters for interactions affecting viral immune responses [21].

- Experimental Perturbation and Phenotyping: Introduce the homologous genetic perturbations (e.g., using CRISPR/Cas9) into the model organism to create single and double mutants. Subsequently, perform quantitative phenotyping relevant to the human disease. This could range from high-throughput growth-based assays (as in E-MAP) to specialized physiological measurements of hibernation, metabolism, or immune function [21] [23].

- Contrastive Analysis and Interpretation: Contrast the observed double-mutant phenotype against the expected additive effect of the two single mutations. A statistically significant deviation confirms a genetic interaction in the model system, providing strong experimental evidence for the biological plausibility of the initial computational prediction.

Establishing biological plausibility for statistically inferred genetic interactions is a critical, non-negotiable step in genetic research. The integrated framework presented here—which leverages data-driven model organism selection, high-precision experimental protocols like E-MAP, and a contrastive testing philosophy—provides a robust pathway for validation. By moving from human genomics to functional testing in evolutionarily informed and physiologically relevant models, researchers can transform correlative genetic findings into causative mechanistic understanding, ultimately accelerating the development of novel therapeutic strategies.

A Methodological Toolkit: From Statistical Tests to Deep Learning Frameworks

Generalized Linear Models (GLMs) and the Joint Testing of Interaction Parameters

The identification of interaction effects—where the effect of one variable depends on the level of another—is fundamental to understanding complex biological systems. In genetic research, specifically, gene-gene (G×G) and gene-environment (G×E) interactions contribute significantly to the etiology of complex traits and diseases, potentially explaining elements of "missing heritability" not accounted for by marginal genetic effects [25] [26]. Generalized Linear Models (GLMs) provide a unified statistical framework for detecting these interactions across diverse data types, including continuous, binary, and count phenotypes [13].

A significant methodological challenge in large-scale genetic studies is the computational burden associated with testing all possible interaction pairs. For a genome-wide association study (GWAS) with 500,000 SNPs, a comprehensive two-locus scan requires approximately 125 billion tests [25]. Joint testing of interaction parameters, which involves simultaneously testing the complete set of interaction parameters in a model, has emerged as a powerful strategy. This approach offers superior statistical power and better control of false positive rates compared to marginal testing strategies, while efficient computational algorithms now make it feasible for genome-wide analyses [13].

Theoretical Foundations

GLM Framework for Interaction Testing

Within the GLM framework, the relationship between predictor variables and the expected value of a response variable is defined through a link function. For an individual i, with phenotype y_i and predictor variables x_i, this relationship is expressed as:

\[ g(E[y_i \mid x_i]) = \psi(x_i)\beta \]

Here, \(g(\cdot)\) represents the link function (e.g., identity for linear regression, logit for logistic regression), \(\psi(x_i)\) is the parameterization or encoding of predictor variables, and \(\beta\) is the vector of parameters including main effects and interaction terms [13]. The interaction effect is typically represented by including a product term between the interacting variables in the model matrix.

When testing for interactions between two genetic variants, the model can be specified to test the null hypothesis that the interaction parameter \(\beta_{12} = 0\):

\[ H_0: g(\mu_i) = \beta_0 + \beta_1 SNP_{1i} + \beta_2 SNP_{2i} \]

\[ H_1: g(\mu_i) = \beta_0 + \beta_1 SNP_{1i} + \beta_2 SNP_{2i} + \beta_{12} SNP_{1i} \times SNP_{2i} \]

where \(SNP_{1i}\) and \(SNP_{2i}\) represent genotypes at two different loci for individual i [25]. The statistical evidence for interaction is typically assessed using a likelihood ratio test, comparing the deviance between the null model (without interaction) and the alternative model (with interaction) [25].
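
For reference, the likelihood ratio comparison described above can be written out explicitly. The notation is introduced here and is not taken from [25]: D_0 and D_1 denote the deviances of the null and alternative models, and ℓ_0 and ℓ_1 their maximized log-likelihoods. The identity between the deviance difference and the log-likelihood difference holds for models with fixed dispersion, such as logistic regression, and the χ² reference is asymptotic.

```latex
\[
\Lambda = D_0 - D_1 = 2\,(\ell_1 - \ell_0),
\qquad
\Lambda \;\overset{H_0}{\sim}\; \chi^2_{1}
\]
```

The single degree of freedom corresponds to the one product term \(\beta_{12}\) that distinguishes the two models. A parameterization with more interaction terms would increase the degrees of freedom accordingly.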

Advantages of Joint Parameter Testing

Simulation studies have demonstrated that jointly testing the full set of interaction parameters provides superior power and better control of false positive rates compared to alternative approaches [13]. This comprehensive testing strategy is particularly valuable because it:

- Maintains the natural hierarchy between main effects and interactions

- Reduces the risk of model misspecification by including all relevant terms simultaneously

- Provides a unified framework for testing different types of interactions (G×G, G×E)

- Ensures comparability of results across different studies, facilitating meta-analysis

Table 1: Comparison of Interaction Testing Approaches

| Testing Approach | Statistical Power | Computational Efficiency | Implementation Complexity | Best Use Cases |
|---|---|---|---|---|
| Joint Testing | High | Moderate | Moderate | Hypothesis-driven analysis of specific pathways |
| Marginal Testing | Lower | High | Low | Initial screening of large datasets |
| Two-Stage Testing | Moderate | High | Moderate | Genome-wide interaction scans |

Computational Considerations and Efficient Testing Strategies

Two-Stage Screening for Genome-Wide Analyses

To address the computational challenges of genome-wide interaction testing, two-stage interaction analysis strategies have been developed. These approaches maintain much of the statistical power of a full interaction scan while dramatically reducing computational requirements [25].

In the first stage, all SNP pairs are screened using a computationally efficient test. For binary outcomes, this can be implemented through PLINK's "fast-epistasis" procedure, which compares allelic odds ratios between cases and controls using a closed-form test statistic [25]. For quantitative traits, one approach involves dichotomizing the phenotype at the median to create quasi-case-control groups, though this results in some loss of information [25].

In the second stage, only those SNP pairs meeting a pre-specified significance threshold (\(\alpha_{FAST}\)) from the first stage are carried forward for more rigorous testing in the full GLM framework. This two-stage strategy typically recovers >95% of the power of a full two-locus scan while reducing computation time by several orders of magnitude [25].

Efficient Wald Tests for GLMs

Recent methodological developments have introduced computationally efficient Wald tests for testing interaction parameters within the complete family of GLMs. These tests can be applied to case-control traits, quantitative traits, and any trait modeled by a member of the exponential family [13]. The advantages of this approach include:

- Flexibility to accommodate any combination of parameterization and link function

- Computational efficiency sufficient for modern large-scale datasets

- Applicability to meta-analysis through combination of results across studies

- Generalizability across different study designs and phenotype types
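As a point of reference, the generic Wald statistic for a set of \(q\) interaction parameters has the textbook form below. This is not the specific efficient algorithm of [13], only the general statistic that such methods compute; the symbols \(\hat{\beta}_I\), \(\hat{V}_I\), and \(W\) are notation assumed here for illustration.

```latex
\[
W \;=\; \hat{\beta}_I^{\top}\, \hat{V}_I^{-1}\, \hat{\beta}_I
\;\overset{H_0}{\sim}\; \chi^2_q \quad \text{(asymptotically)}
\]
% \hat{\beta}_I : estimated interaction parameters (a vector of length q)
% \hat{V}_I     : estimated covariance matrix of \hat{\beta}_I
% H_0           : all q interaction parameters equal zero
```

Unlike the likelihood ratio test, the Wald test needs only the fit of the full model, which is one reason it lends itself to large-scale scans.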

Experimental Protocols

Protocol 1: Joint Testing of G×G Interactions in GWAS

This protocol details the steps for conducting genome-wide testing of gene-gene interactions for a quantitative trait using a two-stage approach to balance statistical power and computational efficiency.

Materials and Software Requirements

Table 2: Essential Research Reagents and Computational Tools

| Item | Function | Implementation Examples |
|---|---|---|
| Genotype Data | Genetic variants in standard format | PLINK binary files (.bed, .bim, .fam) |
| Phenotype Data | Continuous or binary traits | CSV or TSV files with sample IDs |
| Covariates | Adjustment for confounding | Age, sex, principal components |
| Statistical Software | Model fitting and testing | R, PLINK, Python, specialized GWAS tools |
| High-Performance Computing | Parallel processing of tests | Computing cluster with job scheduler |

Procedure

Data Preparation and Quality Control

- Filter SNPs based on quality control metrics: call rate >95%, minor allele frequency >1%, Hardy-Weinberg equilibrium p-value >1×10⁻⁶

- Perform population stratification correction using principal components analysis

- Check phenotype distribution and consider transformations if necessary

- Remove related individuals (kinship coefficient >0.044) to ensure sample independence
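The SNP-level filters listed above can be sketched for a single variant as follows. This is an illustrative outline only: the function name `snp_qc` is hypothetical, genotypes are assumed to be coded 0/1/2 with `None` for missing calls, and the Hardy-Weinberg check uses a simple 1-df chi-square approximation rather than the exact test that dedicated tools typically apply.

```python
from math import erfc, sqrt


def snp_qc(genotypes, min_call_rate=0.95, min_maf=0.01, min_hwe_p=1e-6):
    """Apply call-rate, MAF, and HWE filters to one SNP.

    genotypes: list of allele counts (0, 1, 2), with None for missing calls.
    Returns (passes, stats).
    """
    called = [g for g in genotypes if g is not None]
    n = len(called)
    call_rate = n / len(genotypes) if genotypes else 0.0
    if n == 0:
        return False, {"call_rate": call_rate, "maf": 0.0, "hwe_p": 1.0}

    p = sum(called) / (2 * n)          # frequency of the counted allele
    q = 1 - p
    maf = min(p, q)

    # Chi-square goodness-of-fit test against Hardy-Weinberg proportions.
    observed = [called.count(k) for k in (0, 1, 2)]
    expected = [n * q * q, 2 * n * p * q, n * p * p]
    chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)
    hwe_p = erfc(sqrt(chi2 / 2))       # upper tail of chi-square, 1 df

    passes = call_rate > min_call_rate and maf > min_maf and hwe_p > min_hwe_p
    return passes, {"call_rate": call_rate, "maf": maf, "hwe_p": hwe_p}


ok, stats = snp_qc([0] * 49 + [1] * 42 + [2] * 9)
print(ok, stats)
```

In practice these filters are usually applied with established software such as PLINK; the sketch only makes the thresholds explicit.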

First-Stage Screening (Rapid Testing)

- For each SNP pair, perform efficient screening using a closed-form test statistic

- For quantitative traits, dichotomize the phenotype at the median and apply the same rapid test to the resulting quasi-case-control groups

- For binary traits, use PLINK's fast-epistasis option (--fast-epistasis)

- Retain SNP pairs meeting pre-specified significance threshold (typically \(\alpha_{FAST} = 0.001\))
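The screening steps above can be sketched as a simplified closed-form test that compares the allelic log odds ratio between two SNPs in cases versus controls. This follows the general idea described for the fast-epistasis procedure but is not PLINK's implementation. The function names, the 0/1/2 genotype coding, and the 0.5 continuity correction added to each cell are all assumptions made for illustration, and both groups are assumed to be non-empty.

```python
from math import erfc, log, sqrt
from statistics import median


def dichotomize_at_median(y):
    """Turn a quantitative trait into quasi-case (1) / quasi-control (0) labels."""
    m = median(y)
    return [1 if v > m else 0 for v in y]


def allelic_log_or(pairs):
    """Log odds ratio and its variance from the 2x2 allelic table of two SNPs.

    pairs: list of (g1, g2) genotypes coded as allele counts 0/1/2.
    Each individual contributes four allele combinations to the table.
    """
    a = b = c = d = 0.5                 # continuity correction (assumption)
    for x, z in pairs:
        a += x * z
        b += x * (2 - z)
        c += (2 - x) * z
        d += (2 - x) * (2 - z)
    return log(a * d / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def fast_screen(g1, g2, status, alpha_fast=0.001):
    """Z test for a difference in allelic log odds ratio, cases vs controls."""
    cases = [(x, z) for x, z, s in zip(g1, g2, status) if s == 1]
    controls = [(x, z) for x, z, s in zip(g1, g2, status) if s == 0]
    lor_case, var_case = allelic_log_or(cases)
    lor_ctrl, var_ctrl = allelic_log_or(controls)
    z_stat = (lor_case - lor_ctrl) / sqrt(var_case + var_ctrl)
    p = erfc(abs(z_stat) / sqrt(2))     # two-sided normal p-value
    return z_stat, p, p < alpha_fast
```

For a quantitative trait, `status` would be the output of `dichotomize_at_median`; pairs for which the third return value is true are carried forward to the second stage.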

Second-Stage Testing (Comprehensive GLM)

- For each SNP pair passing the first stage, fit the full GLM with the interaction term and test it against the model containing main effects only

- For binary traits, use logistic regression with appropriate adjustments

- Correct for multiple testing using Bonferroni, FDR, or permutation-based approaches
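A minimal sketch of the second-stage test for a quantitative trait is given below, assuming additive genotype coding, an identity link with Gaussian errors, and no covariates. The helper names (`solve`, `fit_ols`, `interaction_lrt`, `bonferroni_significant`) are hypothetical. The likelihood ratio statistic uses the Gaussian form n·log(RSS0/RSS1), and the number of tests used for Bonferroni correction is left as a parameter because the appropriate count depends on the study design.

```python
from math import erfc, log, sqrt


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x


def fit_ols(X, y):
    """Least-squares fit via the normal equations; returns (beta, RSS)."""
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * v for r, v in zip(X, y)) for i in range(p)]
    beta = solve(xtx, xty)
    rss = sum((v - sum(b * x for b, x in zip(beta, r))) ** 2 for r, v in zip(X, y))
    return beta, rss


def interaction_lrt(g1, g2, y):
    """Likelihood ratio test of the SNP1 x SNP2 product term (1 df)."""
    n = len(y)
    null_X = [[1.0, a, b] for a, b in zip(g1, g2)]
    full_X = [[1.0, a, b, a * b] for a, b in zip(g1, g2)]
    _, rss0 = fit_ols(null_X, y)
    beta, rss1 = fit_ols(full_X, y)      # assumes rss1 > 0 (no perfect fit)
    lrt = max(n * log(rss0 / rss1), 0.0)
    p_value = erfc(sqrt(lrt / 2))        # upper tail of chi-square, 1 df
    return beta[3], lrt, p_value


def bonferroni_significant(p_value, n_tests, alpha=0.05):
    """Bonferroni decision; n_tests is chosen by the analyst."""
    return p_value < alpha / n_tests
```

For binary traits the same nested-model comparison applies, with logistic regression replacing the least-squares fit.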

Results Interpretation and Validation

- Annotate significant interactions with gene information and functional annotations

- Check for potential confounding due to population stratification

- Visualize significant interactions using interaction plots or effect size diagrams

- Replicate findings in independent cohorts when possible

Figure 1: Two-stage workflow for genome-wide interaction testing that balances statistical power with computational efficiency.

Protocol 2: Testing G×E Interactions with Multiple Environmental Factors

This protocol describes the analysis of gene-environment interactions with an emphasis on joint testing of interaction parameters and proper handling of multiple environmental variables.

Procedure

Model Specification

- Specify the full GLM including main effects and interaction terms: \[ g(E[Y_i]) = \beta_0 + \beta_G G_i + \beta_E E_i + \beta_{G \times E} G_i \times E_i + \beta_C C_i \] where \(G_i\) is the genetic variant, \(E_i\) is the environmental factor, and \(C_i\) represents covariates

- For multiple environmental factors, include all relevant interaction terms in the joint test

Joint Testing Procedure

- Fit the full model with all interaction terms included

- Fit a reduced model excluding the interaction terms of interest

- Perform a likelihood ratio test comparing the full and reduced models, with degrees of freedom equal to the number of interaction terms tested
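The joint procedure above can be sketched for a quantitative trait with several environmental factors. The example assumes an identity link with Gaussian errors and omits covariates for brevity; all function names are hypothetical. The chi-square tail probability is computed for integer degrees of freedom with a standard recurrence so that the sketch needs only the standard library.

```python
from math import erfc, exp, gamma, log, sqrt


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df."""
    if x <= 0:
        return 1.0
    q, k = (erfc(sqrt(x / 2)), 1) if df % 2 else (exp(-x / 2), 2)
    while k < df:                        # Q(k+2) = Q(k) + (x/2)^(k/2) e^(-x/2) / Gamma(k/2+1)
        q += (x / 2) ** (k / 2) * exp(-x / 2) / gamma(k / 2 + 1)
        k += 2
    return q


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x


def rss(X, y):
    """Residual sum of squares of the least-squares fit of y on X."""
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * v for r, v in zip(X, y)) for i in range(p)]
    beta = solve(xtx, xty)
    return sum((v - sum(b * x for b, x in zip(beta, r))) ** 2 for r, v in zip(X, y))


def joint_gxe_lrt(g, envs, y):
    """Jointly test all G x E product terms; envs is a list of exposure vectors."""
    n, k = len(y), len(envs)
    reduced = [[1.0, g[i]] + [e[i] for e in envs] for i in range(n)]
    full = [row + [g[i] * e[i] for e in envs] for i, row in enumerate(reduced)]
    stat = max(n * log(rss(reduced, y) / rss(full, y)), 0.0)  # assumes nonzero RSS
    return stat, chi2_sf(stat, k)
```

Calling `joint_gxe_lrt(g, [e1, e2], y)` returns a single 2-df test of both interaction terms, rather than two separate 1-df tests.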

Handling of Categorical and Continuous Moderators

- For categorical environmental factors (e.g., treatment vs. control), use dummy coding with appropriate reference groups

- For continuous environmental factors, check the linearity assumption before interpreting the interaction term

- When the moderator is continuous, visualize interactions using the "pick-a-point" approach by plotting regression lines at representative values (e.g., mean, ±1 SD) [27]

Visualization and Interpretation

- Create interaction plots to visualize the nature of significant interactions

- Calculate simple slopes for significant interactions to facilitate interpretation

- Report effect sizes and confidence intervals in addition to p-values
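Simple slopes follow directly from the fitted model: the effect of the genetic variant at a given exposure level e is \(\beta_G + \beta_{G \times E} \cdot e\). The sketch below evaluates this at the mean and at one standard deviation on either side of it. The function name and the coefficient values in the usage line are hypothetical and chosen only to illustrate the calculation.

```python
from statistics import mean, stdev


def simple_slopes(beta_g, beta_gxe, e_values):
    """Genetic effect at representative values of a continuous moderator.

    Returns the slope of G at mean - 1 SD, the mean, and mean + 1 SD of E,
    using slope(e) = beta_g + beta_gxe * e.
    """
    m, s = mean(e_values), stdev(e_values)
    points = {"mean - 1 SD": m - s, "mean": m, "mean + 1 SD": m + s}
    return {label: beta_g + beta_gxe * e for label, e in points.items()}


# Illustrative coefficients only; in practice these come from the fitted GLM.
slopes = simple_slopes(beta_g=0.2, beta_gxe=0.1, e_values=[1, 2, 3, 4, 5])
for label, slope in slopes.items():
    print(f"{label}: {slope:.3f}")
```

Confidence intervals for each simple slope additionally require the covariance between the two coefficient estimates, which this sketch does not cover.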

Applications in Genetic Research

Variance Quantitative Trait Loci (vQTL) Detection

The detection of variance quantitative trait loci (vQTL) represents a powerful alternative approach for discovering G×E and G×G interactions without directly testing all possible interaction pairs. vQTLs are loci where phenotypic variance differs across genotype groups, which can occur when important interactions are omitted from the regression model [28].

Both parametric and non-parametric methods are available for vQTL detection:

- Parametric tests include the Brown-Forsythe (BF) test, deviation regression model (DRM), and double generalized linear model (DGLM)

- Non-parametric tests include the Kruskal-Wallis (KW) test and quantile integral linear model (QUAIL)

Simulation studies indicate that the deviation regression model (DRM) and Kruskal-Wallis test (KW) are the most recommended parametric and non-parametric tests, respectively, considering both false positive rates and computational efficiency [28]. Identifying vQTLs before direct interaction analysis can substantially reduce the number of tests and the associated multiple testing penalty.
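As an illustration of the idea behind the deviation regression model, the sketch below regresses each individual's absolute deviation from the genotype-group median on the allele count. This is a simplified reading of the method, not a reference implementation: the function name is hypothetical, covariates are omitted, at least two distinct genotypes are assumed, and the p-value uses a large-sample normal approximation in place of the t distribution.

```python
from math import erfc, sqrt
from statistics import mean, median


def deviation_regression(genotypes, phenotypes):
    """Regress |y - median within genotype group| on allele count (0/1/2).

    A nonzero slope suggests that phenotypic variance differs by genotype.
    Returns (slope, standard error, approximate two-sided p-value).
    """
    groups = {}
    for g, y in zip(genotypes, phenotypes):
        groups.setdefault(g, []).append(y)
    group_median = {g: median(values) for g, values in groups.items()}
    dev = [abs(y - group_median[g]) for g, y in zip(genotypes, phenotypes)]

    # Simple linear regression of the deviations on genotype.
    n = len(dev)
    g_bar, d_bar = mean(genotypes), mean(dev)
    sxx = sum((g - g_bar) ** 2 for g in genotypes)
    sxy = sum((g - g_bar) * (d - d_bar) for g, d in zip(genotypes, dev))
    slope = sxy / sxx
    intercept = d_bar - slope * g_bar
    rss = sum((d - intercept - slope * g) ** 2 for g, d in zip(genotypes, dev))
    se = sqrt(rss / (n - 2) / sxx)       # assumes residual variation is nonzero

    z = slope / se
    p_value = erfc(abs(z) / sqrt(2))     # normal approximation (assumption)
    return slope, se, p_value
```

Loci flagged this way can then be prioritized for direct interaction testing, which is how a vQTL scan reduces the multiple-testing burden.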

Case Study: Interaction in Cardiovascular Disease

In a genome-wide interaction analysis of Lp(a) plasma levels, a joint testing approach identified a significant interaction (p = 2.42×10⁻⁹) between two tag variants in the LPA locus [13]. This interaction was successfully replicated in an independent cohort (p = 6.97×10⁻⁷), demonstrating the utility of joint testing methods for identifying robust genetic interactions.

The analysis workflow included:

- Quality control of genotype and phenotype data

- Model specification with appropriate parameterization of genetic variants

- Joint testing of interaction parameters using efficient Wald tests

- Replication in independent cohorts

- Meta-analysis combining results from multiple studies

This case study highlights how joint testing of interaction parameters can reveal biologically meaningful interactions that might be missed by marginal testing approaches.

Advanced Methodological Considerations

Meta-Analysis of Interaction Effects

Combining interaction results across multiple studies requires special methodological considerations. The meta-analysis of interaction effects can be challenging due to differences in study design, environmental exposures, and genetic backgrounds across cohorts [13]. Nevertheless, methods have been developed to effectively combine interaction results:

- Use of z-score-based meta-analysis for combining test statistics across studies

- Inverse-variance weighted meta-analysis for combining effect size estimates

- Accounting for heterogeneity between studies using random-effects models

- Stratified analyses to investigate sources of heterogeneity in interaction effects
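The first two combination strategies above can be sketched in a few lines. The example assumes each study reports an interaction effect estimate with its standard error (for the inverse-variance approach) or a signed z-score with its sample size (for the z-score approach). The function names and the square-root-of-sample-size weighting are illustrative choices, not prescriptions from [13].

```python
from math import erfc, sqrt


def inverse_variance_meta(betas, ses):
    """Fixed-effect inverse-variance weighted meta-analysis.

    Returns the pooled estimate, its standard error, a two-sided p-value,
    and Cochran's Q as a simple heterogeneity statistic.
    """
    weights = [1 / s ** 2 for s in ses]
    pooled = sum(w * b for w, b in zip(weights, betas)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    p_value = erfc(abs(pooled / pooled_se) / sqrt(2))
    q_stat = sum(w * (b - pooled) ** 2 for w, b in zip(weights, betas))
    return pooled, pooled_se, p_value, q_stat


def zscore_meta(z_scores, sample_sizes):
    """Combine signed z-scores with weights proportional to sqrt(sample size)."""
    weights = [sqrt(n) for n in sample_sizes]
    z = sum(w * zi for w, zi in zip(weights, z_scores)) / sqrt(sum(w * w for w in weights))
    return z, erfc(abs(z) / sqrt(2))


# Made-up inputs for illustration only.
print(inverse_variance_meta([0.2, 0.4], [0.1, 0.1]))
print(zscore_meta([2.0, 2.0], [100, 100]))
```

A large Cochran's Q relative to its degrees of freedom (number of studies minus one) would point toward the random-effects and stratified analyses listed above.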

Robustness to Link Function Misspecification

A critical consideration in interaction testing within GLMs is the potential for link function misspecification. The choice of link function determines the parameter subspace belonging to the null model, and misspecification can inflate error rates in a way that cannot be resolved by replication in separate cohorts [13]. To address this issue:

- Consider testing interactions using a family of link functions rather than a single link function

- Use goodness-of-link tests to assess the appropriateness of the chosen link function

- Be aware that previously suggested goodness-of-link tests may not be appropriate for joint testing of interaction parameters

Table 3: Comparison of vQTL Detection Methods

| Method | Type | Best For | Limitations | Computational Efficiency |
|---|---|---|---|---|
| Brown-Forsythe (BF) | Parametric | Normally distributed traits | Severe FPR inflation with MAF <0.2 | High |
| Deviation Regression (DRM) | Parametric | Continuous predictors | Less robust to non-normal traits | High |
| Double GLM (DGLM) | Parametric | Normally distributed traits | Invalid for non-normal traits | Moderate |
| Kruskal-Wallis (KW) | Non-parametric | Robustness to outliers | Less powerful for normal traits | High |
| QUAIL | Non-parametric | Non-normal traits, covariate adjustment | Lower power, computationally intensive | Low |

Figure 2: Comprehensive workflow for interaction analysis in genetic studies, emphasizing joint testing and replication.

Joint testing of interaction parameters within the GLM framework provides a powerful and flexible approach for detecting gene-gene and gene-environment interactions in genetic research. This methodology offers superior statistical power compared to marginal testing approaches, and efficient computational strategies make it feasible for genome-wide applications. The two-stage testing approach balances comprehensive interaction assessment with computational practicality, enabling researchers to detect meaningful biological interactions that contribute to complex traits and diseases.

As genetic datasets continue to grow in size and complexity, joint testing methods will play an increasingly important role in unraveling the intricate networks of genetic and environmental factors underlying human health and disease. The integration of these methods with functional validation and biological pathway analysis will further enhance our understanding of the genetic architecture of complex traits.