Computational Frameworks for Complex Disease Stratification: From Multi-Omics Integration to Clinical Translation

Abstract

Complex diseases like cancer, Alzheimer's, and cardiovascular disorders demand precision medicine approaches that move beyond broad classifications. This article explores the computational frameworks revolutionizing disease stratification by integrating multi-omics data, clinical records, and artificial intelligence. We examine foundational concepts in systems biology, detail methodological approaches for data integration and patient clustering, address critical troubleshooting and optimization challenges, and evaluate validation strategies ensuring clinical relevance. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current capabilities and future directions for deploying computational stratification in biomedical research and clinical practice, ultimately enabling more precise patient subtyping, biomarker discovery, and personalized therapeutic development.

The Systems Medicine Foundation: Principles and Drivers of Computational Disease Stratification

The field of biomedical research has undergone a fundamental transformation in its approach to understanding human diseases, evolving from a reductionist focus on single biomarkers to a holistic paradigm of multi-omics integration. This evolution represents a critical response to the inherent complexity of biological systems, where diseases emerge from dynamic interactions across multiple molecular layers rather than isolated alterations in single molecules. Traditional single-omics approaches, while valuable for identifying individual molecular changes, have proven insufficient for capturing the intricate networks and pathways that drive disease pathogenesis and progression. The limitations are particularly evident in complex diseases such as cancer, neurodegenerative disorders, and autoimmune conditions, where substantial heterogeneity exists both between patients and within disease subtypes [1] [2].

The emergence of high-throughput technologies has enabled the comprehensive profiling of biological systems at multiple levels, including genomics, transcriptomics, proteomics, metabolomics, and epigenomics. This technological revolution has generated unprecedented volumes of data, creating both opportunities and challenges for biomedical research. While each omics layer provides valuable insights, it is through their integration that researchers can construct a more complete picture of disease mechanisms. Multi-omics integration allows for the identification of novel biomarkers and molecular signatures that would remain undetectable through single-omics analyses alone, enabling more accurate disease classification, prognosis prediction, and therapeutic targeting [1] [3].

The transition to multi-omics strategies represents more than just a technical advancement; it signifies a conceptual shift in how we perceive and investigate disease biology. By simultaneously analyzing multiple molecular dimensions, researchers can move beyond single-layer correlations to generate and test hypotheses about causal relationships across biological layers, identify key regulatory nodes in disease networks, and unravel the complex interplay between genetic predisposition, environmental influences, and disease manifestations. This integrated approach is particularly valuable for addressing the challenges of disease heterogeneity, as it enables the stratification of patient populations into distinct molecular subtypes with potential implications for personalized treatment strategies [4] [5].

The Multi-Omics Landscape: Technologies and Data Types

The multi-omics framework encompasses a diverse array of technologies that collectively enable comprehensive molecular profiling. Each omics layer interrogates a distinct aspect of biological systems, providing complementary information that, when integrated, offers a multidimensional perspective on disease mechanisms. Genomics primarily investigates alterations at the DNA level, leveraging advanced sequencing technologies such as whole exome sequencing (WES) and whole genome sequencing (WGS) to identify copy number variations (CNVs), genetic mutations, and single nucleotide polymorphisms (SNPs). Large-scale sequencing efforts, exemplified by projects like MSK-IMPACT, have revealed that approximately 37% of tumors harbor actionable alterations, highlighting the clinical potential of genomic biomarkers [1].

Transcriptomics methods explore RNA expression using probe-based microarrays and next-generation RNA sequencing, encompassing the study of mRNAs, long noncoding RNAs (lncRNAs), miRNAs, and other small noncoding RNAs (sncRNAs). The high sensitivity and cost-effectiveness of RNA sequencing have made transcriptomics a dominant component of multi-omics research. Clinically validated gene-expression signatures such as Oncotype DX (21-gene, TAILORx trial) and MammaPrint (70-gene, MINDACT trial) have demonstrated the utility of transcriptomic biomarkers in tailoring adjuvant chemotherapy decisions in patients with breast cancer [1].

Proteomics investigates protein abundance, modifications, and interactions using high-throughput methods including reverse-phase protein arrays, liquid chromatography–mass spectrometry (LC–MS), and mass spectrometry (MS). Post-translational modifications such as phosphorylation, acetylation, and ubiquitination represent critical regulatory mechanisms and therapeutic targets. Studies by the Clinical Proteomic Tumor Analysis Consortium (CPTAC) of ovarian and breast cancers showed that proteomics can be used to identify functional subtypes and reveal potential druggable vulnerabilities missed by genomics alone, directly informing the discovery of protein-based biomarkers for predicting therapeutic responses [1].

Metabolomics examines cellular metabolites, including small molecules, carbohydrates, peptides, lipids, and nucleosides. Techniques like MS, LC–MS, and gas chromatography–mass spectrometry enable comprehensive metabolic profiling. Classic examples include IDH1/2-mutant gliomas, where the oncometabolite 2-hydroxyglutarate (2-HG) functions as both a diagnostic and a mechanistic biomarker. More recently, a 10-metabolite plasma signature developed in gastric cancer patients demonstrated superior diagnostic accuracy compared with conventional tumor markers [1].

Epigenomics investigates DNA and histone modifications, including DNA methylation and histone acetylation. Whole genome bisulfite sequencing (WGBS) and ChIP-seq enable comprehensive epigenetic profiling. A classic clinical biomarker of glioblastoma is MGMT promoter methylation, which is a predictor of benefit from temozolomide chemotherapy. Additionally, DNA methylation–based multi-cancer early detection assays (e.g., Galleri test) are under clinical evaluation [1].

Table 1: Core Omics Technologies and Their Applications in Disease Research

| Omics Layer | Key Technologies | Molecular Elements Analyzed | Representative Clinical Applications |
|---|---|---|---|
| Genomics | WGS, WES, MSK-IMPACT | SNPs, CNVs, mutations | Tumor mutational burden for immunotherapy response [1] |
| Transcriptomics | RNA-seq, microarrays | mRNA, lncRNA, miRNA | Oncotype DX for breast cancer chemotherapy decisions [1] |
| Proteomics | LC-MS, RPPA | Proteins, PTMs | CPTAC subtypes for ovarian and breast cancers [1] |
| Metabolomics | LC-MS, GC-MS | Metabolites, lipids | 2-HG for IDH-mutant glioma diagnosis [1] |
| Epigenomics | WGBS, ChIP-seq | DNA methylation, histone modifications | MGMT promoter methylation for temozolomide response [1] |

Recent technological advances have introduced single-cell multi-omics approaches and spatial multi-omics technologies, providing unprecedented resolution in characterizing cellular states and activities within their tissue context. These technologies are expanding the scope of biomarker discovery and deepening our understanding of tumor heterogeneity and microenvironment interactions, which are essential for personalized therapeutic strategies in cancer and other complex diseases [1].

Computational Frameworks for Multi-Omics Integration

The integration of multi-omics data presents significant computational challenges due to the high dimensionality, heterogeneity, and complexity of the datasets. To address these challenges, researchers have developed various integration strategies that can be broadly categorized into three approaches: early integration, intermediate integration, and late integration. Early integration involves combining data from different omics levels at the beginning of the analysis pipeline. This approach can help identify correlations and relationships between different omics layers but may lead to information loss and biases. Intermediate integration involves integrating data from different omics levels at the feature selection, feature extraction, or model development stages, allowing for more flexibility and control over the integration process. Late integration, also known as "vertical integration," involves the analysis of each omics dataset separately, with results combined at the final stage of the analysis pipeline. This approach helps preserve the unique characteristics of each omics dataset but may lead to difficulties in identifying relationships between different omics layers [3].
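The difference between early and late integration can be illustrated with a minimal Python sketch. The function names, layer names, and numeric values below are hypothetical and chosen only for illustration; averaging per-layer scores is one simple way to combine results at the late stage, not the method of any cited study.

```python
from statistics import mean


def shared_samples(tables):
    """Return the sorted sample IDs that appear in every per-layer table."""
    return sorted(set.intersection(*(set(table) for table in tables.values())))


def early_integration(layers):
    """Early integration: concatenate each sample's features across layers.

    Layers are concatenated in alphabetical order of layer name.
    """
    return {
        sid: [value for name in sorted(layers) for value in layers[name][sid]]
        for sid in shared_samples(layers)
    }


def late_integration(layer_scores):
    """Late integration: average prediction scores produced per layer."""
    return {
        sid: mean(layer_scores[name][sid] for name in layer_scores)
        for sid in shared_samples(layer_scores)
    }


# Toy feature values (illustrative only); sample s3 lacks transcriptomics.
layers = {
    "transcriptomics": {"s1": [0.2, 1.5], "s2": [0.9, 0.1]},
    "proteomics": {"s1": [3.0], "s2": [2.5], "s3": [1.0]},
}
fused = early_integration(layers)

# Toy per-layer classifier scores (illustrative only).
layer_scores = {
    "transcriptomics": {"s1": 0.8, "s2": 0.3},
    "proteomics": {"s1": 0.6, "s2": 0.1},
}
combined = late_integration(layer_scores)
```

In this sketch, samples missing from any layer are dropped in both modes. Real pipelines often need a more deliberate policy for incomplete samples, which is one reason intermediate methods that tolerate missing views are attractive.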

Machine learning and deep learning approaches have emerged as powerful tools for multi-omics integration, enabling the identification of complex patterns and relationships that may not be apparent through traditional statistical methods. For example, an AI-driven multi-omics framework applied to schizophrenia research integrated plasma proteomics, post-translational modifications (PTMs), and metabolomics data using 17 different machine learning models. The study found that multi-omics integration significantly enhanced classification performance, reaching a maximum AUC of 0.9727 using LightGBMXT, compared to 0.9636 with CNNBiLSTM for proteomics alone. The integration of multiple omics layers provided superior performance in distinguishing schizophrenia patients from healthy individuals, highlighting the value of comprehensive molecular profiling [5].

Network-based approaches offer another powerful framework for multi-omics integration, providing a holistic view of relationships among biological components in health and disease. These methods enable the identification of key molecular interactions and biomarkers that drive disease processes. For instance, in a multi-omics study of schizophrenia, protein interaction networks implicated coagulation factors F2, F10, and PLG, as well as complement regulators CFI and C9, as central molecular hubs. The clustering of these molecules highlighted a potential axis linking immune activation, blood coagulation, and tissue homeostasis, biological domains increasingly recognized in psychiatric disorders [5].
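One simple way to nominate candidate hubs in an interaction network is to rank nodes by degree. The sketch below assumes an undirected network supplied as a list of node pairs; the node names `P1` to `P4` and the edges are placeholders, not interactions taken from the cited study, and published analyses typically use richer centrality measures.

```python
from collections import Counter


def degree_ranking(edges):
    """Rank nodes of an undirected network by their number of neighbours."""
    # Collapse duplicate and reversed pairs, and ignore self-loops.
    unique_edges = {frozenset(edge) for edge in edges if edge[0] != edge[1]}
    degree = Counter()
    for edge in unique_edges:
        for node in edge:
            degree[node] += 1
    # Sort by descending degree, then by name for a stable order.
    return sorted(degree.items(), key=lambda item: (-item[1], item[0]))


# Placeholder edges (illustrative only); ("P3", "P1") duplicates ("P1", "P3").
toy_edges = [("P1", "P2"), ("P1", "P3"), ("P1", "P4"), ("P2", "P3"), ("P3", "P1")]
hubs = degree_ranking(toy_edges)
```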

The DIABLO (Data Integration Analysis for Biomarker discovery using Latent variable approaches for Omics studies) framework represents another sophisticated approach for integrating multiple omics datasets. This method was successfully applied in a dynamic study of influenza progression in mice, where it integrated lung transcriptome, metabolome, and serum metabolome data across multiple time points. The analysis identified several novel biomarkers associated with disease progression, including Ccl8, Pdcd1, Gzmk, kynurenine, L-glutamine, and adipoyl-carnitine, and enabled the development of a serum-based influenza disease progression scoring system [6].

Table 2: Multi-Omics Integration Strategies and Their Applications

| Integration Strategy | Key Features | Advantages | Limitations | Representative Applications |
|---|---|---|---|---|
| Early Integration | Data combined at raw or pre-processed level | Identifies cross-omics correlations | Susceptible to noise and batch effects; "curse of dimensionality" | DeepMO for breast cancer subtyping [3] |
| Intermediate Integration | Integration during feature selection/extraction | Flexible; balances shared and specific signals | Requires careful tuning of integration parameters | DIABLO for influenza biomarker discovery [6] |
| Late Integration | Separate analysis followed by result combination | Preserves omics-specific characteristics | May miss cross-omics relationships | SKI-Cox for glioblastoma prognosis [3] |
| Network-Based Integration | Models molecular interactions as networks | Holistic view of biological systems | Complex to implement and interpret | Protein interaction networks in schizophrenia [5] |
| Automated Machine Learning | AI-driven feature selection and model optimization | Handles high dimensionality efficiently | Limited model interpretability without additional tools | AutoML for schizophrenia risk stratification [5] |

Genetic programming has emerged as an innovative computational approach for optimizing multi-omics integration. In a breast cancer survival analysis study, researchers employed genetic programming to evolve optimal combinations of molecular features from genomics, transcriptomics, and epigenomics data. The proposed framework consisted of three key components: data preprocessing, adaptive integration and feature selection via genetic programming, and model development. The integrated multi-omics approach yielded a concordance index (C-index) of 78.31 during 5-fold cross-validation on the training set and 67.94 on the test set, with both values expressed on a 0 to 100 scale (0.7831 and 0.6794 on the conventional 0 to 1 scale). The gap between the two figures suggests that the cross-validated estimate is somewhat optimistic, but the test-set result still indicates the potential of adaptive multi-omics integration in improving breast cancer survival analysis [3].
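For readers less familiar with the metric, the following is a minimal sketch of Harrell's concordance index on the conventional 0 to 1 scale, under which a value reported as 78.31 would presumably correspond to 0.7831. The function name and the toy survival data are illustrative assumptions and are unrelated to the cited study.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C: share of comparable pairs that the risk scores order correctly.

    times: follow-up time per patient
    events: 1 if the event was observed, 0 if the patient was censored
    risk_scores: higher score means higher predicted risk (shorter survival)
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's follow-up time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5  # tied predictions count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Toy data (illustrative only): the third patient is censored.
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]
perfect = concordance_index(times, events, [0.9, 0.7, 0.4, 0.1])
reversed_order = concordance_index(times, events, [0.1, 0.4, 0.7, 0.9])
```

A value of 0.5 corresponds to random ordering, which is why test-set figures are best read against that baseline rather than against zero.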

Application Notes: Success Stories in Disease Stratification

Inflammatory Bowel Disease Subtyping

Inflammatory bowel disease (IBD), comprising Crohn's disease (CD) and ulcerative colitis (UC), represents a complex condition with diverse manifestations that have historically challenged precise classification and treatment. A multi-omics approach applied to the SPARC IBD cohort demonstrated the power of integrated analysis for biomarker discovery and patient stratification. Researchers analyzed genomics, transcriptomics from gut biopsy samples, and proteomics from blood plasma from hundreds of patients. They trained a machine learning model that successfully classified UC versus CD samples based on multi-omics signatures. The most predictive features of the model included both known and novel omics signatures for IBD, potentially serving as diagnostic biomarkers. Patient subgroup analysis in each indication uncovered omics features associated with disease severity in UC patients and with tissue inflammation in CD patients. This culminated with the observation of two CD subpopulations characterized by distinct inflammation profiles, offering promising avenues for the application of precision medicine strategies [4].

Breast Cancer Survival Prediction

Breast cancer remains a major global health issue, requiring novel strategies for prognostic evaluation and therapeutic decision-making. A comprehensive multi-omics study leveraged data from The Cancer Genome Atlas to obtain deeper insights into breast cancer biology by integrating genomics, transcriptomics, and epigenomics. The researchers employed genetic programming to optimize the integration and feature selection process within the multi-omics dataset. The framework consisted of three key components: data preprocessing, adaptive integration and feature selection via genetic programming, and model development. The integrated multi-omics approach yielded a concordance index (C-index) of 78.31 during 5-fold cross-validation on the training set and 67.94 on the test set, with both values expressed on a 0 to 100 scale. These findings highlight the importance of considering the complex interplay between different molecular layers in breast cancer and provide a flexible and scalable approach that can be extended to other cancer types [3].

Schizophrenia Risk Stratification

Schizophrenia (SCZ) is a complex psychiatric disorder with heterogeneous molecular underpinnings that remain poorly resolved by conventional single-omics approaches. To address this gap, researchers applied an AI-driven multi-omics framework to an open access dataset comprising plasma proteomics, post-translational modifications (PTMs), and metabolomics to systematically dissect SCZ pathophysiology. In a cohort of 104 individuals, comparative analysis of 17 machine learning models revealed that multi-omics integration significantly enhanced classification performance, reaching a maximum AUC of 0.9727 using LightGBMXT, compared to 0.9636 with CNNBiLSTM for proteomics alone. Interpretable feature prioritization identified carbamylation at immunoglobulin-constant region sites IGKCK20 and IGHG1K8, alongside oxidation of coagulation factor F10 at residue M8, as key discriminative molecular events. Functional analyses identified significantly enriched pathways including complement activation, platelet signaling, and gut microbiota-associated metabolism. These results implicate immune–thrombotic dysregulation as a critical component of SCZ pathology, with PTMs of immune proteins serving as quantifiable disease indicators [5].

Influenza Progression Monitoring

A multi-omics approach to studying influenza A virus (IAV) infection in mice provided valuable insights into the dynamic biomarkers of disease progression. Researchers conducted a comprehensive evaluation of physiological and pathological parameters in Balb/c mice infected with H1N1 influenza over a 14-day period. They employed the DIABLO multi-omics integration method to analyze dynamic changes in the lung transcriptome, metabolome, and serum metabolome from mild to severe stages of infection. The analysis highlighted the critical importance of intervention within the first 6 days post-infection to prevent severe disease and identified several novel biomarkers associated with disease progression, including Ccl8, Pdcd1, Gzmk, kynurenine, L-glutamine, and adipoyl-carnitine. Additionally, the team developed a serum-based influenza disease progression scoring system that serves as a valuable tool for early diagnosis and prognosis of severe influenza [6].

Experimental Protocols and Methodologies

Protocol 1: Multi-Omics Integration Framework for Disease Classification

This protocol outlines a comprehensive framework for integrating multiple omics datasets to classify disease states and identify biomarker signatures, adapted from successful applications in schizophrenia and inflammatory bowel disease research [4] [5].

Sample Preparation and Data Generation

- Collect appropriate biological samples (tissue, blood, plasma) from well-characterized patient cohorts and matched controls

- Extract and quantify molecular components using standardized protocols for each omics layer:

  - Genomics: Perform whole genome or exome sequencing using Illumina platforms

  - Transcriptomics: Conduct RNA sequencing with library preparation using TruSeq kits

  - Proteomics: Prepare samples for LC-MS/MS analysis with appropriate digestion and cleanup

  - Metabolomics: Use LC-MS platforms with quality control pools and internal standards

- Process raw data through established pipelines: alignment to reference genomes for sequencing data, peak identification and quantification for MS data

Data Preprocessing and Quality Control

- Perform normalization within each omics dataset using appropriate methods (e.g., quantile normalization, variance stabilizing transformation)

- Handle missing values using imputation methods (e.g., k-nearest neighbors, random forest) or exclusion based on predefined thresholds

- Conduct batch effect correction using ComBat or similar algorithms when multiple batches are present

- Apply quality control metrics specific to each data type:

  - Sequencing data: check sequencing depth, alignment rates, GC content

  - MS data: evaluate retention time stability, peak intensity distributions, signal-to-noise ratios
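Two of the preprocessing steps above can be sketched in a few lines of Python: excluding features with too many missing values, and quantile normalization across samples. This is a simplified illustration under stated assumptions: missing values are encoded as `None`, the 20% threshold is an arbitrary example, and ties are broken by sort order rather than averaged as some implementations do.

```python
def drop_sparse_features(matrix, max_missing=0.2):
    """Keep features whose fraction of missing (None) values is acceptable.

    matrix: dict mapping feature name -> list of values, one per sample
    """
    return {
        feature: values
        for feature, values in matrix.items()
        if values.count(None) / len(values) <= max_missing
    }


def quantile_normalize(columns):
    """Force every sample (column) to share the same value distribution.

    columns: list of equal-length lists, one list of feature values per sample
    """
    n = len(columns[0])
    if any(len(col) != n for col in columns):
        raise ValueError("all samples must have the same number of features")
    sorted_cols = [sorted(col) for col in columns]
    # Mean of the r-th smallest value across samples, for each rank r.
    rank_means = [sum(col[r] for col in sorted_cols) / len(columns) for r in range(n)]
    normalized = []
    for col in columns:
        order = sorted(range(n), key=lambda i: col[i])
        out = [0.0] * n
        for rank, idx in enumerate(order):
            out[idx] = rank_means[rank]  # replace each value by its rank mean
        normalized.append(out)
    return normalized


# Toy values (illustrative only).
kept = drop_sparse_features({"a": [1, None, 3, 4, 5], "b": [None, None, 1, 2, 3]})
normalized = quantile_normalize([[5, 2, 3], [4, 1, 6]])
```

After normalization every sample contains the same set of values, with only their assignment to features differing, which is what makes intensities comparable across samples.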

Multi-Omics Integration and Model Building

- Implement feature selection within each omics dataset to reduce dimensionality (e.g., removing low-variance features, selecting top features by ANOVA)

- Choose an integration strategy based on research question:

  - Early integration: Concatenate selected features from all omics layers into a single matrix

  - Intermediate integration: Use methods like DIABLO or MOFA to integrate datasets while preserving their structure

  - Late integration: Build separate models for each omics layer and combine predictions

- Train multiple machine learning models (e.g., random forest, XGBoost, neural networks) using cross-validation

- Optimize hyperparameters through grid search or automated machine learning frameworks
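The feature-filtering and cross-validation steps above might look like the following minimal sketch. It uses only the Python standard library; the function names, the variance threshold, and the toy labels are assumptions for illustration, and a production pipeline would more likely rely on an established machine learning library.

```python
import random
from statistics import pvariance


def filter_low_variance(features, threshold=0.0):
    """Drop features whose population variance does not exceed the threshold.

    features: dict mapping feature name -> list of values, one per sample
    """
    return {
        name: values
        for name, values in features.items()
        if pvariance(values) > threshold
    }


def stratified_folds(labels, k, seed=0):
    """Split sample indices into k folds that preserve class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of each class round-robin into the folds.
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    return folds


# Toy inputs (illustrative only).
selected = filter_low_variance({"flat": [1.0, 1.0, 1.0], "variable": [0.0, 1.0, 2.0]})
labels = [0] * 6 + [1] * 4
folds = stratified_folds(labels, k=2)
```

Any supervised selection step, such as ranking features by ANOVA, should be repeated inside each training fold. Selecting features on the full dataset before splitting leaks label information into the held-out folds and inflates performance estimates.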

Validation and Interpretation

- Evaluate model performance on held-out test sets using metrics appropriate for the task (AUC-ROC for classification, C-index for survival)

- Perform permutation testing to assess statistical significance of model performance

- Interpret important features using model-specific explanation methods (SHAP, permutation importance)

- Validate findings in independent cohorts when available

- Conduct functional enrichment analysis on identified biomarkers to assess biological relevance
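As an illustration of the evaluation and permutation-testing steps, the sketch below computes AUC-ROC directly from its rank interpretation and estimates a permutation p-value by shuffling the labels. The function names, the number of permutations, and the toy scores are illustrative assumptions rather than settings from the cited studies.

```python
import random


def roc_auc(labels, scores):
    """AUC as the probability that a random positive outscores a random negative."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def permutation_p_value(labels, scores, n_permutations=1000, seed=0):
    """Share of label shufflings whose AUC is at least the observed AUC."""
    rng = random.Random(seed)
    observed = roc_auc(labels, scores)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(shuffled)
        if roc_auc(shuffled, scores) >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_permutations + 1)


# Toy predictions (illustrative only).
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
separated_labels = [0] * 8 + [1] * 8
separated_scores = list(range(16))
p_value = permutation_p_value(separated_labels, separated_scores, n_permutations=200)
```

The smallest p-value this procedure can return is 1 / (n_permutations + 1), so the number of permutations should be chosen with the intended significance threshold in mind.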

Protocol 2: Dynamic Multi-Omics Profiling in Disease Progression

This protocol describes an approach for capturing dynamic changes in multi-omics profiles during disease progression, with applications in infectious disease and cancer research [6].

Longitudinal Study Design

- Establish appropriate animal models or identify patient cohorts for longitudinal sampling

- Define critical time points for sample collection based on known disease progression patterns

- For animal studies: include appropriate controls (e.g., sham-treated, vehicle-treated) at each time point

- Determine sample size considering expected effect sizes and multiple testing burden
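The multiple testing burden mentioned in the last step is commonly handled by controlling the false discovery rate. The following is a minimal sketch of the Benjamini-Hochberg step-up procedure; the function name and the example p-values are illustrative, and the choice of this procedure over alternatives such as Bonferroni correction is an assumption rather than a recommendation from the cited work.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return one boolean per hypothesis: True if rejected at FDR level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank whose p-value falls under its step-up threshold.
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            cutoff_rank = rank
    # Reject every hypothesis ranked at or below that cutoff.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


# Toy p-values (illustrative only).
decisions = benjamini_hochberg([0.039, 0.001, 0.216, 0.008])
```

Because the procedure is step-up, a p-value that misses its own threshold can still be rejected when a larger p-value passes a later one, as in `benjamini_hochberg([0.02, 0.03, 0.035])`, where all three are rejected.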

Sample Collection and Processing

- Collect multiple sample types at each time point (e.g., blood, tissue, other relevant biofluids)

- Process samples immediately or flash-freeze in liquid nitrogen to preserve molecular integrity

- For transcriptomics: use RNA stabilization reagents to prevent degradation

- For metabolomics: employ quenching protocols to arrest metabolic activity rapidly

Multi-Omics Data Generation

- Process samples for each omics platform using standardized protocols

- Include quality control samples throughout the analytical batch:

  - Pooled quality control samples for metabolomics and proteomics

- Generate data for all omics layers using consistent analytical conditions across time points

Data Integration and Dynamic Biomarker Identification

- Preprocess each omics dataset individually with appropriate normalization and quality control

- Use multivariate methods like DIABLO to identify correlated omics features across time points

- Apply trajectory analysis to identify patterns of change across the time course

- Construct temporal networks to visualize how molecular relationships evolve during progression

- Identify early-warning biomarkers that signal transitions between disease stages
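A very simple form of trajectory analysis is to ask when each feature first departs from its baseline distribution. The sketch below flags the earliest time point at which a feature lies more than a chosen number of standard deviations from control samples. The function names, the z-score threshold of 2.0, and all values are hypothetical, and this heuristic is offered as an illustration rather than as the approach used in the cited influenza study.

```python
from statistics import mean, stdev


def first_deviation(time_points, trajectory, baseline, z_threshold=2.0):
    """Earliest time point deviating from baseline by more than z_threshold SDs."""
    mu = mean(baseline)
    sd = stdev(baseline)
    if sd == 0:
        raise ValueError("baseline has zero variance")
    for t, value in zip(time_points, trajectory):
        if abs(value - mu) / sd > z_threshold:
            return t
    return None  # the feature never leaves the baseline range


def rank_early_markers(time_points, trajectories, baselines, z_threshold=2.0):
    """Order features by how early they first deviate from baseline."""
    onsets = {
        name: first_deviation(time_points, values, baselines[name], z_threshold)
        for name, values in trajectories.items()
    }
    return sorted((t, name) for name, t in onsets.items() if t is not None)


# Toy measurements (illustrative only): days post-infection and feature levels.
days = [0, 2, 4, 8]
control = [1.0, 1.2, 0.8, 1.0]
trajectories = {
    "f1": [1.1, 1.2, 1.9, 3.0],
    "f2": [1.0, 1.8, 2.0, 2.5],
    "f3": [1.0, 1.0, 1.0, 1.0],
}
baselines = {name: control for name in trajectories}
ranking = rank_early_markers(days, trajectories, baselines)
```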

Visualization and Interpretation

- Create heatmaps with temporal patterns to visualize dynamic changes

- Generate network diagrams showing how molecular interactions shift over time

- Develop integrated scoring systems that combine information from multiple omics layers

- Correlate molecular dynamics with clinical or pathological parameters
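An integrated scoring system can be as simple as a weighted sum of standardized feature values. The sketch below assumes features are standardized against a reference group and combined with signed weights; the feature names, weights, and values are hypothetical and do not reproduce the scoring system of any cited study.

```python
from statistics import mean, stdev


def composite_score(sample, reference, weights):
    """Weighted sum of z-scores of a sample relative to a reference group.

    sample: dict feature -> measured value for one subject
    reference: dict feature -> list of values from the reference group
    weights: dict feature -> signed weight (hypothetical, chosen by the analyst)
    """
    total = 0.0
    for feature, weight in weights.items():
        mu = mean(reference[feature])
        sd = stdev(reference[feature])
        if sd == 0:
            raise ValueError(f"reference values for {feature} have zero variance")
        total += weight * (sample[feature] - mu) / sd
    return total


# Toy values (illustrative only): m1 rises and m2 falls with severity.
reference = {"m1": [1.0, 2.0, 3.0], "m2": [10.0, 20.0, 30.0]}
weights = {"m1": 0.5, "m2": -1.0}
score = composite_score({"m1": 4.0, "m2": 10.0}, reference, weights)
```

In practice the weights would be estimated from data, for example from model coefficients, and the resulting score would need validation against clinical or pathological outcomes.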

Multi-Omics Integration Workflow

Successful multi-omics research requires both wet-lab reagents for data generation and dry-lab tools for computational analysis. The following table details essential resources for implementing the protocols described in this article.

Table 3: Essential Research Reagents and Computational Tools for Multi-Omics Studies

| Category | Specific Tools/Reagents | Function/Application | Key Features |
|---|---|---|---|
| Wet-Lab Reagents | TruSeq RNA Library Prep Kit | Transcriptomics: RNA-seq library preparation | Compatibility with low-input samples; strand-specific information [1] |
| Wet-Lab Reagents | QIAGEN DNeasy Blood & Tissue Kit | Genomics: DNA extraction from various samples | High-quality DNA suitable for WGS and WES [1] |
| Wet-Lab Reagents | ProteoExtract Protein Extraction Kit | Proteomics: Protein isolation and digestion | Compatibility with MS analysis; maintains PTMs [5] |
| Wet-Lab Reagents | BioVision Metabolite Extraction Kit | Metabolomics: Metabolite extraction from biofluids | Comprehensive coverage of metabolite classes [6] |
| Wet-Lab Reagents | EZ DNA Methylation Kit | Epigenomics: Bisulfite conversion for methylation studies | Efficient conversion with minimal DNA degradation [1] |
| Computational Tools | DIABLO R Package | Multi-omics integration | Discriminant analysis for multiple datasets; biomarker identification [6] |
| Computational Tools | MOFA+ (Python/R) | Multi-omics factor analysis | Identifies latent factors across omics layers; handles missing data [3] |
| Computational Tools | AutoGluon | Automated machine learning | Automated model selection and hyperparameter tuning [5] |
| Computational Tools | SHAP (SHapley Additive exPlanations) | Model interpretation | Explains individual predictions; identifies feature importance [5] |
| Computational Tools | Cytoscape | Network visualization and analysis | Visualizes molecular interaction networks; plugin ecosystem [5] |

The evolution from single biomarkers to multi-omics integration represents a fundamental transformation in how we approach disease research and clinical applications. This paradigm shift has enabled a more comprehensive understanding of disease mechanisms, moving beyond isolated molecular events to capture the complex interactions across biological layers that drive disease pathogenesis and progression. The integration of genomics, transcriptomics, proteomics, metabolomics, and epigenomics has proven particularly valuable for addressing the challenges of disease heterogeneity, enabling the identification of molecular subtypes with distinct clinical trajectories and therapeutic responses.

Looking ahead, several emerging technologies and methodologies promise to further advance the field of multi-omics research. Single-cell multi-omics technologies are rapidly evolving, allowing researchers to profile multiple molecular layers simultaneously within individual cells. This approach provides unprecedented resolution for characterizing cellular heterogeneity and identifying rare cell populations that may play critical roles in disease processes. Similarly, spatial multi-omics technologies enable the preservation of spatial context during molecular profiling, offering insights into how cellular organization and tissue architecture influence disease development and progression. These technologies are particularly valuable for understanding the tumor microenvironment in cancer and the complex cellular interactions in inflammatory and neurological disorders [1].

The integration of artificial intelligence and machine learning with multi-omics data will continue to drive innovation in biomarker discovery and disease stratification. As demonstrated in the schizophrenia and breast cancer examples, AI-driven approaches can identify complex patterns across omics layers that may not be apparent through traditional statistical methods. Future developments in explainable AI will be particularly important for enhancing the interpretability and clinical translatability of these models. Additionally, the incorporation of real-world data and digital health technologies, such as wearable sensors and mobile health applications, may enable the correlation of multi-omics profiles with dynamic changes in clinical symptoms and physiological parameters, creating a more comprehensive picture of disease states [7] [5].

Despite these exciting advancements, important challenges remain in the field of multi-omics research. Technical challenges include the need for improved methods for integrating heterogeneous data types, handling batch effects, and managing the computational complexity of analyzing high-dimensional datasets. Biological challenges include understanding the temporal dynamics of molecular changes and distinguishing causal drivers from secondary effects in disease networks. Clinical challenges include the translation of multi-omics findings into validated diagnostic tests and the demonstration of clinical utility through prospective trials. Furthermore, the increasing complexity of multi-omics studies raises important ethical considerations regarding data sharing, patient privacy, and the appropriate interpretation and communication of results [1] [8].

As multi-omics technologies continue to evolve and become more accessible, they hold the promise of transforming clinical practice through more precise disease classification, earlier detection, and personalized treatment strategies. The integration of multi-omics data into clinical trials, as facilitated by frameworks like the SPIRIT 2025 guidelines for trial protocols, will be essential for validating the clinical utility of multi-omics biomarkers and advancing the field of precision medicine [9]. By embracing the complexity of biological systems through integrated approaches, researchers and clinicians can work toward a future where disease prevention, diagnosis, and treatment are tailored to the unique molecular characteristics of each individual and their disease.

Complex diseases such as cancer, autoimmune disorders, and metabolic conditions represent a significant challenge in modern healthcare due to their heterogeneous nature. Traditional disease classifications based solely on clinical symptoms or single biomarkers often fail to capture the underlying molecular diversity, leading to suboptimal treatment outcomes. The emerging paradigm of precision medicine addresses this challenge through deep molecular stratification, leveraging three fundamental concepts: molecular fingerprints, handprints, and endotypes. These concepts form the cornerstone of a computational framework that enables researchers to deconstruct complex diseases into biologically distinct subgroups. By integrating multilevel data from genomic, transcriptomic, proteomic, and metabolomic platforms, this approach facilitates the identification of precise molecular signatures that correspond to specific disease mechanisms, clinical trajectories, and therapeutic responses [10] [11]. The ultimate goal is to transition from a one-size-fits-all treatment model to tailored therapeutic strategies that target the specific molecular drivers of disease in individual patients [12].

Molecular fingerprints represent the foundational layer in this stratification hierarchy, capturing disease-associated patterns from individual data platforms. The integration of multiple fingerprints creates composite handprints that provide a more comprehensive view of the disease state. These molecular signatures ultimately enable the identification of endotypes—distinct disease subtypes defined by specific biological mechanisms rather than clinical presentation alone. This conceptual framework is transforming both drug development and clinical practice by embedding our knowledge of disease etiology into research design and therapeutic decision-making [11] [12]. The following sections provide detailed definitions, methodologies, and applications of these core concepts within computational frameworks for complex disease stratification.

Core Definitions and Conceptual Framework

Molecular Fingerprints: Single-Platform Biomarker Signatures

Molecular fingerprints are defined as biomarker signatures derived from data collected from a single technological platform [11]. A biomarker, in this sense, is a defined characteristic measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention [12]. Computationally, a fingerprint converts complex molecular structures or biological measurements into a consistent machine-readable format, typically a vector or bitstring, enabling quantitative comparison and analysis [13] [14].

In the context of complex diseases, fingerprints can capture various molecular features:

- Gene expression patterns from transcriptomic analyses

- DNA methylation profiles from epigenomic studies

- Protein abundance measurements from proteomic platforms

- Metabolite concentrations from metabolomic assays

- Structural motifs from chemical compound analyses [13] [11] [14]

The generation of molecular fingerprints involves transforming raw molecular data into standardized representations that preserve essential biological information while enabling computational analysis. For chemical compounds, this might involve representing structures as binary vectors indicating the presence or absence of specific substructures [13] [14]. For omics data, fingerprints typically represent normalized measurements of molecular abundance or activity across a defined set of features [11].
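Once compounds are encoded as binary vectors, comparing two of them reduces to counting shared bits. The sketch below illustrates this with the Jaccard-Tanimoto coefficient; the function name and the two eight-bit vectors are hypothetical, chosen only to keep the arithmetic visible.

```python
def tanimoto(bits_a, bits_b):
    """Jaccard-Tanimoto similarity between two equal-length 0/1 vectors."""
    if len(bits_a) != len(bits_b):
        raise ValueError("fingerprints must have the same length")
    # Bits set in both fingerprints (shared substructures)
    both = sum(1 for a, b in zip(bits_a, bits_b) if a and b)
    # Bits set in at least one fingerprint
    either = sum(1 for a, b in zip(bits_a, bits_b) if a or b)
    # Two all-zero fingerprints are treated as identical by convention here
    return both / either if either else 1.0


# Hypothetical 8-bit substructure fingerprints for two compounds
compound_a = [1, 1, 0, 1, 0, 0, 1, 0]
compound_b = [1, 0, 0, 1, 0, 1, 1, 0]

similarity = tanimoto(compound_a, compound_b)
print(similarity)  # 3 shared bits out of 5 set in either vector -> 0.6
```

Real fingerprints are far longer (hundreds to thousands of bits), but the comparison is the same.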

Handprints: Multi-Platform Integration of Molecular Data

Handprints represent the logical evolution beyond single-platform fingerprints. They are defined as biomarker signatures derived from data collected from multiple technical platforms, either by fusion of multiple fingerprints or by direct integration of several data types [11]. Where fingerprints provide a one-dimensional view of a biological system, handprints offer a multi-dimensional perspective that more accurately reflects the complexity of disease pathophysiology.

The conceptual foundation of handprints rests on the understanding that complex diseases rarely arise from aberrations in a single molecular platform but rather from interactions across multiple biological layers. For example, the integration of mRNA expression, DNA methylation, and miRNA expression data can generate clusters of cancer patients with distinct clinical outcomes that would not be apparent when analyzing any single data type in isolation [11]. This approach aligns with the systems medicine rationale, which studies biological organisms as complete and complex systems by integrating various sources of information [11].

Endotypes: Mechanistically Distinct Disease Subtypes

Endotypes represent distinct disease subtypes characterized by specific functional or pathobiological mechanisms, beyond mere clinical presentation [11]. Unlike phenotypes, which represent observable characteristics, endotypes capture the complex causative mechanisms in disease, providing a mechanistic basis for patient stratification [11]. The identification of endotypes is particularly valuable in heterogeneous clinical conditions where patients with similar symptoms may have different underlying disease processes and, consequently, different responses to therapy.

The relationship between fingerprints, handprints, and endotypes forms a logical progression in disease stratification: molecular fingerprints from individual platforms are integrated to form handprints, which in turn enable the identification of mechanistically distinct endotypes. This stratification approach moves beyond traditional classification systems based solely on clinical presentation to define disease subtypes by their underlying biology, with profound implications for targeted therapeutic development [11] [12].

Computational Framework for Disease Stratification

The identification of molecular fingerprints, handprints, and endotypes follows a structured computational framework comprising four major steps: dataset subsetting, feature filtering, omics-based clustering, and biomarker identification [10] [11]. Table 1 summarizes these steps together with the data preparation that precedes them and the endotype validation that follows. This framework provides a systematic approach for analyzing complex, multi-scale biological data to identify clinically relevant patient subgroups. The overall workflow transforms raw molecular measurements into clinically actionable stratification schemas, enabling the implementation of translational P4 medicine (predictive, preventive, personalized, and participatory) [11].

Table 1: Key Steps in the Computational Stratification Framework

| Step | Description | Methods | Output |

|---|---|---|---|

| Data Preparation | Quality control, normalization, and handling of missing data | Principal Component Analysis (PCA), ComBat for batch effect correction, multiple imputation | Curated, analysis-ready datasets |

| Dataset Subsetting | Selecting relevant patient subgroups and molecular features | Clinical criteria, molecular thresholds | Focused datasets for analysis |

| Feature Filtering | Identifying statistically significant molecular features | Hypothesis testing, false discovery rate correction | Candidate biomarkers |

| Omics-Based Clustering | Identifying patient subgroups based on molecular profiles | K-means, hierarchical clustering, validation with WB-ratio, Dunn index, Silhouette width | Molecular fingerprints and handprints |

| Biomarker Identification | Selecting features that define clusters | Differential expression, multivariate analysis | Validated fingerprints and handprints |

| Endotype Validation | Linking molecular clusters to clinical outcomes | Survival analysis, treatment response assessment | Clinically relevant endotypes |
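The feature filtering step in the table above relies on false discovery rate correction. As a minimal sketch, assuming the Benjamini-Hochberg procedure is the correction in use (the source names FDR correction but not a specific method), the adjusted p-values can be computed as follows. The five p-values are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical raw p-values for five candidate features
raw_p = [0.001, 0.008, 0.039, 0.041, 0.20]
adj_p = benjamini_hochberg(raw_p)

# Keep features whose adjusted p-value is below 0.05
selected = [i for i, q in enumerate(adj_p) if q < 0.05]
print(selected)  # the features with raw p = 0.039 and 0.041 no longer pass
```

The example shows why the correction matters: two features with raw p-values below 0.05 are dropped once multiple testing is accounted for.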

Data Preparation and Quality Control

The initial data preparation phase is critical for generating reliable molecular fingerprints. This involves platform-specific technical quality control and normalization according to the standards of each technological platform [11]. Key considerations include:

Batch Effect Correction: Technical biases arising from variability in production platforms, staff, batches, or reagent lots must be identified and corrected. Tools such as ComBat and methodologies developed by van der Kloet can adjust for batch effects when necessary [11]. Descriptive methods like Principal Component Analysis (PCA) provide visual assessment of batch effects before and after correction.

Missing Data Handling: Missing values are addressed through imputation (mean, mode, mean of nearest neighbors, or multiple imputation) or deletion, depending on the pattern of missingness [11]. For mass spectrometry data where missing values often exceed 10%, a careful process distinguishing data missing completely at random from those below the lower limit of quantitation is implemented [11].

Outlier Management: Outliers arising from technical artifacts are discarded, while biological outliers are retained, flagged, and subjected to statistical analysis. The robustness of these decisions is assessed through re-analysis using different methodological approaches [11].
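The distinction drawn above between values missing completely at random (MCAR) and values below the lower limit of quantitation (LLQ) can be made concrete with a short sketch. It assumes the LLQ/√2 substitution that this document mentions later for censored values and mean imputation for MCAR values; the function name, the flag vector, and the data are hypothetical.

```python
import math


def impute_feature(values, llq, below_llq_flags):
    """Impute one feature measured across samples.

    values: floats, with None marking a missing measurement.
    llq: lower limit of quantitation for this feature.
    below_llq_flags: True where a missing value is known to be below the LLQ.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("no observed values to impute from")
    observed_mean = sum(observed) / len(observed)

    imputed = []
    for value, below_llq in zip(values, below_llq_flags):
        if value is not None:
            imputed.append(value)                 # measured: keep as-is
        elif below_llq:
            imputed.append(llq / math.sqrt(2))    # censored: LLQ / sqrt(2)
        else:
            imputed.append(observed_mean)         # assumed MCAR: feature mean
    return imputed


# Hypothetical metabolite intensities for five samples
metabolite = [4.0, None, 6.0, None, 5.0]
flags = [False, True, False, False, False]
filled = impute_feature(metabolite, llq=1.0, below_llq_flags=flags)
print(filled)
```

Mean imputation is the simplest of the options listed above; nearest-neighbor or multiple imputation would replace only the `observed_mean` branch.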

Advanced Stratification with ClustAll Framework

For more advanced stratification tasks, the ClustAll package provides a comprehensive implementation of the computational framework for complex disease stratification [15]. This Bioconductor package is specifically designed to handle intricacies in clinical data, including mixed data types, missing values, and collinearity. The ClustAll workflow involves three main steps:

Data Complexity Reduction (DCR): Multiple data embeddings are created to replace highly correlated variables with lower-dimension projections derived from Principal Component Analysis (PCA). This process explores all relevant groupings derived from a hierarchical clustering-based dendrogram, computing an embedding for each depth in the dendrogram [15].

Stratification Process (SP): The algorithm calculates and preliminarily evaluates stratifications for each embedding by computing a stratification for each feasible combination of embedding, dissimilarity metric, and clustering method across a predefined range of cluster numbers (default 2 to 6). The optimal number of clusters is determined using three internal validation measures: the sum-of-squares (WB-ratio), Dunn index, and average Silhouette width [15].

Consensus-based Stratifications (CbS): Non-robust stratifications are filtered out using bootstrapping, with stratifications demonstrating stability below 85% being excluded. From the remaining robust stratifications, representative outcomes are selected based on similarity using the Jaccard index as the distance metric [15].
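The robustness filter in the consensus step can be illustrated with a simplified sketch. It is not ClustAll's internal implementation: it assumes that stability is measured as the mean Jaccard index between the sets of co-clustered sample pairs in a reference stratification and in each resampled run, and that every run labels the same samples. All function names and labels are hypothetical.

```python
from itertools import combinations


def co_clustered_pairs(labels):
    """Set of sample index pairs that share a cluster label."""
    return {
        (i, j)
        for i, j in combinations(range(len(labels)), 2)
        if labels[i] == labels[j]
    }


def jaccard_partitions(labels_a, labels_b):
    """Jaccard index between two stratifications of the same samples."""
    pairs_a = co_clustered_pairs(labels_a)
    pairs_b = co_clustered_pairs(labels_b)
    union = pairs_a | pairs_b
    return len(pairs_a & pairs_b) / len(union) if union else 1.0


def is_robust(reference, resampled_runs, threshold=0.85):
    """Keep a stratification only if its mean agreement reaches the threshold."""
    scores = [jaccard_partitions(reference, run) for run in resampled_runs]
    return sum(scores) / len(scores) >= threshold


reference = [0, 0, 0, 1, 1, 1]
same_up_to_relabeling = [1, 1, 1, 0, 0, 0]
one_sample_moved = [0, 0, 1, 1, 1, 1]

print(jaccard_partitions(reference, same_up_to_relabeling))  # 1.0
print(is_robust(reference, [same_up_to_relabeling, one_sample_moved]))
```

Comparing co-clustered pairs rather than raw labels makes the score insensitive to how clusters happen to be numbered in each run.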

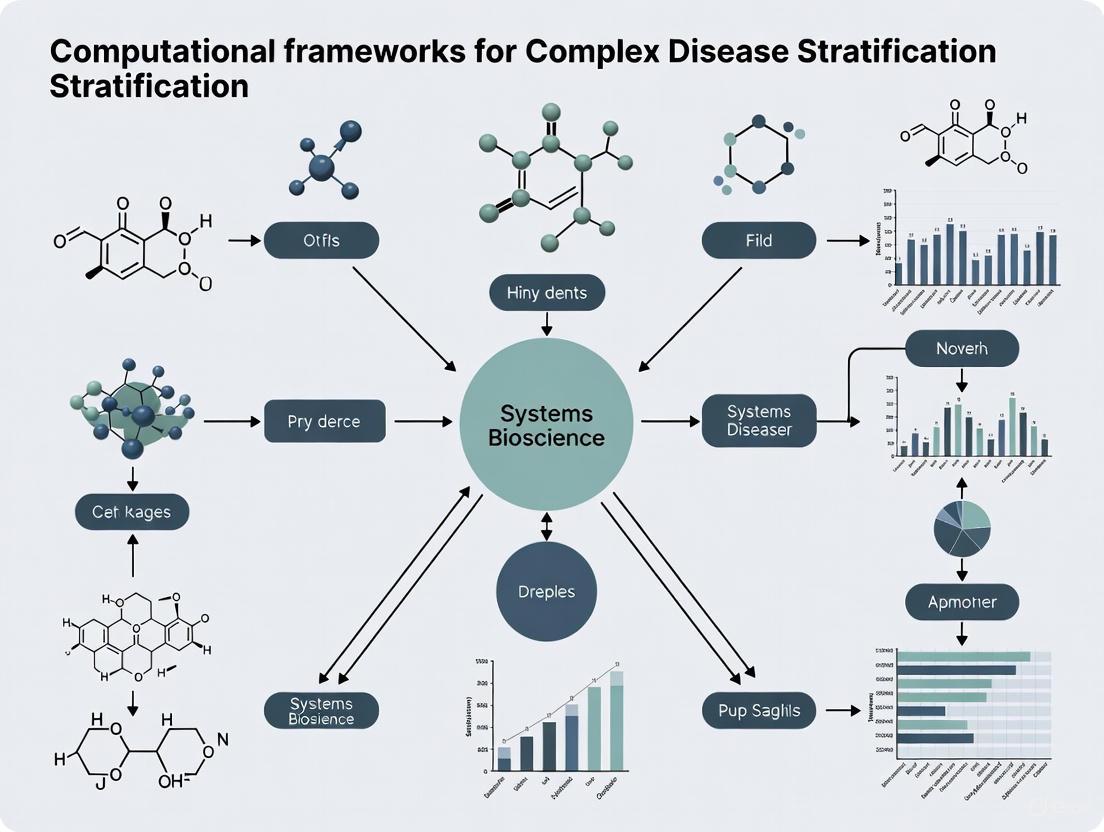

The following diagram illustrates the comprehensive ClustAll workflow, including both the core stratification process and the interpretation modules:

Molecular Fingerprints: Technical Specifications and Generation Protocols

Types of Molecular Fingerprints and Their Applications

Molecular fingerprints can be categorized into distinct types based on the molecular information they capture and their generation algorithms. Understanding these categories is essential for selecting appropriate fingerprints for specific research applications in complex disease stratification.

Table 2: Categories of Molecular Fingerprints and Their Characteristics

| Fingerprint Category | Description | Key Algorithms | Applications in Disease Stratification |

|---|---|---|---|

| Dictionary-Based (Structural Keys) | Each bit represents presence/absence of predefined functional groups or substructures | MACCS, PubChem fingerprints, BCI fingerprints | Rapid filtering and search for molecular structures in chemical databases |

| Circular Fingerprints | Capture novel circular fragments by extending from each atom to neighbors iteratively | ECFP, FCFP, Molprint2D/3D | Representing complex structures like natural products; capturing local atomic environments |

| Topological (Path-Based) | Analyze paths through molecular graph between atom pairs | Daylight fingerprints, Atom Pairs, Topological Torsion | Encoding chemical information and molecular graphs for QSAR modeling |

| Pharmacophore Fingerprints | Encode chemical functionalities expected to contribute to ligand-receptor binding | 3-point PharmPrint, 4-point pharmacophore fingerprints | Capturing essential interaction information for drug-receptor interactions |

| Protein-Ligand Interaction | Represent binding patterns between receptors and ligands | Structural Interaction Fingerprints (SIFt) | Comparing protein-ligand interaction specificity and binding modes |

Protocol for Generating Extended-Connectivity Fingerprints (ECFPs)

Extended-Connectivity Fingerprints (ECFPs) represent one of the most widely used circular fingerprint algorithms in chemical biology and drug discovery. The following protocol details the steps for generating ECFPs for compound analysis in disease stratification research:

Materials and Reagents

- Chemical compounds in standardized format (SMILES, SDF, or MOL files)

- Computational environment (Python with RDKit library or similar cheminformatics toolkit)

- Hardware: Standard computer with sufficient RAM (8GB minimum, 16GB recommended) for processing large compound libraries

Procedure

- Compound Standardization: Input chemical structures are standardized through solvent exclusion, salt removal, and charge neutralization using the ChEMBL structure curation package or similar tools [13]. Compounds that fail standardization or have unparsable SMILES are removed from subsequent analysis.

- Atom Identifier Assignment: Initialize each non-hydrogen atom with an integer identifier based on atomic properties including atomic number, atomic charge, bond order, and atomic connectivity [14].

- Iterative Neighborhood Expansion: For each atom, generate a fragment identifier by combining its current identifier with those of its immediate neighbors. This process is repeated for a specified number of iterations, the "radius" parameter. Radii of 1 to 3 are typical and correspond to diameters of 2 to 6, as in the names ECFP2, ECFP4, and ECFP6 [16] [14].

- Feature Hashing: Apply a hashing function to each fragment identifier to generate a corresponding integer value. This value is then mapped to a position in a fixed-length bit vector (typically 1024, 2048, or 4096 bits) by a modulo operation using the vector length [16].

- Bit Vector Population: Set the corresponding bits in the fingerprint vector to 1 for all hashed positions generated in the previous step. The result is a binary vector where each bit represents the presence (1) or absence (0) of specific molecular fragments in the compound [13] [14].

- Validation and Quality Control: Verify fingerprint generation by testing with known benchmark compounds and comparing with reference implementations. Assess the discrimination power of generated fingerprints using similarity searching and clustering experiments [13].
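The identifier assignment, neighborhood expansion, hashing, and folding steps can be sketched in plain Python. This is a toy illustration of the idea, not the ECFP algorithm as implemented in RDKit or other toolkits: it uses only the atomic number as the initial invariant, ignores bond orders and charges, and does not remove structurally duplicate features. The function name and the molecule encodings are hypothetical.

```python
import zlib


def toy_circular_fingerprint(atomic_numbers, bonds, radius=2, n_bits=2048):
    """Toy circular fingerprint for a molecule given as a heavy-atom graph.

    atomic_numbers: one integer per heavy atom.
    bonds: list of (i, j) atom index pairs.
    """
    neighbors = {i: [] for i in range(len(atomic_numbers))}
    for i, j in bonds:
        neighbors[i].append(j)
        neighbors[j].append(i)

    def stable_hash(item):
        # crc32 of the repr is deterministic across Python sessions
        return zlib.crc32(repr(item).encode("utf-8"))

    # Layer 0: one identifier per atom from its invariant
    identifiers = [stable_hash((0, z)) for z in atomic_numbers]
    features = set(identifiers)

    # Layers 1..radius: fold each atom's neighbor identifiers into its own
    for layer in range(1, radius + 1):
        updated = []
        for atom, current in enumerate(identifiers):
            environment = tuple(sorted(identifiers[n] for n in neighbors[atom]))
            updated.append(stable_hash((layer, current, environment)))
        identifiers = updated
        features.update(identifiers)

    # Fold every feature into a fixed-length bit vector by modulo
    bits = [0] * n_bits
    for feature in features:
        bits[feature % n_bits] = 1
    return bits


# Heavy atoms of ethanol (C-C-O), written in two different atom orders
ethanol = toy_circular_fingerprint([6, 6, 8], [(0, 1), (1, 2)])
ethanol_reordered = toy_circular_fingerprint([8, 6, 6], [(0, 1), (1, 2)])
# Dimethyl ether (C-O-C) has the same atoms but different connectivity
dimethyl_ether = toy_circular_fingerprint([6, 8, 6], [(0, 1), (1, 2)])

print(sum(ethanol), ethanol == ethanol_reordered, ethanol == dimethyl_ether)
```

Sorting the neighbor identifiers before hashing is what makes the result independent of atom numbering. For production work, a maintained cheminformatics toolkit should be used instead of a sketch like this one.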

Applications in Disease Stratification

ECFPs have demonstrated particular utility in representing natural products, which often exhibit complex structural motifs including multiple stereocenters, higher fractions of sp³-hybridized carbons, and extended ring systems [13]. These structural characteristics differentiate natural products from typical drug-like compounds and make them challenging to encode with simpler dictionary-based fingerprints. The dynamic generation of molecular features in ECFPs enables effective capture of these complex structural patterns, facilitating the identification of bioactive natural products with potential therapeutic applications for complex diseases [13].

Experimental Protocols for Generating Disease Handprints

Multi-Omics Data Integration Protocol

The generation of handprints through multi-omics data integration requires careful experimental design and computational execution. The following protocol outlines the key steps for creating handprints from multiple molecular data platforms:

Materials and Reagents

- Matched patient samples with multiple omics data types (e.g., genomic, transcriptomic, proteomic)

- High-performance computing infrastructure for large-scale data integration

- Statistical software environment (R, Python with pandas/scikit-learn)

- Specialized integration tools (ClustAll package, mixOmics, MOFA)

Procedure

- Data Collection and Preprocessing: Collect matched multi-omics datasets from the same patient cohort. Perform platform-specific quality control, normalization, and batch effect correction for each data type individually [11]. Ensure consistent patient identifiers across all datasets.

- Feature Selection: For each omics platform, identify statistically significant features associated with the disease phenotype of interest. Apply false discovery rate correction to account for multiple testing. Retain features meeting significance thresholds (e.g., p-value < 0.05 after FDR correction) for integration [11].

- Data Transformation: Convert selected features from each platform into molecular fingerprints using appropriate representation methods (e.g., z-score normalization, presence/absence encoding, or quantitative abundance measures) [11].

- Similarity Matrix Construction: Calculate patient-to-patient similarity matrices for each molecular fingerprint type using appropriate distance metrics (e.g., Euclidean distance for continuous data, Jaccard distance for binary data, or Gower's distance for mixed data types) [15].

- Similarity Network Fusion: Integrate similarity matrices from multiple platforms using techniques such as Similarity Network Fusion (SNF) or kernel fusion methods. This creates a unified patient similarity network that captures shared patterns across omics platforms [11].

- Cluster Identification: Apply community detection algorithms or clustering methods to the fused similarity network to identify patient subgroups. Validate cluster stability using bootstrapping approaches and internal validation measures [15].

- Handprint Definition: Characterize each patient cluster by the combination of molecular features across platforms that define the subgroup. These multi-platform signatures constitute the disease handprints [11].

- Clinical Validation: Associate handprints with clinical outcomes such as disease progression, treatment response, or survival to establish clinical relevance [11].
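The similarity matrix and fusion steps of this protocol can be sketched with a deliberately simple stand-in. SNF itself diffuses each patient network through the others iteratively; the code below replaces that with a plain average of per-platform similarity matrices, which is enough to show how two platforms are combined into one patient-by-patient matrix. The function names, the Gaussian-style kernel, and the four-patient data are hypothetical.

```python
import math


def euclidean_affinity(profiles, scale=1.0):
    """Patient-by-patient similarity exp(-d^2 / scale) from feature vectors."""
    n = len(profiles)
    similarity = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            squared_distance = sum(
                (a - b) ** 2 for a, b in zip(profiles[i], profiles[j])
            )
            similarity[i][j] = math.exp(-squared_distance / scale)
    return similarity


def average_fusion(matrices):
    """Element-wise mean of similarity matrices (a stand-in for SNF)."""
    n = len(matrices[0])
    return [
        [sum(m[i][j] for m in matrices) / len(matrices) for j in range(n)]
        for i in range(n)
    ]


# Hypothetical scaled profiles for four patients on two platforms
mrna = [[0.0, 0.1], [0.1, 0.0], [2.0, 2.1], [2.1, 2.0]]
methylation = [[1.0], [0.9], [0.1], [0.0]]

fused = average_fusion(
    [euclidean_affinity(mrna), euclidean_affinity(methylation)]
)

# Patients 0 and 1 should look more alike than patients 0 and 2
print(round(fused[0][1], 3), round(fused[0][2], 3))
```

The fused matrix can then be passed to any clustering method that accepts a precomputed similarity, which corresponds to the cluster identification step.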

Case Study: Ovarian Cancer Stratification

A practical implementation of this protocol was demonstrated in a study using the TCGA Ovarian serous cystadenocarcinoma (OV) dataset [11]. The analysis integrated mRNA expression, DNA methylation, and miRNA expression data to identify molecular handprints that defined patient subgroups with distinct clinical outcomes. The study generated a higher number of stable and clinically relevant clusters than previously reported, enabling the development of predictive models of patient outcomes [11]. This case study highlights the power of handprint-based stratification to reveal disease heterogeneity that would remain undetected when analyzing individual molecular platforms in isolation.

Successful implementation of molecular fingerprint and handprint analyses requires specific computational tools and resources. The following table details essential components of the research toolkit for complex disease stratification studies:

Table 3: Essential Research Resources for Molecular Fingerprinting and Stratification

| Resource Category | Specific Tools/Resources | Application Context | Key Features |

|---|---|---|---|

| Cheminformatics Tools | RDKit, OpenBabel, CDK | Generating molecular fingerprints for chemical compounds | Support for multiple fingerprint algorithms, standardized molecular representation |

| Omics Analysis Platforms | ClustAll, mixOmics, MOFA | Multi-omics data integration and handprint generation | Handling of mixed data types, missing values, collinearity |

| Molecular Databases | COCONUT, CMNPD, ChEMBL, DrugBank | Source of natural products and bioactive compounds for fingerprint analysis | Curated collections with structural and bioactivity data |

| Programming Environments | R (>= 4.2), Python with pandas/scikit-learn | Implementation of custom analysis pipelines | Extensive statistical and machine learning libraries |

| Similarity Metrics | Jaccard-Tanimoto, Euclidean distance, Gower's distance | Comparing fingerprints and calculating patient similarities | Appropriate for different data types (binary, continuous, mixed) |

| Clustering Algorithms | K-means, hierarchical clustering, consensus clustering | Identifying patient subgroups based on molecular fingerprints | Multiple method options with validation measures |

| Visualization Tools | ComplexHeatmap, networkD3, TMAP | Exploring and presenting stratification results | Interactive visualization of complex relationships |

The concepts of molecular fingerprints, handprints, and endotypes represent a fundamental framework for addressing disease heterogeneity in the era of precision medicine. By providing standardized approaches for representing molecular features at single-platform and multi-platform levels, these concepts enable researchers to deconstruct complex diseases into biologically distinct subgroups with shared underlying mechanisms. The computational frameworks and experimental protocols outlined in this document provide practical guidance for implementing these approaches in disease stratification research.

Looking forward, several emerging trends are likely to shape the future development of these concepts. The increasing availability of real-world data from comprehensive genome profiling and other next-generation technologies creates opportunities for expanding fingerprint and handprint analyses to larger and more diverse patient populations [12]. Similarly, advances in artificial intelligence and machine learning are enabling more sophisticated integration of multi-omics data, potentially revealing novel biological insights into disease mechanisms [12]. The growing emphasis on biomarker-driven drug development further underscores the importance of these stratification approaches for identifying patient subgroups most likely to benefit from targeted therapies [12].

As these technologies and methodologies continue to evolve, the systematic application of molecular fingerprints, handprints, and endotype identification promises to transform our understanding of complex diseases and accelerate the development of personalized therapeutic strategies tailored to individual patients' molecular profiles.

The complexity of human diseases necessitates a systems-level approach to understand their underlying mechanisms. Multi-omics data integration has emerged as a powerful paradigm for elucidating the intricate interactions between various biological layers, from genetic predispositions to metabolic outcomes. This approach combines datasets across genomics, transcriptomics, proteomics, metabolomics, and epigenomics to provide a holistic view of biological systems and disease pathophysiology [17]. For complex disease stratification, multi-omics profiling enables the identification of distinct molecular subtypes that may respond differently to therapies, thereby paving the way for precision medicine approaches tailored to individual patient profiles [11] [18].

The integration of these diverse datatypes presents both unprecedented opportunities and significant computational challenges. High dimensionality, data heterogeneity, and technical variability require sophisticated analytical frameworks to extract biologically meaningful insights [2]. This application note provides a comprehensive overview of the multi-omics data landscape, detailed protocols for data integration, and practical tools for researchers aiming to implement these approaches in complex disease stratification research.

Publicly available repositories house vast amounts of multi-omics data, serving as invaluable resources for the research community. These databases provide standardized, well-annotated datasets that enable large-scale integrative analyses. The table below summarizes key multi-omics data repositories relevant to complex disease research.

Table 1: Major Public Repositories for Multi-Omics Data

| Repository Name | Primary Disease Focus | Data Types Available | Sample Scope |

|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | Cancer | RNA-Seq, DNA-Seq, miRNA-Seq, SNV, CNV, DNA methylation, RPPA [18] | >20,000 tumor samples across 33 cancer types [18] |

| Cancer Cell Line Encyclopedia (CCLE) | Cancer | Gene expression, copy number, sequencing data, pharmacological profiles [18] | 947 human cancer cell lines across 36 tumor types [18] |

| International Cancer Genome Consortium (ICGC) | Cancer | Whole genome sequencing, somatic and germline mutations [18] | 20,383 donors across 76 cancer projects [18] |

| METABRIC | Breast cancer | Clinical traits, gene expression, SNP, CNV [18] | Breast tumor samples with clinical outcomes [18] |

| TARGET | Pediatric cancers | Gene expression, miRNA expression, copy number, sequencing data [18] | Various childhood cancer samples [18] |

| Omics Discovery Index (OmicsDI) | Consolidated diseases | Genomics, transcriptomics, proteomics, metabolomics [18] | Consolidated datasets from 11 repositories [18] |

Computational Frameworks for Multi-Omics Data Integration

Conceptual Framework for Complex Disease Stratification

A robust computational framework for complex disease stratification typically involves multiple coordinated steps, from data preparation to biomarker identification. The foundational framework proposed by De Meulder et al. (2018) outlines four major phases: dataset subsetting, feature filtering, omics-based clustering, and biomarker identification [11] [10]. This framework facilitates the generation of single and multi-omics signatures of disease states, enabling researchers to identify molecularly distinct patient subgroups with clinical relevance.

Flexible Deep Learning Approaches

Recent advances in deep learning have produced more flexible frameworks for multi-omics integration. Flexynesis, a recently developed deep learning toolkit, addresses several limitations of previous approaches by offering modular architectures, automated hyperparameter tuning, and support for multiple analytical tasks including regression, classification, and survival modeling [19]. This tool enables both single-task modeling (predicting one outcome variable) and multi-task modeling (jointly predicting multiple outcome variables), allowing researchers to build models that reflect the complexity of biological systems [19].

Table 2: Computational Methods for Multi-Omics Integration

| Method Type | Representative Tools | Key Features | Best Suited Applications |

|---|---|---|---|

| Deep Learning | Flexynesis [19], DCCA [20], scMVAE [20] | Handles non-linear relationships, flexible architectures | Drug response prediction, survival analysis, biomarker discovery |

| Matrix Factorization | MOFA+ [20] | Identifies latent factors representing shared variance across omics | Patient stratification, data visualization |

| Manifold Alignment | UnionCom [20], Pamona [20] | Projects different omics data onto common latent space | Unmatched sample integration |

| Variational Autoencoders | GLUE [20], Cobolt [20], MultiVI [20] | Uses prior biological knowledge to link omics | Triple-omic integration, mosaic integration |

| Clustering Frameworks | ClustAll [21] | Handles mixed data types, missing values, identifies multiple stratifications | Clinical data stratification, patient subtyping |

Experimental Protocols for Multi-Omics Integration

Protocol 1: Patient Stratification Using Multi-Omics Data

Application: Identification of disease subtypes from matched multi-omics data.

Workflow Overview:

- Data Acquisition and Preprocessing

  - Obtain matched multi-omics data (e.g., genomics, transcriptomics, epigenomics) from relevant repositories

  - Perform platform-specific quality control and normalization

  - Address batch effects using tools like ComBat [11]

  - Impute missing values using appropriate methods (e.g., mean imputation for MCAR data, LLQ/√2 for values below detection limits) [11]

- Feature Selection and Data Transformation

  - Filter features based on quality metrics and variance

  - Normalize data to make features comparable across platforms

  - Reduce dimensionality using principal component analysis or other embedding techniques

- Integrative Clustering

- Clinical Validation and Biomarker Identification

  - Associate clusters with clinical outcomes

  - Identify differentially expressed features across clusters

  - Perform pathway enrichment analysis to interpret biological significance

Figure: Multi-omics Patient Stratification Workflow
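The feature selection and transformation stage of Protocol 1 can be illustrated with a short sketch that filters features by variance and then z-scores the survivors so that platforms with different measurement scales become comparable. The function names, the variance threshold, and the gene labels are hypothetical.

```python
from statistics import mean, pstdev, pvariance


def filter_by_variance(matrix, feature_names, min_variance):
    """Drop features (columns) whose variance across samples is too low."""
    columns = list(zip(*matrix))
    keep = [k for k, col in enumerate(columns) if pvariance(col) >= min_variance]
    filtered = [[row[k] for k in keep] for row in matrix]
    return filtered, [feature_names[k] for k in keep]


def zscore_columns(matrix):
    """Scale every feature to zero mean and unit variance across samples."""
    scaled_columns = []
    for col in zip(*matrix):
        mu, sigma = mean(col), pstdev(col)
        scaled_columns.append([(v - mu) / sigma if sigma else 0.0 for v in col])
    # Transpose back to a samples x features layout
    return [list(row) for row in zip(*scaled_columns)]


# Hypothetical samples x features matrix: three patients, three features
expression = [
    [5.0, 1.0, 10.0],
    [5.0, 3.0, 20.0],
    [5.0, 5.0, 30.0],
]
names = ["GENE_A", "GENE_B", "GENE_C"]

filtered, kept_names = filter_by_variance(expression, names, min_variance=0.5)
scaled = zscore_columns(filtered)
print(kept_names)  # GENE_A is constant across patients and is removed
```

After scaling, GENE_B and GENE_C carry identical values in this toy example even though their raw ranges differ tenfold, which is the point of the normalization step.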

Protocol 2: Deep Learning-Based Predictive Modeling

Application: Predicting clinical outcomes (e.g., drug response, survival) from multi-omics data.

Workflow Overview:

- Data Preparation

  - Collect multi-omics data with associated clinical outcomes

  - Split data into training, validation, and test sets (typically 70%/15%/15%)

  - Perform feature selection specific to each omics type

- Model Configuration and Training

  - Select appropriate architecture (fully connected, graph-convolutional encoders)

  - Configure supervision heads based on task (classification, regression, survival)

  - Implement hyperparameter optimization

  - Train model with appropriate validation metrics

- Model Evaluation and Interpretation

  - Assess performance on test set using task-specific metrics

  - Perform ablation studies to determine contribution of each omics type

  - Identify important features contributing to predictions

  - Validate on external datasets when available

Figure: Deep Learning Multi-task Prediction Architecture
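The data split in Protocol 2 is easiest to get right when it is done once, at the patient level, before any omics matrix is touched, so that all data types for a given patient land in the same partition. The sketch below assumes the 70%/15%/15% proportions given in the protocol; the function name, the seed, and the patient identifiers are hypothetical.

```python
import random


def split_patients(patient_ids, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle patient identifiers and cut them into train/validation/test."""
    ids = list(patient_ids)
    # A dedicated generator keeps the split reproducible for a given seed
    random.Random(seed).shuffle(ids)
    n_train = int(round(train_frac * len(ids)))
    n_val = int(round(val_frac * len(ids)))
    train = ids[:n_train]
    validation = ids[n_train:n_train + n_val]
    test = ids[n_train + n_val:]  # whatever remains
    return train, validation, test


# Hypothetical cohort of 100 patients
cohort = [f"patient_{k:03d}" for k in range(100)]
train_ids, val_ids, test_ids = split_patients(cohort)
print(len(train_ids), len(val_ids), len(test_ids))  # 70 15 15
```

Each omics table is then subset by these identifier lists, which avoids leakage between partitions when several data types describe the same patient.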

Table 3: Essential Computational Tools for Multi-Omics Integration

| Tool/Resource | Category | Function | Access |

|---|---|---|---|

| Flexynesis [19] | Deep Learning Toolkit | Bulk multi-omics integration for classification, regression, survival analysis | PyPI, Guix, Bioconda, Galaxy Server |

| ClustAll [21] | R Package | Patient stratification from clinical and omics data, handles missing values | Bioconductor |

| MOFA+ [20] | Factor Analysis | Identifies latent factors across multiple omics views | R/Python Package |

| Seurat [20] | Integration Toolkit | Weighted nearest-neighbor integration for single-cell multi-omics | R Package |

| GLUE [20] | Graph Variational Autoencoder | Integrates unmatched omics data using prior knowledge | Python Package |

| TCGA [18] | Data Repository | Comprehensive multi-omics data for various cancer types | Online Portal |

| CCLE [18] | Data Repository | Multi-omics profiles of cancer cell lines with drug response | Online Portal |

Application Case Studies in Precision Oncology

Predicting Microsatellite Instability Status

Microsatellite instability (MSI) is a critical biomarker for immunotherapy response in cancer. Using Flexynesis, researchers demonstrated that MSI status can be predicted with high accuracy (AUC = 0.981) from gene expression and promoter methylation profiles alone, without requiring mutation data [19]. This approach enables MSI classification for samples with transcriptomic but no genomic sequencing data, expanding potential clinical applications.

Protocol Details:

- Data: TCGA pan-gastrointestinal and gynecological cancer samples

- Input Features: Gene expression and DNA methylation data

- Model Architecture: Fully connected encoders with classification supervisor

- Validation: Cross-validation and benchmarking against other architectures
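The AUC reported for this classifier has a direct interpretation: it is the probability that a randomly chosen MSI-positive sample receives a higher predicted score than a randomly chosen negative one. The sketch below computes it that way from first principles. The labels and scores are hypothetical and are not taken from the Flexynesis study.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical MSI labels (1 = MSI-high) and model scores for seven samples
msi_labels = [1, 1, 1, 0, 0, 0, 0]
msi_scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1]

auc = roc_auc(msi_labels, msi_scores)
print(round(auc, 4))  # 11 of 12 positive/negative pairs are ordered correctly
```

The pairwise loop is quadratic in sample count, which is fine for illustration; rank-based formulas give the same value more efficiently on large cohorts.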

Survival Risk Stratification in Glioma

Integrative analysis of lower grade glioma (LGG) and glioblastoma multiforme (GBM) patient samples enabled stratification of patients into distinct risk groups based on multi-omics profiles [19]. The model successfully separated test samples by median risk score, with significant separation in Kaplan-Meier survival curves.

Protocol Details:

- Data: TCGA LGG and GBM samples with survival endpoints

- Model: Cox Proportional Hazards loss function

- Training: 70% of samples for training, 30% for testing

- Output: Patient-specific risk scores for stratification
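The two ingredients of this case study, a Cox proportional hazards loss and a median split of the predicted risk scores, can be sketched as follows. This is an illustration under stated assumptions rather than the Flexynesis implementation: it uses the negative log partial likelihood without special handling of tied event times and averages over observed events. The function names and the four-patient data are hypothetical.

```python
import math
from statistics import median


def cox_negative_log_partial_likelihood(risk_scores, times, events):
    """Average negative log partial likelihood over observed events."""
    loss = 0.0
    n_events = 0
    for i, (t_i, event_i) in enumerate(zip(times, events)):
        if not event_i:
            continue  # censored patients contribute only through risk sets
        # Risk set: everyone still under observation at time t_i
        risk_set_sum = sum(
            math.exp(r) for r, t in zip(risk_scores, times) if t >= t_i
        )
        loss -= risk_scores[i] - math.log(risk_set_sum)
        n_events += 1
    return loss / n_events if n_events else 0.0


def split_by_median(risk_scores):
    """Label each patient high or low risk relative to the median score."""
    cutoff = median(risk_scores)
    return ["high" if r > cutoff else "low" for r in risk_scores]


# Hypothetical follow-up times (months) and event indicators (1 = death)
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]

concordant = [2.0, 1.0, 0.0, -1.0]   # higher risk for earlier events
discordant = [-1.0, 0.0, 1.0, 2.0]   # ordering reversed

good = cox_negative_log_partial_likelihood(concordant, times, events)
bad = cox_negative_log_partial_likelihood(discordant, times, events)
print(good < bad, split_by_median(concordant))
```

A model whose scores rank patients in the order they experience events obtains the lower loss, and the median split then yields the two groups that would be compared with Kaplan-Meier curves.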

Challenges and Future Directions

Despite significant advances, multi-omics integration faces several challenges. Data heterogeneity, missing modalities, and computational complexity remain substantial hurdles [20]. The disconnect between different biological layers (for instance, when high gene expression does not correlate with protein abundance) complicates integration efforts [20]. Furthermore, clinical implementation requires robust validation and standardization.

Future directions include:

- Development of single-cell multi-omics integration methods

- Incorporation of spatial omics data for tissue context

- Improved interpretability of deep learning models

- Standardization of benchmarking practices

- Integration of electronic health records and environmental factors

Emerging approaches like transfer learning and bridge integration show promise for addressing these challenges, particularly for integrating datasets with partial overlap [20]. As technologies evolve and datasets expand, multi-omics integration will increasingly enable truly personalized approaches to complex disease management and treatment.

The contemporary healthcare landscape is undergoing a profound transformation, shifting from a reactive, disease-centric model to a proactive, wellness-oriented approach known as P4 medicine. This paradigm, championed by pioneers like Leroy Hood, is defined by its four core pillars: Predictive, Preventive, Personalized, and Participatory medicine [22] [23] [24]. P4 medicine represents the application of systems biology to human health, leveraging high-throughput technologies and advanced computational tools to create a holistic, data-driven understanding of individual wellness and disease [23]. Rather than merely treating illness after it manifests, P4 medicine focuses on predicting health risks, preventing disease onset, tailoring interventions to individual biological characteristics, and actively engaging patients in their health management [22].

A central consequence and enabler of this new medical model is the critical need for complex disease stratification. The traditional classification of diseases based on symptomatic presentation is insufficient for P4 medicine's goals. Instead, diseases must be reclassified into distinct molecular subtypes or endotypes based on their underlying causative mechanisms, a process essential for matching the right prevention strategy or therapy to the right patient [11] [23]. This stratification is powered by the integration of multilevel biological data, or multi-omics datasets, which capture information from genomics, transcriptomics, proteomics, and metabolomics, combined with clinical and environmental data [11] [10]. The ensuing sections will detail how each pillar of P4 medicine necessitates and benefits from sophisticated stratification approaches, and will provide a detailed experimental protocol for achieving this stratification in a research setting.

The Four Pillars of P4 Medicine and Their Stratification Imperatives

Predictive Medicine: Forecasting Health Trajectories

Predictive medicine utilizes advanced data analytics, including machine learning and artificial intelligence (AI), to anticipate disease onset and progression long before clinical symptoms appear [22] [25]. This proactive approach relies on the analysis of dense, dynamic personal data clouds that surround each individual, comprising billions of data points from genetic, molecular, clinical, and lifestyle sources [23] [26]. The predictive power of these models hinges on the identification of early-warning signals or biomarkers that indicate a perturbation in the biological networks that maintain health [22] [24].

- Stratification Need: To accurately predict an individual's health risks, the population must first be stratified into subgroups sharing common risk factors, genetic predispositions, or early molecular signatures. For instance, family genomics can stratify individuals to more readily identify disease-associated genes [24]. Predictive models are not one-size-fits-all; they must be built and validated on well-defined patient subgroups to achieve clinical utility. AI-driven health interventions for public health surveillance, including disease outbreak prediction and patient morbidity risk assessment, fundamentally depend on the initial stratification of populations and diseases into meaningful categories [22].

Preventive Medicine: Intervening Before Disease Onset

Preventive medicine within the P4 context aims to leverage predictive insights to implement targeted interventions that reduce disease risk and promote wellness [25]. This moves beyond generic health advice to highly specific actions tailored to an individual's stratified risk profile. Examples range from personalized vaccination strategies to preemptive drug therapies or lifestyle modifications designed to counteract a predicted pathological trajectory [22] [23].

- Stratification Need: Effective prevention requires adherence to the principles of population screening [26]. Stratification is the key to ensuring that preventive resources are allocated efficiently and effectively. It identifies which subpopulations stand to benefit most from a particular intervention and which may be subjected to unnecessary risk or cost. For example, stratifying common complex diseases like breast cancer into subtypes based on biomarkers enables the development of targeted preventive therapies for high-risk groups, thereby avoiding the costs and side effects of broad-spectrum interventions [23] [26].

Personalized Medicine: Tailoring Diagnostic and Therapeutic Strategies

Personalized medicine, often used interchangeably with precision medicine, involves customizing healthcare to the individual patient. This entails considering a person's unique genetic, environmental, and lifestyle factors when making diagnostic and therapeutic decisions [25]. The goal is to move away from the "average patient" model and instead provide the right treatment, at the right time, for the right person.

- Stratification Need: Personalization is impossible without prior stratification. Disease stratification is the process of dividing a condition, such as ovarian cystadenocarcinoma or Crohn's disease, into clinically relevant molecular subtypes [11] [23]. This allows for a precise "impedance match" between a patient's specific disease variant and the most effective drug [24]. Similarly, patient stratification groups individuals based on their likely response to an environmental challenge, such as a specific drug or toxin [24]. This dual stratification—of both disease and patient—is the cornerstone of truly personalized care, ensuring that interventions are not just customized but are also predictably effective.

Participatory Medicine: Engaging the Informed Individual

Participatory medicine acknowledges the patient as an active, informed partner in their own health management [25]. It is fueled by the digital revolution, which provides consumers with access to their health data, online information, and social networks [23]. This pillar empowers individuals to make lifestyle decisions based on personalized data and contributes to the collective knowledge pool through shared information.

- Stratification Need: The success of participatory medicine relies on a strong public health framework that can assess population needs, develop sound policies, and assure access to services [26]. From a data perspective, participatory medicine generates vast amounts of real-world data from mobile health applications and wearable devices. To be useful, this "big data" must be stratified and analyzed to extract meaningful, actionable insights for both individuals and populations. Furthermore, digital health initiatives must be designed to avoid amplifying healthcare disparities, ensuring that stratification does not lead to the exclusion of vulnerable groups who may have less access to technology [22] [26].

Table 1: The Core Pillars of P4 Medicine and Their Stratification Requirements

| Pillar | Core Objective | Required Stratification Type | Key Data Sources |
|---|---|---|---|
| Predictive | Anticipate disease risk and onset | Risk-based stratification | Genomic data, biomarker panels, clinical history, environmental exposure data |
| Preventive | Implement targeted interventions to maintain wellness | Intervention-response stratification | Multi-omics data for early signatures, lifestyle data, family history |
| Personalized | Tailor therapies to individual biology | Disease endotyping, patient subgrouping | Tumor genomics, pharmacogenomic data, proteomic and metabolomic profiles |
| Participatory | Engage patients as active partners in health | Consumer segmentation, digital phenotyping | Patient-reported outcomes, data from wearables and mobile apps, social network data |

A Computational Framework for Complex Disease Stratification

To operationalize the P4 vision, researchers require robust, standardized methods for stratifying complex diseases from large-scale, multilevel datasets. The following section outlines a comprehensive computational framework for this purpose, adapted from established methodologies [11] [10] [21].

This protocol provides a step-by-step guide for generating single and multi-omics signatures of disease states to identify potential patient clusters. The framework is divided into four major steps: dataset subsetting, feature filtering, omics-based clustering, and biomarker identification [11] [10]. The process enables the generation of predictive models of patient outcomes and facilitates the implementation of translational P4 medicine.

Materials and Reagents

Table 2: Research Reagent Solutions for Multi-Omics Stratification Analysis

| Item | Function / Description | Example Tools / Platforms |
|---|---|---|
| Multi-Omic Datasets | Integrated biological data from various molecular levels (e.g., genome, transcriptome, proteome). | The Cancer Genome Atlas (TCGA), UK Biobank, in-house generated datasets. |
| Bioinformatics Software | Platform for statistical computing, graphics, and data analysis. | R environment (>=4.2), Bioconductor packages. |
| Stratification Package | Specialized tool for unsupervised patient stratification from complex clinical data. | ClustAll R package [21]. |
| Batch Correction and Imputation Tools | Correct technical batch effects and handle missing data points, both of which are common in biological studies. | ComBat (batch effect correction) [11], MLE-based imputation methods. |
| Pathway Analysis Database | Contextualizes signatures with existing biological knowledge. | STRING database, KEGG, Gene Ontology (GO) [11]. |

Experimental Procedure

Step 1: Data Preparation and Quality Control

- Data Collection: Assemble multiple large-scale datasets, including clinical information and multi-omics data (e.g., genomic, transcriptomic, proteomic).

- Platform-specific QC and Normalization: Perform initial quality control and normalization according to the standards for each technological platform (e.g., RNA-seq, mass spectrometry) [11].

- Batch Effect Correction: Assess and correct for technical biases (e.g., different reagent lots, processing dates) using tools like ComBat [11].

- Missing Data Handling: Critically appraise and handle missing data. For data missing completely at random (MCAR), use imputation (e.g., mean, nearest neighbours) or deletion. For values below the lower limit of quantitation (LLQ), impute to LLQ/√2 or use maximum likelihood estimation [11].

- Outlier Detection: Identify and flag technical outliers for potential removal, while retaining biological outliers for downstream analysis.
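The two simplest missing-data rules from Step 1 can be sketched in a few lines. This is a minimal illustration rather than the protocol's reference implementation: the function names, the example concentrations, and the convention that missing values are stored as `None` and sub-LLQ values as ordinary numbers are all assumptions made for the example.

```python
import math
from statistics import mean
from typing import List, Optional, Sequence


def impute_below_llq(values: Sequence[Optional[float]], llq: float) -> List[Optional[float]]:
    """Replace measured values below the LLQ with LLQ / sqrt(2).

    Missing entries (None) are left untouched so they can be handled separately.
    """
    substitute = llq / math.sqrt(2)
    return [substitute if (v is not None and v < llq) else v for v in values]


def impute_mean(values: Sequence[Optional[float]]) -> List[float]:
    """Replace missing entries (None) with the mean of the observed values.

    Mean imputation is only defensible when data are missing completely at random.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a feature with no observed values")
    fill = mean(observed)
    return [fill if v is None else v for v in values]


# Hypothetical metabolite concentrations for five samples; None marks a missing value.
raw = [0.2, 1.5, None, 3.0, 0.1]
censored_fixed = impute_below_llq(raw, llq=0.5)  # 0.2 and 0.1 become 0.5 / sqrt(2)
complete = impute_mean(censored_fixed)           # the None entry becomes the feature mean
```

Applying the LLQ rule before mean imputation keeps the censored values from dragging the imputed mean below the quantitation limit. For larger fractions of censored data, the maximum likelihood approach mentioned above is generally preferable to a fixed substitution.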

Step 2: Dataset Subsetting and Feature Filtering

- Define Analysis Cohorts: Subset the dataset based on relevant clinical phenotypes or experimental conditions pertinent to the research question.

- Feature Filtering: Apply statistical filters to reduce data dimensionality and identify relevant molecular features. The selection of methods is crucial and should be based on the data type and study design [11].
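The protocol does not prescribe a specific filter, so the sketch below uses one common choice, a variance filter that keeps the most variable features across samples. The function name, the gene labels, and the expression values are hypothetical, and other filters (e.g., differential expression against a control cohort) may suit a given study design better.

```python
from statistics import pvariance
from typing import Dict, List


def top_variance_features(matrix: Dict[str, List[float]], keep: int) -> List[str]:
    """Return the names of the `keep` features with the highest variance across samples.

    `matrix` maps each feature name to its values across samples.
    """
    ranked = sorted(matrix, key=lambda name: pvariance(matrix[name]), reverse=True)
    return ranked[:keep]


# Hypothetical normalized expression values for three genes in four samples.
expression = {
    "GENE_A": [5.0, 5.1, 4.9, 5.0],  # nearly constant, carries little signal
    "GENE_B": [1.0, 8.0, 2.0, 9.0],  # strongly variable
    "GENE_C": [3.0, 3.5, 2.5, 3.0],  # moderately variable
}
selected = top_variance_features(expression, keep=2)
```

Variance filtering is unsupervised, so it does not use the clinical labels and avoids leaking outcome information into the clustering step that follows.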

Step 3: Omics-Based Clustering and Stratification

This core step can be executed using a specialized package like ClustAll, which is designed to handle mixed data types, missing values, and collinearity [21].

- Object Creation: Create a `ClustAllObject` using the `createClustAll` function, inputting a data frame or matrix of clinical and omics data.

- Data Complexity Reduction (DCR): Run the DCR step to create multiple data embeddings. This process uses a dendrogram to group highly correlated variables and replaces them with lower-dimension projections from Principal Component Analysis (PCA) for each depth in the dendrogram [21].

- Stratification Process (SP): Execute the SP to calculate stratifications for each embedding. This involves testing feasible combinations of embeddings, dissimilarity metrics, and clustering methods across a predefined range of cluster numbers (e.g., 2 to 6). The optimal number of clusters is determined using internal validation measures [21].

- Robustness Assessment: Evaluate the robustness of each stratification using two criteria:

  - Population-based robustness: Assess stability through bootstrapping.

  - Parameter-based robustness: Assess stability under variations in parameters (e.g., dissimilarity metric, clustering method) [21].
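Both robustness criteria come down to asking how similar two stratifications of the same patients are. One simple way to quantify this is a pairwise Jaccard index over co-clustered patient pairs, sketched below. This is an illustrative measure under stated assumptions, not ClustAll's internal computation, and the function name and label vectors are hypothetical.

```python
from itertools import combinations
from typing import Hashable, Sequence


def pairwise_jaccard(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Jaccard index between two stratifications of the same patients.

    Counts patient pairs placed in the same cluster by both stratifications,
    divided by pairs placed together by at least one of them. The measure is
    invariant to how the clusters are named.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("both stratifications must cover the same patients")
    both = either = 0
    for i, j in combinations(range(len(labels_a)), 2):
        same_a = labels_a[i] == labels_a[j]
        same_b = labels_b[i] == labels_b[j]
        if same_a and same_b:
            both += 1
        if same_a or same_b:
            either += 1
    # If no pair is co-clustered in either stratification, they agree trivially.
    return both / either if either else 1.0


# Hypothetical cluster labels for six patients.
reference = [0, 0, 0, 1, 1, 1]
relabelled = [1, 1, 1, 0, 0, 0]  # same partition, different cluster names
perturbed = [0, 0, 1, 1, 1, 1]   # one patient moved to the other cluster

identical_score = pairwise_jaccard(reference, relabelled)
perturbed_score = pairwise_jaccard(reference, perturbed)
```

For population-based robustness, the same function could be applied between the original stratification and each bootstrap re-clustering, restricted to the patients present in both. For parameter-based robustness, it could be applied between stratifications obtained with different dissimilarity metrics or clustering methods.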

Step 4: Biomarker Identification and Model Validation

- Biomarker Extraction: For the robust stratifications identified, extract the key molecular features (e.g., genes, proteins, metabolites) that define each cluster.

- Annotation and Pathway Analysis: Annotate these biomarkers and perform pathway or enrichment analysis to interpret the biological relevance of the identified patient subgroups [11].

- Predictive Model Generation: Use the stratification outcomes to generate predictive models of patient outcomes, such as survival or treatment response.

- External Validation: Validate the stratification model and its associated biomarkers using an independent, external dataset.
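The pathway analysis in Step 4 is often framed as an over-representation test: given the biomarkers that define a cluster, is a pathway hit more often than expected by chance? A minimal sketch using the hypergeometric tail probability follows. The function name, gene identifiers, and set sizes are hypothetical, and a real analysis would draw pathway definitions from resources such as KEGG or GO and correct for multiple testing across pathways.

```python
from math import comb


def enrichment_p_value(universe: int, pathway: int, selected: int, overlap: int) -> float:
    """One-sided hypergeometric p-value for pathway over-representation.

    Returns P(X >= overlap), where X is the number of pathway members found when
    `selected` features are drawn without replacement from `universe` features,
    of which `pathway` belong to the pathway.
    """
    upper = min(pathway, selected)
    total = comb(universe, selected)
    tail = sum(
        comb(pathway, k) * comb(universe - pathway, selected - k)
        for k in range(overlap, upper + 1)
    )
    return tail / total


# Hypothetical example: 20 measured genes, a 5-gene pathway, 4 cluster biomarkers.
background = {f"G{i}" for i in range(1, 21)}
pathway_genes = {"G1", "G2", "G3", "G4", "G5"}
cluster_markers = {"G1", "G2", "G3", "G17"}

p_value = enrichment_p_value(
    universe=len(background),
    pathway=len(pathway_genes),
    selected=len(cluster_markers),
    overlap=len(cluster_markers & pathway_genes),
)
```

The background set should contain only features that were actually measured and passed filtering. Using the whole genome as the universe tends to inflate enrichment significance.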

Workflow Visualization

The following diagram illustrates the logical flow and decision points within the computational stratification framework.

The P4 medicine paradigm, with its focus on prediction, prevention, personalization, and participation, is fundamentally reshaping the future of healthcare. As this article has demonstrated, the successful implementation of this new model is intrinsically linked to the ability to perform sophisticated complex disease stratification. The integration of multi-omics big data with advanced computational frameworks, such as the one detailed herein, allows researchers to deconstruct heterogeneous diseases into mechanistically distinct subtypes. This stratification is the critical bridge that connects the vast, personalized data clouds of individuals to actionable clinical decisions, enabling the matching of precise interventions to specific patient profiles. As these tools and methods continue to mature and become integrated into clinical practice, they will unlock the full potential of P4 medicine: to make healthcare more proactive, cost-effective, and focused on optimizing wellness for each individual.

The stratification of complex diseases represents a cornerstone of modern precision medicine. Conventional classifications, based solely on clinical phenotypes, often fail to capture the underlying molecular diversity, limiting therapeutic precision and patient outcomes [27]. Integrative multi-omics approaches—encompassing genomics, transcriptomics, proteomics, metabolomics, and clinical phenotyping—have emerged as a powerful paradigm to redefine disease mechanisms. By integrating high-dimensional molecular data, these approaches enable the identification of disease endotypes, biomarker discovery, and patient stratification, ultimately facilitating the development of personalized therapeutic strategies [11] [27].