Beyond Single Markers: How Systems Biology is Revolutionizing Biomarker Discovery and Precision Medicine

This article explores the paradigm shift from traditional reductionist biomarker approaches to holistic systems biology strategies in biomedical research and drug development.

Beyond Single Markers: How Systems Biology is Revolutionizing Biomarker Discovery and Precision Medicine

Abstract

This article explores the paradigm shift from traditional reductionist biomarker approaches to holistic systems biology strategies in biomedical research and drug development. It examines the foundational principles of both methodologies, detailing how systems biology integrates multi-omics data, computational modeling, and network analysis to decipher complex disease mechanisms. The content covers practical applications in areas from stem cell therapy to neurology and oncology, addresses key challenges in implementation, and provides a comparative validation of how this integrative framework enhances biomarker identification, patient stratification, and therapeutic development. Aimed at researchers and drug development professionals, this analysis synthesizes current evidence to illustrate how systems-level thinking is overcoming the limitations of single-target hypotheses for complex diseases.

From Isolated Parts to Interacting Networks: Core Principles of Reductionist vs. Systems Approaches

Table of Contents

- Philosophical and Methodological Foundations

- Comparative Analysis: Performance and Applications

- Experimental Protocols in Practice

- Visualizing the Workflows

- The Scientist's Toolkit: Essential Research Reagents

Philosophical and Methodological Foundations

The pursuit of biological knowledge and therapeutic breakthroughs is guided by two dominant paradigms: reductionism and systems holism. The reductionist approach, a long-standing cornerstone of biological research, operates on the principle that complex systems can be understood by isolating and studying their individual components, such as a single gene, protein, or pathway [1]. This methodology has been instrumental in identifying specific molecular players in disease. In contrast, systems biology is an interdisciplinary field that posits that the properties of a biological system cannot be fully understood by the study of its parts in isolation [1]. It argues that complexity arises from the dynamic networks of interactions between these components, and it applies computational and mathematical methods to study these complex interactions as integrated wholes [1].

The evolution of these fields is closely tied to technological advancements. Reductionist methods often rely on targeted assays, such as PCR for gene expression or ELISA for protein quantification, which focus on a single data type. Systems biology, however, is powered by high-throughput multi-omics technologies—including genomics, transcriptomics, proteomics, and metabolomics—that generate massive, multidimensional datasets [1] [2] [3]. The inherent complexity of human biological systems and multifactorial diseases like cancer and Alzheimer's has revealed the limitations of a purely reductionist, "single-target" approach, which often proves inadequate for achieving sufficient efficacy in the clinic [1]. This has driven the emergence of systems biology as a novel, innovative tool to tackle complex disease mechanisms and optimize drug discovery and development [1].

Comparative Analysis: Performance and Applications

The choice between reductionist and systems biology paradigms has profound implications for research outcomes, particularly in biomarker discovery and drug development. The table below summarizes a comparative analysis of the two approaches based on key performance indicators.

Table 1: Comparative Performance of Reductionist and Systems Biology Approaches

| Aspect | Reductionist Approach | Systems Biology Approach |

|---|---|---|

| Core Philosophy | Isolate and study single entities (e.g., a gene, protein) to understand the whole [1]. | Study the system as an integrated network of interacting components [1]. |

| Typical Data Type | Single-omics or targeted assays (e.g., PCR, ELISA) [2]. | Multi-omics (genomics, proteomics, metabolomics) and imaging data [2] [3]. |

| Handling of Complexity | Limited ability to capture multifaceted biological networks [2]. | Designed to address complexity and emergent properties of systems [1]. |

| Biomarker Discovery | Focus on single molecular features; faces challenges with reproducibility and predictive accuracy in complex diseases [2]. | Integrates diverse data to identify reliable, multi-component biomarker signatures; enables disease endotyping [2]. |

| Drug Development | "Single-target" drug development; less effective for complex diseases, leading to high clinical trial failure rates [1]. | Identifies combination therapies; matches right mechanism, dose, and patient population to increase probability of success [1]. |

| Key Strength | High precision for well-defined, single-factor problems; simpler experimental validation. | Superior for modeling complex, multifactorial diseases and predicting system-level responses [1]. |

| Primary Limitation | Inadequate for diseases driven by network dysregulation; higher risk of translational failure [1]. | Requires sophisticated computational infrastructure and expertise; challenges with model interpretability and uncertainty [2] [4]. |

Experimental Protocols in Practice

To illustrate these paradigms in action, below are generalized protocols for a typical biomarker discovery pipeline using each approach.

Protocol 1: Reductionist Approach for a Single-Protein Biomarker

This protocol aims to identify and validate a single protein biomarker, such as P-tau217 for Alzheimer's disease, from blood samples [5].

- Sample Collection and Processing: Collect blood plasma samples from clinically characterized cohorts (e.g., patients with cognitive impairment and healthy controls). Process blood to isolate plasma and aliquot for storage at -80°C.

- Targeted Assay (Simulated ELISA):

- Coating: Coat a 96-well plate with a capture antibody specific to the target protein (e.g., P-tau217).

- Blocking: Block remaining binding sites with a non-reactive protein (e.g., BSA).

- Sample Incubation: Add plasma samples and standards of known concentration to the wells. Incubate to allow the target antigen to bind the capture antibody.

- Detection Antibody Incubation: Add a detection antibody specific to a different epitope of the target protein. This antibody is conjugated to an enzyme (e.g., Horseradish Peroxidase).

- Signal Development: Add an enzyme substrate that produces a colorimetric or chemiluminescent signal proportional to the amount of target protein present.

- Data Acquisition: Measure the signal intensity using a plate reader.

- Data Analysis: Generate a standard curve from the known standards and calculate the concentration of the target protein in each unknown sample. Use statistical tests (e.g., t-test) to compare protein levels between patient and control groups.

Protocol 2: Systems Biology Approach for a Multi-Omics Biomarker Signature

This protocol leverages high-throughput technologies and machine learning to discover a composite biomarker signature from the same set of samples [1] [2] [3].

- Sample Collection and Multi-Omics Profiling:

- From a single aliquot of each plasma sample, perform parallel high-throughput molecular profiling:

- Genomics: Isolate DNA and perform whole-genome or exome sequencing to identify genetic variants.

- Transcriptomics: Isolate RNA from blood cells and perform RNA sequencing (RNA-seq) to quantify gene expression.

- Proteomics: Use mass spectrometry to quantify the levels of thousands of proteins.

- Metabolomics: Use mass spectrometry or NMR to profile small-molecule metabolites.

- From a single aliquot of each plasma sample, perform parallel high-throughput molecular profiling:

- Data Preprocessing and Integration:

- Quality Control: Process raw data from each platform using platform-specific pipelines (e.g., alignment for sequencing, peak identification for mass spec) to generate quantitative matrices.

- Normalization: Normalize data within each platform to correct for technical variance.

- Data Integration: Use computational methods to combine the different omics datasets into a unified data structure for each sample.

- Machine Learning-Based Biomarker Identification:

- Feature Selection: Apply feature selection algorithms (e.g., LASSO) to the integrated multi-omics data to identify a minimal set of genes, proteins, and metabolites that best predict the clinical outcome (e.g., disease state) [2].

- Model Training: Train a supervised machine learning model (e.g., Random Forest or Support Vector Machine) using the selected features on a training subset of the data [2].

- Model Validation: Test the trained model's performance on a held-out validation cohort to assess its predictive accuracy and generalizability.

- Systems-Level Validation (Optional): Place the identified biomarker signature into the context of known biological pathways (e.g., KEGG, Reactome) using pathway enrichment analysis to interpret the functional relevance of the findings.

Visualizing the Workflows

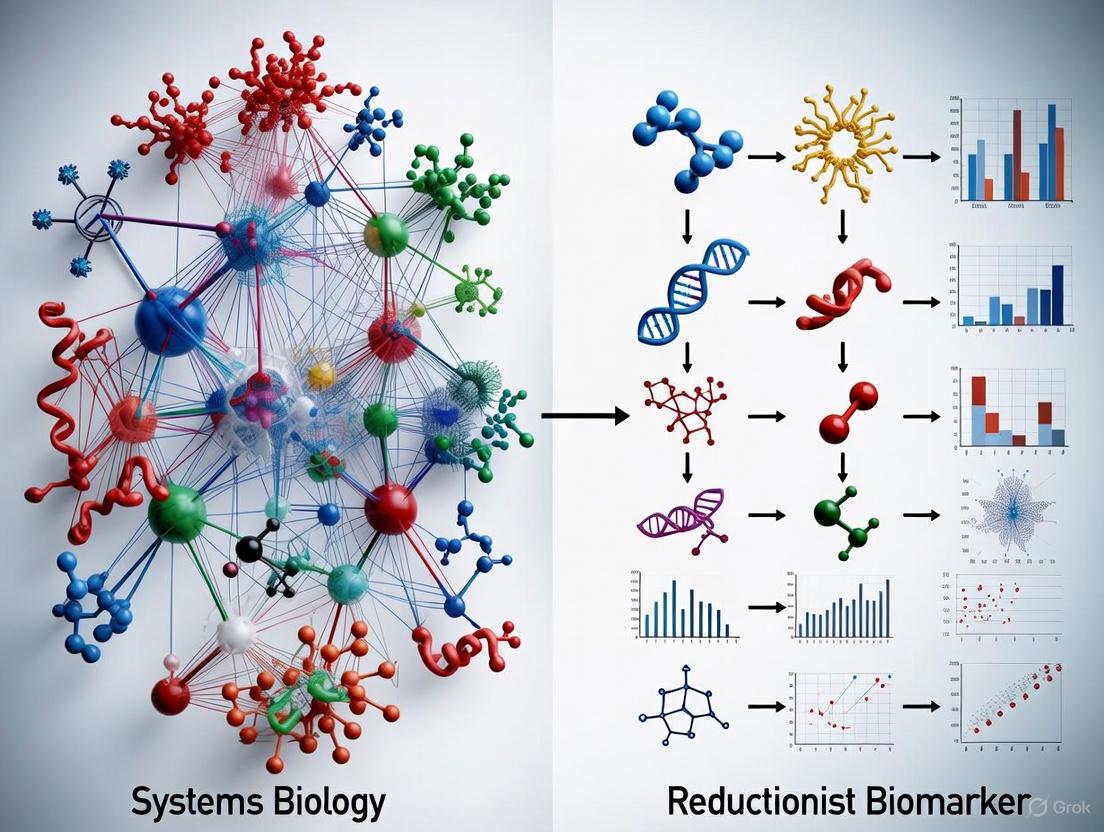

The fundamental difference in logic and workflow between the two paradigms can be visualized as a linear path versus an integrative network.

Reductionist Research Workflow

Systems Biology Research Workflow

The Scientist's Toolkit: Essential Research Reagents

The execution of these experimental protocols relies on a specific set of reagents and platforms. The following table details key solutions for both methodological paths.

Table 2: Essential Research Reagent Solutions for Biomarker Discovery

| Reagent / Platform | Function | Commonly Used In |

|---|---|---|

| ELISA Kits | Quantifies the concentration of a specific target protein in a solution using enzyme-linked antibodies. | Reductionist Approach [5] |

| PCR & qRT-PCR Assays | Amplifies and quantifies specific DNA or RNA sequences from a sample. | Reductionist Approach |

| Next-Generation Sequencing (NGS) | High-throughput technology for determining the sequence of DNA (genomics) or RNA (transcriptomics) [2]. | Systems Biology Approach |

| Mass Spectrometer | High-sensitivity instrument that identifies and quantifies proteins (proteomics) and metabolites (metabolomics) in a sample [1] [2]. | Systems Biology Approach |

| Spatial Biology Platforms | Enables in-situ analysis of gene expression (spatial transcriptomics) and protein multiplexing, preserving the tissue's spatial architecture [6] [3]. | Systems Biology Approach |

| AI/ML Software (e.g., R, Python scikit-learn) | Provides algorithms for integrating multi-omics data, performing feature selection, and training predictive models [2] [7]. | Systems Biology Approach |

| Human Organoids | 3D cell cultures that mimic human tissue architecture and function, used for functional validation of biomarkers in a human-relevant context [3]. | Both (Advanced Validation) |

The field of biomarker discovery has been fundamentally shaped by a reductionist approach that dominated biological research for decades. This paradigm operates on the principle that complex biological systems are best understood by breaking them down into their constituent parts and studying each component in isolation. In the context of biomarkers, this translated to a research model focused on identifying single, discrete biological indicators—a "one mutation, one target, one test" methodology [6]. This single-target framework produced remarkable successes, particularly in the late 20th century, establishing biomarkers as valuable tools for understanding disease mechanisms, identifying drug targets, and monitoring therapeutic responses [7].

The historical preference for single-target discovery was not merely philosophical but largely technology-driven. Research teams were constrained by the tools available: low-throughput assays, limited computational power, and biochemical methods that excelled at measuring individual analytes rather than complex molecular networks. These methods included PCR for specific genetic mutations, ELISA for individual protein biomarkers, and immunohistochemistry for protein expression patterns in tissues [8] [3]. The success of this approach is evidenced by foundational biomarkers such as HER2 for breast cancer stratification and PSA for prostate cancer detection, which revolutionized diagnostic and treatment paradigms in their respective fields [9].

However, as biomedical research has advanced, the inherent limitations of this single-target approach have become increasingly apparent. Complex diseases like cancer, autoimmune disorders, and neurological conditions seldom arise from dysfunction in a single biological pathway but rather emerge from dysregulated networks of molecular interactions [10] [11]. This recognition, coupled with technological advances enabling measurement of thousands of molecular features simultaneously, has prompted a fundamental shift toward systems biology approaches that embrace rather than reduce biological complexity [8] [10].

Historical Successes of Single-Target Biomarker Discovery

Foundational Discoveries and Clinical Impact

The single-target biomarker approach has yielded numerous critical discoveries that formed the foundation of modern diagnostic medicine. These biomarkers provided the first objective measures for disease detection, risk stratification, and treatment monitoring, moving medical practice beyond reliance on subjective symptoms alone. The most impactful successes came from oncology, where biomarkers like carcinoembryonic antigen (CEA) and alpha-fetoprotein (AFP) established in the 1970s provided the first measurable indicators of tumor presence and burden [9]. These discoveries demonstrated that molecular signatures could offer clinically valuable information about disease state, paving the way for more personalized approaches to cancer management.

The paradigm further evolved with the development of predictive biomarkers that could forecast response to specific therapies. The landmark discovery of HER2 overexpression in a subset of breast cancers and its correlation with dramatic response to HER2-targeted therapies like trastuzumab exemplified the power of single-target biomarkers to guide therapeutic decisions [9]. This "one drug, one biomarker" model became the gold standard for drug development in oncology and beyond, enabling more precise targeting of treatments to patients most likely to benefit. Similarly, EGFR mutations in lung cancer became crucial predictors of response to tyrosine kinase inhibitors, transforming treatment outcomes for specific molecular subsets of patients [9].

Methodological Contributions and Diagnostic Frameworks

The single-target approach established essential methodological frameworks that continue to underpin biomarker research. It developed standardized assay validation protocols, reference standards, and analytical performance metrics that ensured reliability and reproducibility in clinical measurements [7]. The rigorous validation pathways established for these biomarkers created templates for regulatory approval processes, with clear evidence requirements for analytical validity, clinical validity, and clinical utility [6].

The technological legacy of this era is equally significant. Single-target discovery drove innovations in assay sensitivity, specificity, and reproducibility across various testing platforms. It established core laboratory methodologies including PCR-based genotyping, immunoassay development, and chromatographic techniques for measuring small molecules [8]. These technical advances created the foundation upon which modern multiplexed assays would later be built. The clinical diagnostic paradigms established through single-target biomarkers—including companion diagnostics, laboratory-developed tests, and standardized reporting frameworks—created the infrastructure necessary for integrating molecular information into routine clinical decision-making [7] [9].

Table 1: Historic Single-Target Biomarkers and Their Clinical Impact

| Biomarker | Disease Context | Clinical Application | Impact |

|---|---|---|---|

| HER2 | Breast Cancer | Predicts response to trastuzumab and other HER2-targeted therapies | Established paradigm for targeted therapy in molecularly-defined subsets |

| EGFR mutations | Non-Small Cell Lung Cancer | Predicts response to EGFR tyrosine kinase inhibitors | Transformed treatment landscape for lung cancer, improving outcomes in molecularly selected patients |

| BRCA1/2 mutations | Hereditary Breast and Ovarian Cancer | Risk assessment and prevention strategies | Enabled prophylactic interventions and personalized screening protocols |

| PD-L1 expression | Multiple Cancers | Guides immunotherapy decisions | Identifies patients most likely to benefit from immune checkpoint inhibitors, though with limitations |

| KRAS mutations | Colorectal Cancer | Predicts resistance to anti-EGFR therapy | Prevents ineffective treatments and spares patients from unnecessary toxicity |

Limitations of the Single-Target Approach

Biological Complexity and Disease Heterogeneity

The fundamental limitation of single-target biomarker discovery lies in its inability to capture the multidimensional nature of most disease processes. Complex diseases arise from dysregulated networks of molecular interactions rather than isolated defects in single pathways [10] [11]. This biological reality means that measuring individual components often provides an incomplete picture of disease pathogenesis, progression, or therapeutic responsiveness. The reductionist approach inherently oversimplifies diseases that are themselves complex adaptive systems with emergent properties not predictable from individual components [10].

This limitation manifests clinically as inconsistent predictive value across diverse patient populations. For example, while PD-L1 expression helps guide immunotherapy decisions, response rates vary significantly even among patients with high PD-L1 expression, indicating that this single parameter cannot fully capture the complexity of tumor-immune interactions [9]. Similarly, the heterogeneity of tumors means that biopsies from different regions of the same tumor may show different biomarker expression patterns, leading to sampling errors and false negatives when relying on single-target measurements [3]. Spatial biology techniques have revealed that biomarker distribution patterns within tissues often carry crucial clinical information that is lost when simply measuring presence or absence [3].

Methodological and Technological Constraints

The single-target approach suffers from several methodological limitations that restrict its clinical utility. The "one biomarker at a time" discovery process is inherently inefficient, requiring separate development and validation pathways for each candidate biomarker [12]. This linear model significantly delays the translation of discoveries into clinical practice and contributes to the high failure rate of biomarker candidates, with only 0-2 new protein biomarkers achieving FDA approval per year across all diseases [12].

The statistical challenges are equally formidable. Single-target biomarkers often demonstrate inadequate sensitivity or specificity when applied broadly, leading to both false positives and false negatives with significant clinical consequences [12]. The "small n, large p" problem—where the number of potential features (genes, proteins, etc.) far exceeds the number of patient samples—makes it statistically difficult to identify truly meaningful signals without sophisticated multivariate analytical approaches [12]. Furthermore, the snapshot nature of most single-target measurements fails to capture the dynamic nature of disease processes and treatment responses, providing limited information about disease trajectory or evolving therapeutic resistance [12] [13].

Table 2: Limitations of Single-Target Biomarker Approaches

| Limitation Category | Specific Challenges | Clinical Consequences |

|---|---|---|

| Biological Complexity | Inability to capture pathway interactions and network dynamics | Incomplete understanding of disease mechanisms and compensatory pathways |

| Disease Heterogeneity | Tumor heterogeneity and spatial variation in biomarker expression | Sampling errors, false negatives, and incomplete prognostic information |

| Analytical Performance | Inadequate sensitivity/specificity for complex diseases | Misdiagnosis, missed diagnoses, and incorrect treatment assignments |

| Technological Constraints | Static measurements that miss dynamic disease processes | Inability to monitor real-time treatment response and evolving resistance mechanisms |

| Statistical Challenges | High false discovery rates with multiple hypothesis testing | Many biomarker candidates fail validation, wasting resources and delaying progress |

The Systems Biology Alternative: A Comparative Framework

Philosophical and Methodological Differences

Systems biology represents a paradigm shift from the reductionist approach, founded on the principle that biological systems must be understood as integrated networks rather than collections of isolated components [10]. Where reductionism seeks to simplify complexity by studying parts in isolation, systems biology embraces complexity by examining interactions and emergent properties of whole systems [10] [11]. This philosophical difference manifests methodologically through the use of high-throughput technologies, computational modeling, and network analysis to capture the multidimensional nature of biological processes [10].

The contrast between these approaches is evident in their respective workflows. While single-target discovery follows a linear path from hypothesis to validation of individual candidates, systems biology employs iterative cycles of computational modeling and experimental validation that continuously refine understanding of the entire system [10]. Rather than testing predefined hypotheses about specific molecules, systems approaches often begin with agnostic data collection across multiple biological layers (genomics, transcriptomics, proteomics, etc.), using computational methods to identify patterns that emerge from the data itself [8] [9]. This data-driven discovery process can reveal novel relationships that would not have been hypothesized through traditional reductionist frameworks.

Technological and Analytical Advancements

The systems approach is enabled by technological advances that allow comprehensive molecular profiling at multiple levels. Multi-omics platforms simultaneously capture data from genomics, transcriptomics, proteomics, and metabolomics, providing a layered view of biological systems that captures their inherent complexity [8] [6] [13]. Spatial biology techniques preserve the architectural context of biomarkers within tissues, revealing how cellular organization and proximity influences function—information completely lost in single-target approaches that homogenize tissues [3]. Single-cell analysis technologies resolve cellular heterogeneity that is averaged out in bulk measurements, identifying rare cell populations that may drive disease progression or treatment resistance [13].

The analytical framework of systems biology represents an equally significant advancement. Network analysis using tools like Cytoscape maps molecular interactions to identify key regulatory nodes and pathways [10] [11]. Artificial intelligence and machine learning algorithms detect complex, non-linear patterns in high-dimensional data that escape conventional statistical methods [8] [7] [9]. These computational approaches can integrate multimodal data—combining molecular profiles with clinical information, medical images, and real-world evidence—to generate more comprehensive biomarkers that better reflect biological reality [7] [9].

Diagram 1: Comparison of reductionist and systems biology approaches to biomarker discovery shows fundamental differences in process flow and philosophy.

Comparative Experimental Data: Single-Target vs. Systems Approaches

Direct Methodological Comparisons

The contrast between single-target and systems approaches becomes evident when examining their application to specific disease contexts. In inflammatory bowel disease (IBD), traditional single-target studies focused on individual cytokines (e.g., TNF, IL6) or genetic variants (e.g., NOD2) provided limited insights into the complex pathophysiology distinguishing Crohn's disease from ulcerative colitis [11]. When researchers applied a systems biology approach—constructing causal biological network models that integrated multiple signaling pathways—they identified distinct network perturbation patterns between these related conditions [11]. The systems model revealed that in the "intestinal permeability" network, programmed cell death factors were downregulated in Crohn's disease but upregulated in ulcerative colitis, while in the "wound healing" network, pro-healing factors showed opposite regulation patterns between the two diseases [11].

Similar advantages emerge in oncology. While single-target biomarkers like HER2 or EGFR mutations provide valuable but limited information, AI-powered analysis of multi-omics data can identify composite biomarker signatures with superior predictive power [7] [9]. For example, in colorectal cancer, deep learning analysis of standard histopathology images identified prognostic patterns that outperformed established molecular and morphological markers [7]. These systems-level biomarkers capture the complex interactions between tumor cells, immune infiltrates, and stromal components that single-target approaches cannot represent [3] [9].

Performance Metrics and Validation Outcomes

Quantitative comparisons demonstrate the enhanced performance of systems approaches across multiple metrics. Single-target biomarkers typically show moderate accuracy (often 70-80% sensitivity/specificity) for complex endpoints, reflecting their inherent limitation of reducing multidimensional biology to univariate measurements [12] [9]. In contrast, multimodal AI biomarkers that integrate genomic, imaging, and clinical data have demonstrated 15% improvement in survival risk prediction in phase 3 clinical trials compared to traditional approaches [9].

The validation outcomes further highlight these differences. The development pathway for single-target biomarkers is characterized by high attrition rates, with the "verification tar pit" consuming up to $2 million and over a year per candidate, often ending in failure [12]. Systems approaches that identify biomarker panels or signatures face different validation challenges but demonstrate better generalizability across diverse populations when properly developed [8] [12]. The validation of single-target biomarkers typically requires thousands of samples to achieve adequate statistical power, while systems approaches using machine learning may require even larger datasets but can extract more information from each sample [12] [9].

Table 3: Quantitative Comparison of Single-Target vs. Systems Biology Approaches

| Performance Metric | Single-Target Approach | Systems Biology Approach |

|---|---|---|

| Development Timeline | Years for single candidates | Months for signature discovery |

| Attrition Rate | Very high (>95% failure) | High but with more validated outputs per study |

| Predictive Accuracy for Complex Diseases | Moderate (typically 70-80% AUC) | Higher (typically 80-90% AUC for best validated models) |

| Biological Coverage | Narrow (single pathway) | Comprehensive (multiple interacting pathways) |

| Handling of Heterogeneity | Poor (misses spatial and temporal variation) | Better (can incorporate spatial context and dynamics) |

| Clinical Implementation | Simpler regulatory path | More complex validation requirements |

| Cost per Candidate | Up to $2M verification cost | Higher initial investment but more information per study |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Core Technologies for Biomarker Discovery

Transitioning from single-target to systems biomarker discovery requires both conceptual shifts and adoption of new technological platforms. The modern biomarker discovery toolkit encompasses technologies that enable comprehensive molecular profiling, spatial contextualization, and computational integration of diverse data types [6] [3]. Multi-omics profiling platforms form the foundation, with next-generation sequencing providing genomic and transcriptomic data, mass spectrometry enabling proteomic and metabolomic measurements, and emerging technologies like spatial transcriptomics capturing molecular information within architectural context [6] [3]. For example, Element Biosciences' AVITI24 system combines sequencing with cell profiling to simultaneously capture RNA, protein, and morphological data, while 10x Genomics platforms enable millions of cells to be analyzed at once [6].

Advanced model systems constitute another critical component of the modern toolkit. Organoid cultures recapitulate the complex architecture and functions of human tissues more faithfully than traditional 2D cell lines, making them valuable for functional biomarker screening and target validation [3]. Humanized mouse models incorporate human immune system components, enabling studies of human-specific tumor-immune interactions and immunotherapy response biomarkers [3]. When used in conjunction with multi-omics technologies, these advanced models enhance the translational relevance of biomarker discoveries by better mimicking human biology and disease processes [3].

The computational infrastructure for systems biomarker discovery represents perhaps the most significant departure from traditional approaches. AI and machine learning platforms are essential for analyzing the high-dimensional data generated by multi-omics technologies [7] [9]. These include deep learning algorithms for pattern recognition in complex datasets, natural language processing for extracting insights from clinical narratives, and explainable AI methods that make computational predictions interpretable to clinicians [7] [9]. Open-source resources like the Digital Biomarker Discovery Pipeline (DBDP) provide standardized toolkits and reference methods that promote reproducibility and collaboration [12].

Data management and integration systems form the backbone of modern biomarker discovery operations. Federated learning approaches enable analysis across distributed datasets without moving sensitive patient data, addressing privacy concerns while maximizing available information [9]. Cloud computing platforms provide the scalable computational resources needed for large-scale multi-omics analyses, while laboratory information management systems (LIMS) and electronic data capture systems maintain sample integrity and data quality throughout the discovery pipeline [6] [12]. Together, these technologies create an integrated ecosystem that supports the complex, data-intensive workflow of systems biomarker discovery from initial measurement through clinical validation.

Diagram 2: Modern systems biology workflow for biomarker discovery integrates multiple data types and emphasizes computational analysis.

Table 4: Essential Research Reagent Solutions for Modern Biomarker Discovery

| Technology Category | Specific Tools/Platforms | Primary Function | Key Applications |

|---|---|---|---|

| Multi-Omics Profiling | Next-generation sequencing, Mass spectrometry, Microarrays | Comprehensive molecular measurement across biological layers | Biomarker identification, Pathway analysis, Molecular subtyping |

| Spatial Biology | Multiplex immunohistochemistry, Spatial transcriptomics, Imaging mass cytometry | Preserve architectural context of biomarkers within tissues | Tumor microenvironment characterization, Cellular interaction mapping |

| Single-Cell Technologies | Single-cell RNA sequencing, CyTOF, Cellular indexing | Resolve cellular heterogeneity masked in bulk measurements | Rare cell population identification, Cellular trajectory reconstruction |

| Advanced Model Systems | Organoids, Humanized mouse models, 3D culture systems | Better mimic human biology and disease processes | Functional biomarker validation, Therapeutic response prediction |

| Computational Platforms | AI/ML algorithms, Network analysis tools, Cloud computing | Analyze high-dimensional data and identify complex patterns | Predictive model development, Biomarker signature discovery |

The historical context of single-target biomarker discovery reveals both remarkable achievements and inherent limitations. The reductionist approach produced foundational biomarkers that transformed diagnostic and therapeutic paradigms in multiple disease areas, particularly oncology, while establishing methodological standards and regulatory pathways that continue to guide biomarker development [7] [9]. Its limitations in addressing complex, multifactorial diseases reflect not scientific failure but rather the boundary of what was technologically and conceptually possible during its ascendancy [10].

The ongoing shift toward systems biology does not render single-target approaches obsolete but rather recontextualizes them within a more comprehensive framework [8] [10]. Single-target biomarkers continue to provide clinical value in specific contexts where diseases are driven by discrete molecular events. However, for most complex diseases, the future lies in integrated approaches that combine the methodological rigor of reductionism with the comprehensive perspective of systems biology [11] [9]. This synthesis leverages technological advances in multi-omics profiling, spatial biology, and computational analysis to develop biomarker signatures that better reflect the multidimensional nature of health and disease [6] [13].

The most productive path forward recognizes that these approaches are complementary rather than contradictory. Single-target biomarkers provide focused insights with clear clinical actionability, while systems approaches capture the complexity that single targets miss [10] [9]. The future of biomarker discovery lies not in choosing between these paradigms but in developing frameworks that integrate their respective strengths, leveraging historical wisdom while embracing technological innovation to advance personalized medicine [8] [13].

Systems biology represents a fundamental paradigm shift in biological research, moving from the traditional reductionist approach to a holistic perspective that seeks to understand how biological components interact to form functional systems. Where reductionism focuses on isolating and studying individual biological parts—single genes, proteins, or pathways—systems biology investigates the complex networks of interactions that give rise to emergent behaviors not predictable from individual components alone [14] [15]. This philosophical shift began in the early 20th century as scientists recognized the limitations of purely mechanistic approaches that interpreted organisms as simple clockwork-like machines [14].

The foundational revolution in systems thinking accelerated with Roger Williams' groundbreaking 1956 work, which compiled extensive evidence of molecular, physiological, and anatomical individuality in animals [14]. Williams demonstrated that normal, healthy individuals exhibit enormous variation—often 20 to 50-fold differences in biochemical, hormonal, and physiological parameters—revealing that the "average individual" is a statistical abstraction rather than a biological reality [14]. This evidence directly contradicted strict mechanistic views and revealed that living systems possess robust compensation mechanisms that maintain function despite significant molecular variation, a core systems property [14].

Table 1: Fundamental Contrasts Between Reductionist and Systems Biology Approaches

| Aspect | Reductionist Approach | Systems Biology Approach |

|---|---|---|

| Primary Focus | Isolated components | Networks and interactions |

| Core Philosophy | Breaking down systems into constituent parts | Understanding emergence from system interactions |

| Methodology | Studies elements in isolation | Studies systems as integrated wholes |

| Variability Treatment | Often considered noise | Recognized as biologically significant |

| Modeling Approach | Linear causality | Nonlinear, dynamic networks |

| Experimental Design | Controlled, single-variable | Multi-parameter, high-throughput |

Core Principles of Systems Biology

Holism and Emergent Properties

The principle of holism constitutes the foundational tenet of systems biology, positing that "the whole is something over and above its parts and not just the sum of them all" [14]. This Aristotelian concept, revitalized in modern systems science, emphasizes that biological systems exhibit emergent properties—unique characteristics possessed only by the whole system and not shared to any great degree by individual components in isolation [14] [15]. These emergent behaviors arise from the complex, dynamic interactions between system components and cannot be predicted by studying individual elements alone [16].

Living systems are characterized by their hierarchical organization, with systems nested within systems across multiple scales of complexity [14]. This hierarchical structure ranges from molecular networks and cellular systems to tissues, organs, organisms, and ecosystems. At each level, new properties emerge that are not present at lower levels, requiring specific approaches to study and understand these system-level behaviors [14]. The systems perspective recognizes that the structure of an entire system actually orchestrates and constrains the behavior of its component parts, creating downward causation effects that reductionist approaches cannot capture [14].

Networks and Interconnectivity

Biological networks represent the architectural framework through which emergent properties manifest in living systems. Systems biology represents biological relationships as interconnected networks where nodes symbolize system components (genes, proteins, metabolites) and connecting links represent interactions or reactions [10]. These networks can be constructed through various approaches: (1) de novo from direct experimental interactions; (2) by applying known interactions to experimental data using specialized software; or (3) through reverse engineering approaches that infer network structures from system behavior [10].

The interconnectivity within biological networks means that changes to one component inevitably influence others, often through complex feedback loops that can be either positive (amplifying changes) or negative (stabilizing systems) [16]. This network perspective reveals that biological functions are rarely regulated by single molecules but rather emerge from the coordinated interactions of multiple system components [10]. Understanding the network topology—the specific patterns of connections—becomes essential for identifying key regulatory points and understanding system dynamics and robustness [17] [16].

Diagram 1: Conceptual Framework of Systems Biology

Integration of Multi-Scale Data

Integration represents the methodological cornerstone of systems biology, enabling the synthesis of information across multiple biological levels and scales [15] [16]. This integrative approach combines diverse data types—genomic, transcriptomic, proteomic, metabolomic, and clinical—to construct comprehensive models of biological systems [17] [15]. The emergence of multi-omics technologies has transformed systems biology by providing extensive datasets that cover different biological layers, enabling a more profound comprehension of biological processes and interactions [15].

The integration process follows a cyclical framework of theory, computational modeling, hypothesis generation, experimental validation, and model refinement [15]. This iterative cycle accelerates discovery and enhances the reliability of predictions [18]. Successful integration requires sophisticated computational tools and methods for data integration and mining, including network analysis, machine learning, and pathway enrichment approaches [15] [16]. These methodologies enable researchers to extract meaningful patterns and insights from integrated datasets, moving beyond simple correlation to establish causal relationships within biological systems [10] [11].

Methodological Framework: The Systems Biology Toolkit

Computational and Modeling Approaches

Systems biology employs both top-down and bottom-up modeling strategies to understand biological complexity [15]. The top-down approach begins with system-level observational data, typically from high-throughput 'omics' technologies, and works downward to identify molecular interaction networks and generate hypotheses about regulatory mechanisms [15]. In contrast, the bottom-up approach starts from detailed mechanistic knowledge of individual components and their interactions, building upward to reconstruct system behavior from first principles [15].

Table 2: Computational Modeling Methods in Systems Biology

| Model Type | Key Features | Typical Applications |

|---|---|---|

| Ordinary Differential Equations (ODE) | Captures continuous dynamics of molecular interactions | Signaling pathways, metabolic networks |

| Boolean Networks | Simplified logical (ON/OFF) representation of component states | Gene regulatory networks, cellular fate decisions |

| Agent-Based Models | Simulates behaviors of individual entities and their interactions | Cellular populations, tissue organization |

| Network Models | Graph-based representation of component relationships | Protein-protein interaction maps, disease mechanism analysis |

| Multi-Scale Models | Integrates processes across different temporal and spatial scales | Organ-level physiology, host-pathogen interactions |

The bottom-up approach is particularly valuable in pharmaceutical applications, as it facilitates the translation of drug-specific in vitro findings to the in vivo human context [15]. This includes predicting drug exposure through physiologically based pharmacokinetic (PBPK) modeling and translating in vitro data on drug-ion channel interactions to physiological effects [15]. The separation of drug-specific, system-specific, and trial design parameters enables predictions of exposure-response relationships that account for inter- and intra-individual variability, making this approach particularly valuable for population-level drug effect assessments [15].

Experimental and Analytical Technologies

Modern systems biology relies on high-throughput technologies that enable the simultaneous measurement of thousands of system components [15] [16]. These technologies include next-generation sequencing for genomic characterization, mass spectrometry for proteomic and metabolomic profiling, and advanced imaging techniques for spatial and temporal analysis of biological systems [16]. The massive datasets generated by these technologies necessitate sophisticated computational infrastructure and bioinformatic tools for data management, processing, and analysis [10].

Network analysis represents a core analytical approach in systems biology, leveraging mathematical tools from Graph Theory to identify key regulatory nodes, network motifs, and functional modules within biological systems [10]. Software platforms like Cytoscape provide versatile environments for complex network visualization and analysis [10] [11]. The emerging integration of machine learning and artificial intelligence approaches further enhances the ability to detect hidden patterns in multi-omics data and predict system behaviors under different conditions [19] [18].

Diagram 2: Systems Biology Research Workflow

Comparative Analysis: Systems Biology vs. Reductionist Biomarker Approaches

Philosophical and Methodological Differences

The fundamental distinction between systems biology and reductionist biomarker approaches lies in their treatment of biological complexity. While reductionist methods typically seek to minimize complexity through controlled experiments that isolate single variables, systems biology embraces complexity by simultaneously measuring multiple system components and analyzing their interactions [15]. Reductionist approaches have proven highly successful in identifying individual biological components and their specific functions but offer limited capacity for understanding how system properties emerge from interactions [15].

Reductionist biomarker strategies typically focus on identifying single molecules or linear pathways as diagnostic or therapeutic indicators [10]. In contrast, systems biology recognizes that most biological features are determined by complex interactions among multiple system components, and therefore focuses on identifying biomodules—groups of interacting molecules that regulate discrete functions—and their interrelationships within larger networks [10]. This network perspective enables a more comprehensive understanding of disease mechanisms and treatment responses that cannot be captured by single biomarkers alone.

Practical Applications in Drug Development

The application of systems biology in pharmaceutical research has demonstrated significant advantages over traditional reductionist approaches, particularly for complex diseases involving multiple interacting pathways [11] [18]. Quantitative Systems Pharmacology (QSP) has emerged as a powerful application of systems biology in drug development, leveraging comprehensive biological models to simulate drug behaviors, predict patient responses, and optimize development strategies [20]. QSP approaches enable more informed decisions in drug discovery, potentially reducing development costs and bringing safer, more effective therapies to patients faster [20].

Table 3: Comparison of Applications in Inflammatory Bowel Disease Research

| Research Aspect | Reductionist Biomarker Approach | Systems Biology Approach |

|---|---|---|

| Barrier Function Analysis | Focuses on single tight junction proteins | Models integrated programmed cell death and tight junction networks |

| Inflammatory Response | Measures individual cytokines (e.g., TNF, IL6) | Captures PPARG, IL6, and IFN pathway interactions |

| Disease Differentiation | Relies on single discriminatory markers | Identifies distinct network perturbation patterns for CD vs. UC |

| Therapeutic Targeting | Targets single pathways | Identifies central network nodes and combination strategies |

| Personalization | Limited by single-molecule variability | Accounts for compensatory mechanisms within networks |

A concrete example of the systems approach can be found in Inflammatory Bowel Disease (IBD) research, where causal biological network models have been developed to represent signaling pathways contributing to Crohn's disease and ulcerative colitis [11]. These models integrate scientific knowledge using Biological Expression Language (BEL) to create computable network models that capture complex relationships between biological entities [11]. When scored with transcriptomic data from diseased tissues, these network models reveal distinct perturbation patterns between different IBD forms, providing mechanistic insights that single biomarker approaches cannot deliver [11].

Case Study: Network Analysis in Inflammatory Bowel Disease

Experimental Protocol and Workflow

The systems biology approach to IBD research exemplifies the power of network-based analysis for understanding complex disease mechanisms [11]. The research follows a structured workflow beginning with comprehensive literature curation to identify known signaling pathways involved in barrier defence, inflammatory processes, and wound healing in IBD [11]. This knowledge is formalized using Biological Expression Language (BEL), which converts relationships between biomolecules into cause-and-effect statements using controlled vocabularies that facilitate computational analysis [11].

Each BEL statement consists of a source, relationship, and target, where biological entities are defined by specific functions (RNA abundances, protein abundances, protein activities, etc.) and referenced using standard namespaces [11]. Contextual details including species, cell type, and disease state are captured as annotations with each statement [11]. The curated BEL statements are then compiled into network models using the OpenBEL framework and reviewed using Cytoscape to identify gaps and ensure completeness [11]. These computable network models enable quantitative analysis of transcriptomic data from diseased tissues, providing insights into network perturbations associated with specific disease states [11].

Key Findings and Comparative Insights

Application of this systems biology approach to IBD revealed distinct network perturbation patterns that differentiate Crohn's disease from ulcerative colitis [11]. In the "intestinal permeability" model, programmed cell death factors were downregulated in Crohn's disease but upregulated in ulcerative colitis [11]. The "inflammation" model highlighted PPARG, IL6, and IFN-associated pathways as prominent regulatory factors in both diseases, but with distinct interaction patterns [11]. Most strikingly, in the "wound healing" model, factors promoting wound healing were upregulated in Crohn's disease but downregulated in ulcerative colitis, providing mechanistic insights into their different clinical presentations and progression patterns [11].

These findings demonstrate how systems biology approaches can capture complex, multidimensional differences between related disease states that reductionist biomarker approaches typically miss. By analyzing network-wide perturbation patterns rather than individual molecule changes, systems biology provides a more comprehensive understanding of disease mechanisms and potential therapeutic interventions [11].

Essential Research Reagents and Computational Tools

The implementation of systems biology research requires specialized reagents and computational resources that enable comprehensive system characterization and modeling. The following table details key solutions essential for conducting systems biology investigations, particularly those focused on network analysis and multi-omics integration.

Table 4: Essential Research Reagent Solutions for Systems Biology

| Reagent/Tool | Primary Function | Application Example |

|---|---|---|

| OpenBEL Framework | Compiles biological relationships into computable network models | Formalizing causal relationships in IBD pathway models [11] |

| Cytoscape | Network visualization and analysis | Reviewing and analyzing biological network models [10] [11] |

| Ingenuity Pathway Analysis | Known interaction mapping from experimental data | Building biological networks from gene lists [10] |

| String Database | Protein-protein interaction data source | Constructing interaction networks from proteomic data [10] |

| Multi-omics Platforms | Simultaneous measurement of multiple biological layers | Integrating genomic, transcriptomic, proteomic data [15] [16] |

| High-Throughput Sequencers | Comprehensive molecular profiling | Generating genome-wide transcriptomic data [16] |

| Mass Spectrometers | Proteomic and metabolomic characterization | Quantitative measurement of protein abundances [10] |

Systems biology represents more than just a collection of computational techniques—it constitutes a fundamental philosophical shift in how we approach biological complexity [14] [15]. By focusing on networks, emergent properties, and integration, systems biology provides a powerful framework for understanding biological systems in their full complexity, overcoming limitations of traditional reductionist approaches that necessarily isolate components from their physiological context [15]. The core tenets of systems biology—holism, interconnectivity, emergence, and dynamic integration—provide a more accurate representation of biological reality, where function arises from the coordinated interactions of multiple components across different scales of organization [14] [16].

The comparative analysis between systems biology and reductionist biomarker approaches reveals that these perspectives are not mutually exclusive but rather complementary [14]. Reductionist approaches excel at identifying components and their specific functions, while systems biology explains why these components are organized as they are and how their interactions give rise to system-level behaviors [14]. The most powerful research strategies integrate both approaches, using reductionist methods to characterize individual components and systems approaches to understand their functional integration [14].

As systems biology continues to evolve, its impact on therapeutic innovation and personalized medicine continues to grow [20] [18]. By providing holistic insights into disease mechanisms and guiding rational intervention strategies, systems biology represents an essential tool for advancing the next generation of therapies [18]. It bridges the critical gap between data generation and clinical decision-making, ensuring that the vast amounts of biological information generated by modern technologies are translated into meaningful therapeutic outcomes for patients [18]. The continued development of educational programs [20] and collaborative industry-academia partnerships [20] will be essential for training the next generation of scientists capable of leveraging these powerful approaches to address the complex biological challenges of the future.

For the past half-century, epidemiology and disease research have been dominated by a reductionist paradigm focused on isolating single causes of disease states [21]. This approach, rooted in Koch's postulates and the "one-gene/one-enzyme/one-function" concept, has successfully identified numerous causal relationships, such as smoking with lung cancer and asbestos with mesothelioma [21] [22] [23]. However, the growing recognition that factors at multiple biological levels—from genes and proteins to behavioral patterns and social determinants—influence health and disease has challenged this dominant epidemiological paradigm [21]. Complex chronic diseases such as diabetes, cancer, and Alzheimer's disease rarely follow simple linear causality but instead emerge from intricate networks of interacting elements characterized by dynamic feedback loops, reciprocal relations, and non-linear interactions [22] [23] [24]. This article objectively compares these competing philosophies—linear causality versus complex network interactions—examining their foundational principles, methodological approaches, and applications in drug development and precision medicine.

The limitations of reductionist approaches become evident when considering diseases like obesity, where causative factors span endogenous elements (genes, epigenetic factors), individual-level behaviors (diet, exercise), neighborhood-level influences (food availability, walking environment), and even national-level policies (agricultural support, food programs) [21]. Similarly, Alzheimer's disease manifests with highly variable presentation influenced by genetic inheritance, age at onset, sex differences, environmental exposures, and polygenic risk scores, making simple linear models inadequate for capturing its complexity [24]. This recognition has catalyzed a methodological shift toward complex systems dynamic computational models that can better represent the multiscale, interactive nature of disease pathogenesis [21] [22].

Conceptual Foundations: Core Principles and Philosophical Frameworks

Linear Causality Model

The linear causality model, rooted in 19th-century germ theory and Koch's postulates, operates on the fundamental principle that specific, isolatable agents cause corresponding diseases [22]. This reductionist approach seeks to isolate independent factors that directly cause disease states, using conceptual frameworks such as the sufficient-component causal model and counterfactual paradigm to establish causation [21]. The methodology predominantly employs regression-based models—including multivariable and multilevel regression—that assess relationships between "independent" variables and disease outcomes while controlling for potential confounders [21] [22]. This paradigm conceptualizes diseases as having singular, actionable causes and forms the philosophical foundation for much of contemporary evidence-based medicine, particularly in establishing causal relationships between risk factors and diseases [21].

Complex Network Interaction Model

The complex network interaction model conceptualizes diseases as emergent properties of perturbed biological systems rather than isolated malfunctions [23] [25]. This framework recognizes that cellular networks operate through specific laws and principles, and that phenotypes result from perturbations to these interconnected systems [23]. The approach utilizes interactome networks—simplified representations of cellular systems as nodes (biological components) and edges (interactions between them)—to model disease pathogenesis [23] [26]. Methodologically, it employs computational approaches such as agent-based modeling, network diffusion algorithms, and machine learning applied to multiscale data [21] [27] [26]. This philosophy fundamentally challenges linear causality by acknowledging reciprocal relationships (where causes and effects influence each other), dynamic feedback loops, and the absence of predictable parametric relations in biological systems [21].

Table 1: Fundamental Principles of Each Approach

| Principle | Linear Causality Model | Complex Network Interaction Model |

|---|---|---|

| Causal Structure | Unidirectional, deterministic | Multidirectional, probabilistic |

| System View | Reductionist, focusing on isolated components | Holistic, focusing on system interactions |

| Disease Emergence | Direct consequence of specific causes | Emergent property of perturbed networks |

| Temporal Dynamics | Static relationships | Dynamic, feedback-driven evolution |

| Intervention Strategy | Target specific causal factors | Modulate network properties |

Visualizing the Conceptual Differences

The following diagram illustrates the fundamental structural differences between linear and network-based disease models:

Methodological Comparison: Analytical Approaches and Techniques

Data Requirements and Experimental Design

Linear approaches primarily rely on controlled experimental designs that isolate variables of interest, with data structures optimized for regression analyses [21]. These methods typically require clearly defined independent and dependent variables, with careful attention to confounding factors [21]. In contrast, network medicine integrates diverse omics datasets—genomics, transcriptomics, proteomics, metabolomics—to construct comprehensive interactome networks that capture the complexity of biological systems [23] [28]. The multiscale interactome approach further incorporates biological functions into protein-protein interaction networks, creating hierarchical networks that span from molecular interactions to organism-level phenotypes [26]. The integration of imaging data with omics datasets represents another advancement, enabling researchers to link brain-level functional and structural changes to molecular-level alterations in neurodegenerative diseases like Alzheimer's [24].

Key Analytical Techniques

Linear methodologies employ regression-based techniques including multivariable regression, logistic regression, and multilevel (hierarchical) models that estimate the effects of specific variables while controlling for others [21]. While these methods are powerful for identifying isolated relationships, they struggle with reciprocal relations between exposures and outcomes, discontinuous relations, and changes in relationships over time [21]. Network-based approaches utilize diverse computational methods including agent-based modeling (simulating individual agents and their interactions) [21], network diffusion profiles (using random walks to model effect propagation) [26], and machine learning algorithms (such as Random Forest and XGBoost) that incorporate network topology and protein features to predict biomarker potential [27].

Table 2: Methodological Approaches and Applications

| Methodology | Primary Techniques | Key Applications | Limitations |

|---|---|---|---|

| Regression-Based Models | Multivariable regression, multilevel modeling | Isolating independent risk factors, controlling for confounders | Poor handling of reciprocal relationships, non-linear dynamics |

| Agent-Based Modeling | Computer simulation of individual agents with defined interaction rules | Modeling population-level emergence from individual interactions, obesity epidemiology | Computational intensity, parameter specification challenges |

| Network Diffusion | Biased random walks on multiscale networks | Predicting drug-disease treatments, identifying therapeutic mechanisms | Network completeness, edge weight optimization |

| Machine Learning Integration | Random Forest, XGBoost on network features | Predictive biomarker identification, cancer signaling analysis | Interpretability challenges, training data requirements |

Experimental Workflow for Network-Based Drug Discovery

The following diagram outlines a generalized experimental workflow for identifying drug treatments using network-based approaches:

Performance Comparison: Quantitative Findings and Experimental Evidence

Predictive Accuracy in Drug-Disease Treatment

A systematic evaluation of the multiscale interactome approach demonstrated significant improvements in predicting drug-disease treatments compared to molecular-scale interactome methods that only consider physical interactions between proteins [26]. The multiscale approach achieved an AUROC of 0.705 versus 0.620 (+13.7%) and average precision of 0.091 versus 0.065 (+40.0%) [26]. This enhanced performance was particularly notable for entire drug classes such as hormones, which rely heavily on biological functions and cannot be accurately represented by approaches considering only physical interactions [26]. The study analyzed nearly 6,000 approved treatments spanning almost every category of human anatomy, exceeding the largest prior network-based study by tenfold [26].

Biomarker Discovery and Validation

Network-based approaches have demonstrated particular utility in identifying predictive biomarkers for targeted cancer therapies. The MarkerPredict framework, which integrates network motifs and protein disorder information, classified 3,670 target-neighbor pairs with 32 different machine learning models achieving 0.7-0.96 leave-one-out-cross-validation accuracy [27]. By defining a Biomarker Probability Score (BPS) as a normalized summative rank of the models, the method identified 2,084 potential predictive biomarkers for targeted cancer therapeutics, with 426 classified as biomarkers by all four calculations [27]. This systematic approach demonstrates how network properties can enhance biomarker discovery beyond linear association studies.

Quantitative Comparison of Methodological Performance

Table 3: Experimental Performance Metrics Across Methodologies

| Performance Metric | Linear Regression Models | Multiscale Network Approach | Improvement |

|---|---|---|---|

| Drug-Disease Prediction AUROC | 0.620 | 0.705 | +13.7% |

| Drug-Disease Prediction Average Precision | 0.065 | 0.091 | +40.0% |

| Recall@50 | 0.264 | 0.347 | +31.4% |

| Biomarker Prediction Accuracy (LOOCV) | N/A | 0.7-0.96 | N/A |

| Therapeutic Coverage | Limited to direct targets | Extensive, including functional matches | Substantial |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing network approaches requires specialized computational resources and datasets. The following table outlines essential research reagents and their applications in complex disease modeling:

Table 4: Essential Research Reagents for Network Medicine

| Resource Category | Specific Examples | Function/Application |

|---|---|---|

| Protein Interaction Databases | SIGNOR, ReactomeFI, Human Cancer Signaling Network | Provide physical and functional interaction data for network construction |

| Biological Function Annotations | Gene Ontology (GO) terms | Annotate biological processes, molecular functions, and cellular components |

| Biomarker Databases | CIViCmine, DisProt | Provide validated biomarker information for model training and validation |

| ORFeome Collections | Human ORFeome libraries | Enable high-throughput interactome mapping using standardized open reading frames |

| Machine Learning Frameworks | Random Forest, XGBoost | Implement classification of potential biomarkers based on network features |

| Network Analysis Tools | FANMOD, Cytoscape | Identify network motifs and visualize complex biological networks |

Experimental Protocols for Key Methodologies

Multiscale Interactome Construction and Analysis

The multiscale interactome methodology integrates physical interactions between 17,660 human proteins (387,626 edges) with 9,798 biological functions from Gene Ontology (34,777 edges between proteins and biological functions, 22,545 edges between biological functions) [26]. The protocol involves: (1) compiling drug-target interactions (8,568 edges connecting 1,661 drugs to human proteins) and disease-protein associations (25,212 edges connecting 840 diseases to disrupted human proteins); (2) constructing the multiscale network by connecting proteins to biological functions according to established hierarchies; (3) computing diffusion profiles using biased random walks with optimized edge weights (wdrug, wdisease, wprotein, wbiological function, whigher-level biological function, wlower-level biological function); (4) comparing drug and disease diffusion profiles to predict treatments and identify relevant proteins and biological functions [26].

Predictive Biomarker Identification Using Network Motifs

The MarkerPredict protocol for identifying predictive biomarkers in oncology includes: (1) extracting three-nodal network motifs (triangles) from cancer signaling networks using FANMOD; (2) annotating intrinsically disordered proteins (IDPs) using DisProt, AlphaFold (pLLDT<50), and IUPred (score>0.5); (3) creating training sets from literature-curated positive controls (established predictive biomarkers) and negative controls (proteins not in biomarker databases); (4) training Random Forest and XGBoost machine learning models on network topological features and protein disorder annotations; (5) calculating Biomarker Probability Scores (BPS) as normalized summative ranks across models; (6) validating predictions through literature mining and experimental follow-up [27].

Discussion: Clinical Implications and Future Directions

Translation to Precision Medicine

The transition from linear causality to network-based approaches has profound implications for precision medicine. Network medicine provides a systems-level framework for understanding how genetic variants interact with environmental factors to produce disease phenotypes [28] [24]. In Alzheimer's disease research, integrating imaging data with omics datasets has enabled the identification of disease subtypes and the development of more personalized risk assessments [24]. Similarly, in oncology, network-based biomarker discovery approaches like MarkerPredict offer the potential to identify patients who will respond to targeted therapies, sparing others from unnecessary side effects [27]. The multiscale interactome's ability to explain treatment mechanisms even when drugs seem unrelated to the diseases they treat represents a significant advance in pharmacological understanding [26].

Limitations and Methodological Challenges

Despite their promise, network-based approaches face several important limitations. Incomplete interactome maps remain a fundamental challenge, as current networks likely miss important interactions and context-specificities [23] [28]. The sheer complexity of biological systems presents interpretability challenges, particularly when integrating across multiple biological scales [28]. Additionally, network medicine requires sophisticated computational infrastructure and specialized expertise that may not be readily available in all research settings [25] [28]. For linear models, their relative simplicity, established statistical frameworks, and interpretability maintain their utility for many research questions, particularly when investigating specific, well-defined causal pathways [21].

Emerging Innovations and Future Perspectives

The field of network medicine is rapidly evolving with several promising directions. The incorporation of temporal dynamics through longitudinal network analysis could capture disease progression more accurately than static networks [25] [28]. Advanced machine learning methods, particularly deep learning architectures, are being integrated with network approaches to enhance predictive power [27] [28]. Innovative modeling frameworks, including quantum mechanics-based approaches that represent individual health states as quantum superposition states, offer novel ways to capture the uncertainty and heterogeneity inherent in disease processes [29]. The continued development of more comprehensive and context-specific interactome maps will further enhance the resolution and accuracy of network-based disease models [23] [28].

The comparison between linear causality and complex network interactions in disease modeling reveals a nuanced landscape where each approach offers distinct advantages and limitations. Linear models provide conceptual clarity and statistical rigor for investigating specific causal pathways, while network approaches better capture the systemic complexity of multifactorial diseases. Rather than a wholesale replacement of one paradigm by the other, the future of disease research likely lies in their strategic integration—using linear approaches for well-defined causal questions and network methods for understanding system-level dynamics. This complementary use of methodologies, leveraging the respective strengths of each, promises to accelerate progress toward more effective, personalized approaches for understanding, preventing, and treating complex diseases.

The classical reductionist approach in biological research has historically focused on the identification and characterization of isolated components of living organisms. While successful in cataloging individual biological elements, this perspective has proven inadequate for clarifying the complex interaction mechanisms between components and predicting how alterations in single or multiple elements affect entire system dynamics [30]. In contrast, systems biology represents a fundamental shift in perspective, aiming to understand biology at the system level through functional analysis of the structure and dynamics of cells and organisms [30]. This discipline focuses not on isolated components, but on the complex network of interactions between genes, proteins, metabolites, and other biomolecules that collectively give rise to biological function [30].

The emergence of systems biology as a practical discipline has been catalyzed by the data revolution brought about by high-throughput omics technologies. These technologies enable comprehensive, large-scale analysis of diverse biomolecular layers, including the genome, epigenome, transcriptome, and proteome [31]. The ability to simultaneously examine entire systems rather than single genes or proteins has transformed our approach to understanding health and disease, particularly for complex disorders known to be caused by combinations of genetic, environmental, immunological, and neurological factors [30]. This article examines how these technological advances have enabled a systems-level understanding of biology, comparing the performance of different approaches and methodologies that form the foundation of modern biological research.

The Enabling Technologies: A Multi-Layered View of Biology

High-throughput omics technologies have revolutionized biological research by providing unprecedented insights into the complexity of living systems at multiple molecular levels [32]. The integration of data from these complementary technologies provides a more holistic and representative understanding of the complex molecular mechanisms that underpin biology [31].

Table 1: High-Throughput Omics Technologies and Their Applications

| Omics Type | Key Technologies | Biological Focus | Research Applications |

|---|---|---|---|

| Genomics | Next-generation sequencing (NGS) | DNA structure, function, and variation | Identifying genetic mutations, understanding disease genetics [32] [31] |

| Epigenomics | DNA methylation analysis, ChIP-Seq | Modifications of DNA and DNA-associated proteins | Studying gene regulation, understanding epigenetic influences on disease [32] [31] |

| Transcriptomics | RNA sequencing (RNA-Seq) | RNA transcripts and gene expression regulation | Analyzing gene expression changes, understanding regulatory mechanisms [32] [31] |

| Proteomics | Mass spectrometry, affinity-based methods | Protein identification, quantification, and modification | Understanding protein functions, identifying biomarkers and therapeutic targets [32] [31] |

| Metabolomics | NMR spectroscopy, mass spectrometry | Metabolite profiles and metabolic pathways | Identifying metabolic changes, understanding pathways and disease mechanisms [32] |

| Single-cell Omics | Single-cell sequencing | Cellular heterogeneity at multiple molecular levels | Investigating cellular heterogeneity, understanding cell functions in development and disease [32] |

The true power of these technologies emerges through their integration in a multi-omics approach. Studying each molecular layer in isolation can only reveal part of the biological picture, while bringing all these different layers together provides a more complete understanding of human biology and disease [31]. For example, combining genomics and proteomics allows researchers to directly link genotype to phenotype, while integrating transcriptomics and proteomics provides insights into how gene expression affects protein function and phenotypic outcomes [31]. This integrative approach is essential for unraveling the complexity of cellular processes and disease mechanisms [32].

Comparative Analysis: Systems Biology Versus Reductionist Biomarker Approaches

Traditional reductionist approaches and modern systems biology methods differ fundamentally in their philosophy, methodology, and applications. The reductionist perspective has typically addressed the study of living organisms by focusing on isolated components rather than the complex system as a whole [30]. In contrast, systems biology employs a holistic perspective that examines the simultaneous interactions of multiple system elements [30].

Philosophical and Methodological Differences

The reductionist approach to biomarker discovery and therapeutic development typically focuses on single molecules or linear signaling pathways when identifying diagnostic biomarkers or drug targets [30] [33]. This "single-target-based" drug development approach has proven notably less effective for complex diseases, with lower probability of success and higher risk in addressing underlying disease biology [34]. The fundamental limitation of this approach lies in its inability to capture the emergent properties of biological systems that arise from complex networks of interactions [34].

Systems biology, conversely, recognizes that biological function is rarely regulated by a single molecule, but rather emerges from complex interactions among a cell's distinct components [30]. This perspective employs network analysis as a primary tool for representing biological relationships, leveraging mathematical tools from Graph Theory to understand system behavior [30]. In this framework, groups of interacting molecules that regulate discrete functions form biomodules whose interrelations create complex networks [30].

Performance Comparison in Disease Research

The practical differences between these approaches become evident when examining their application to complex disease research. A systems biology study of colorectal cancer (CRC) exemplifies the power of the network-based approach. Researchers identified 848 differentially expressed genes between normal and cancerous tissue, then constructed a protein-protein interaction (PPI) network which revealed 99 hub genes with high connectivity [33]. Clustering analysis dissected this network into seven interactive modules, providing a systems-level view of the molecular interactions driving CRC progression [33]. This approach identified several genes with high centrality in the PPI network that contribute to CRC progression, including CCNA2, CD44, and ACAN, which were found to correlate with poor patient prognosis [33].